Microsoft’s aggressive stance against the Pentagon’s decision to blacklist Anthropic ensures that Anthropic’s Claude AI products will remain integrated into key applications used by non-military clients. The decisive move underscores the technology giant’s commitment to navigating the complex landscape of artificial intelligence ethics amid national security concerns.

The conflict stems from a directive issued by President Trump ordering federal agencies to stop working with Anthropic after negotiations over a $200 million Pentagon contract fell through in July 2025. Anthropic had refused to accept conditions governing the permitted uses of Claude, saying it would not allow any applications involving mass domestic surveillance or weaponry, issues that remain contentious across the industry.

On Thursday, a letter from Secretary of War Pete designated Anthropic’s technology a supply-chain risk, barring defense contractors from using it while allowing a six-month wind-down period. Reports of Claude’s use in recent U.S. airstrikes against Iran drew attention to its covert role in intelligence synthesis and attack planning through Palantir integrations.

Microsoft’s Calculated Bet

Despite the blacklist, Microsoft’s legal team has cleared the continued use of Claude in platforms such as Microsoft 365 Copilot, GitHub Copilot, and AI Foundry, which serve millions of users, including many Department of Defense staff who relied on Microsoft 365 before the ban. Microsoft began integrating Claude in September 2025, offering it alongside OpenAI models, notably to help engineers generate code.

The partnership carries substantial financial stakes: Anthropic has committed $30 billion to Azure over several years, with the run-rate expected to approach half a billion dollars by early 2026, and Microsoft is poised to invest up to half a billion. In an October 2025 post, Microsoft CEO Satya Nadella described this strategy as “model choice.”

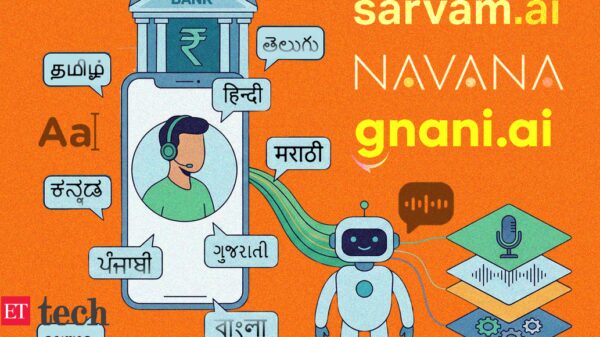

With a commanding 29% share of the enterprise AI assistant market, Claude serves 70% of Fortune 100 companies, speeds up development work by two to ten times, and maintains a 41% retention rate. The controversy over its use propelled it to the top of the U.S. App Store, where it briefly surpassed ChatGPT.

However, the blacklist jeopardizes Anthropic’s 300,000 partnerships and puts a projected $26 billion in 2026 revenue at risk for a company valued at $380 billion.

Firefight and the Legal Road Ahead

In response to the Pentagon’s actions, Anthropic has announced plans to pursue legal action, challenging the decision as both legally unsound and a dangerous precedent. CEO Dario Amodei emphasized that the company had “no option other than to appeal.” FCC Chairman Brendan Carr criticized Anthropic’s negotiation tactics, asserting that the firm had miscalculated its approach.

Analysts suggest that Microsoft’s defense of its commercial interests may ultimately shape the AI landscape, particularly the contracts that emerge after this split. However, protracted legal disputes could slow AI-warfare partnerships, shifting attention toward ethical frameworks and defense systems that prioritize open solutions. As Claude’s enterprise footprint expands, losses from the blacklist may be offset, which could help Anthropic’s expected IPO stabilize by the end of 2026.
