MiniMax, an AI development company based in China, has released its latest AI model, MiniMax M2.7, which has 229 billion parameters. First announced on March 18, 2026, the model is now free to download from platforms such as Hugging Face and ModelScope, and its reported benchmark results have drawn attention.

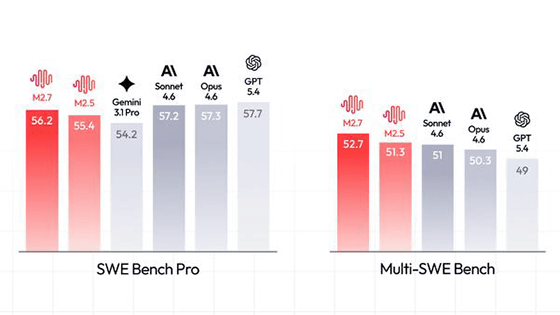

On April 12, 2026, MiniMax officially released M2.7 as an open-source model, highlighting what it describes as state-of-the-art (SOTA) results on two benchmarks: 56.22% on SWE-Pro and 57.0% on Terminal Bench 2. The company announced on Twitter, “We’re delighted to announce that MiniMax M2.7 is now officially open source. You can find it on Hugging Face now. Enjoy!” The model is hosted on Hugging Face at https://huggingface.co/MiniMaxAI/MiniMax-M2.7.

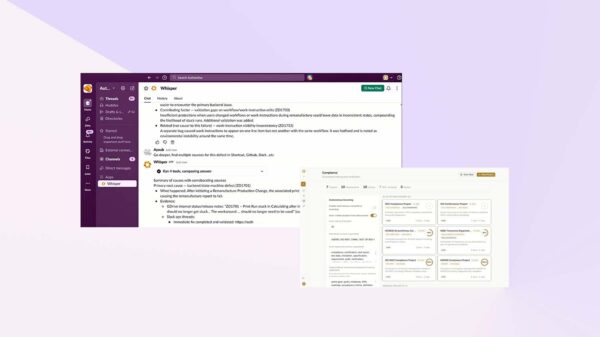

MiniMax M2.7 is designed to support multi-agent systems, in which several agents operate at the same time. The model was developed with a self-evolution mechanism, a repeating cycle of ‘problem analysis → correction plan → code modification → test execution → result comparison → application or discarding of changes.’ Each pass keeps a change only if the comparison step shows an improvement, which is intended to improve the model’s adaptability and performance over time.
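The cycle described above can be illustrated with a minimal Python sketch. It is not MiniMax's implementation: the function `self_evolve`, the `Candidate` class, and the toy helpers (`analyze`, `plan`, `modify`, `run_tests`, `TARGET`) are all hypothetical names chosen for illustration, and the "code" being improved is just a short string scored against a fixed target.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Candidate:
    code: str     # the artifact being improved (hypothetical stand-in for real code)
    score: float  # result of the most recent test run


def self_evolve(code: str,
                analyze: Callable, plan: Callable,
                modify: Callable, run_tests: Callable,
                rounds: int = 3) -> Tuple[Candidate, List[str]]:
    """Run the analyze -> plan -> modify -> test -> compare -> apply/discard loop."""
    best = Candidate(code, run_tests(code))
    history: List[str] = []
    for _ in range(rounds):
        problem = analyze(best.code, best.score)  # problem analysis
        fix = plan(problem)                       # correction plan
        new_code = modify(best.code, fix)         # code modification
        new_score = run_tests(new_code)           # test execution
        if new_score > best.score:                # result comparison
            best = Candidate(new_code, new_score)
            history.append("applied")             # keep the change
        else:
            history.append("discarded")           # drop the change
    return best, history


# Toy stand-ins (assumptions for the demo only).
TARGET = "abc"


def run_tests(code: str) -> float:
    # Fraction of positions that match the target string.
    return sum(a == b for a, b in zip(code, TARGET)) / len(TARGET)


def analyze(code: str, score: float) -> Optional[int]:
    # Report the first mismatching position, or None if there is none.
    for i, (a, b) in enumerate(zip(code, TARGET)):
        if a != b:
            return i
    return None


def plan(problem: Optional[int]) -> Optional[Tuple[int, str]]:
    # Propose replacing the faulty character with the expected one.
    return None if problem is None else (problem, TARGET[problem])


def modify(code: str, fix: Optional[Tuple[int, str]]) -> str:
    # Apply the planned replacement; leave the code unchanged if there is no plan.
    if fix is None:
        return code
    i, ch = fix
    return code[:i] + ch + code[i + 1:]


best, history = self_evolve("xbx", analyze, plan, modify, run_tests)
print(best.code, history)
```

In this toy run the first two rounds each fix one character and are applied, while the third round finds nothing to change and is discarded because the score does not improve. The strict `>` comparison is a design choice in the sketch: changes that merely tie the current score are dropped.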

Initial benchmark results indicate that MiniMax M2.7 outperforms other leading models, including Gemini 3.1 Pro and Claude Opus 4.6, on several tests. A third-party organization, Artificial Analysis, reported that MiniMax M2.7 delivers stronger agent performance than the Gemini 3.1 Pro Preview. These results are expected to strengthen MiniMax’s competitive position.

The open release of MiniMax M2.7 comes less than a month after its initial announcement, reflecting the company’s rapid development cycle. The model can be downloaded free of charge, but it is distributed under a non-commercial license: anyone who wants to use it in a business application must first obtain permission from MiniMax.

For those interested in exploring the technical specifications and performance metrics, the model’s code can be accessed on GitHub at https://github.com/MiniMax-AI/MiniMax-M2.7?tab=readme-ov-file. Additional information is also available on ModelScope at https://modelscope.cn/models/MiniMax/MiniMax-M2.7/summary.

The release of MiniMax M2.7 is a notable step for multi-agent systems, and it adds to the growing number of openly downloadable AI models available to researchers and developers worldwide. As competition in the AI sector intensifies, MiniMax’s approach may encourage further work on self-evolving models and their applications.
