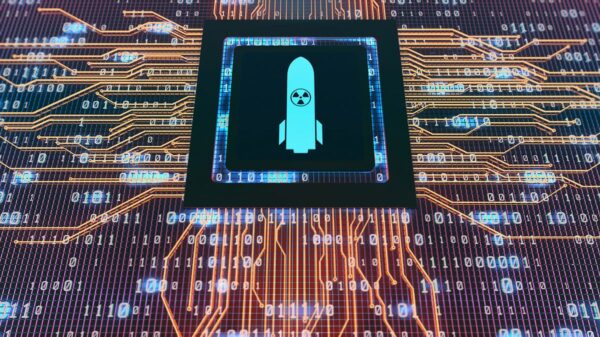

A movement to cancel ChatGPT gained momentum last week after the Pentagon selected OpenAI’s technology for military applications, but the turnout at a protest in San Francisco suggests that widespread public sentiment has not yet translated into significant action. About 50 demonstrators, organized under the banner “QuitGPT,” assembled outside OpenAI’s headquarters on Tuesday to express discontent over CEO Sam Altman’s perceived collaboration with the U.S. military.

Journalists arrived early, eager for coverage, while the protesters were slow to trickle in for the event’s scheduled start. Their motivations varied: some saw potential benefits in AI technology but condemned OpenAI’s partnership with the military amid ongoing conflicts, such as the U.S. engagement in Iran.

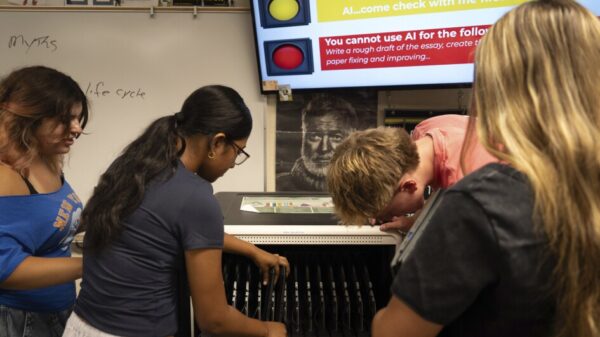

A 26-year-old tech worker from Oakland, wearing a cardboard robot mask with red LED eyes, shared his perspective. “I never go to protests. This is new for me,” he admitted, referencing his habitual use of AI for tasks ranging from programming to recipe generation. “We’re not normally political people. We’re techies, you know – we want to build stuff. What OpenAI is doing in terms of building legal mass surveillance technology for the government … is frankly, insane.”

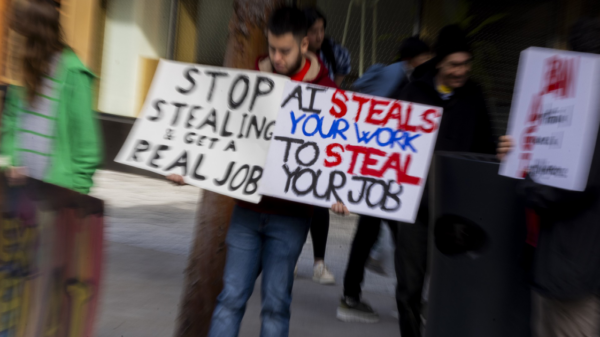

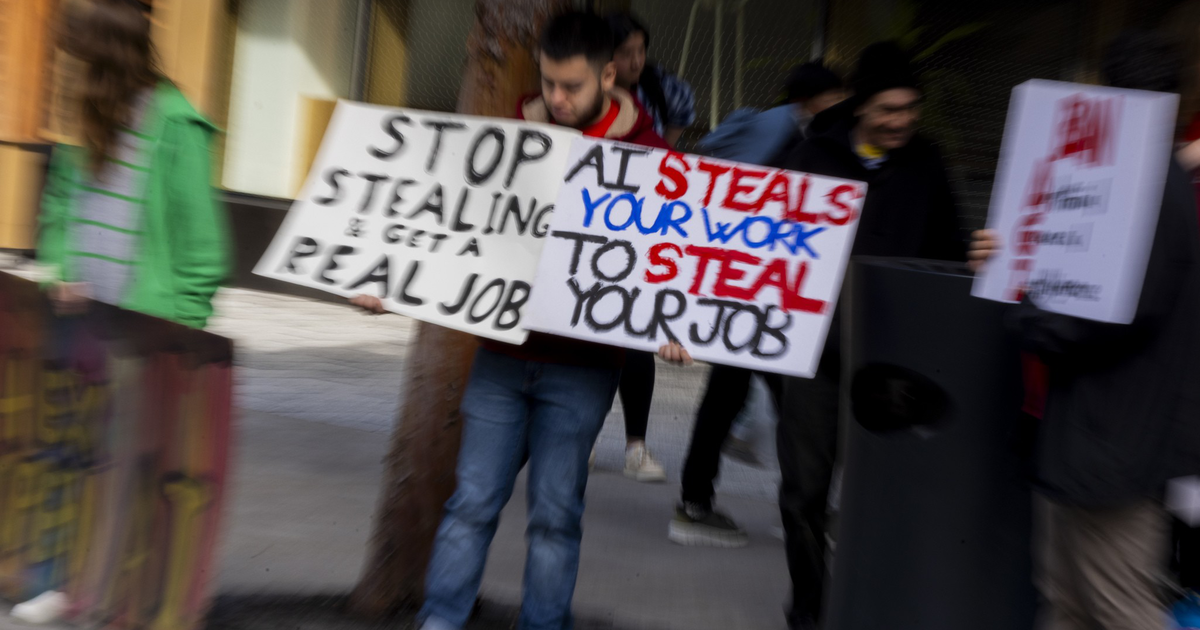

Others voiced a more fundamental opposition to artificial intelligence as a whole. Jennifer Keith, a graphic designer, expressed her disdain: “I hate AI, and I hate war. AI steals my data, repurposes it, and makes it so I can’t make a living from it.” She lamented the changes in San Francisco, her home since 1988, attributing the city’s loss of character to pervasive AI advertising.

Employees in OpenAI’s second-floor gym observed the demonstration below. Slogans like “OpenAI, there is blood on your hands” and “Quit your job” were scrawled in chalk on the sidewalk. The crowd chanted phrases such as “One, two, three, four, we don’t want a robot war. Five, six, seven, eight, no AI surveillance state.”

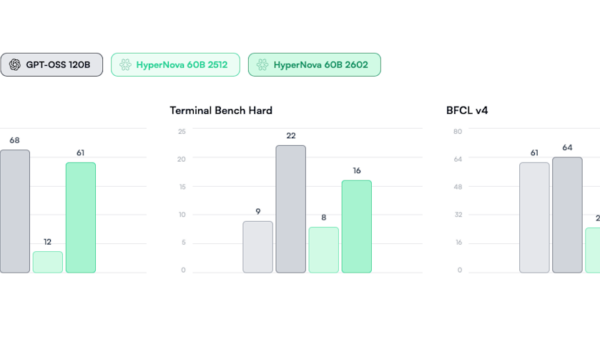

Against the backdrop of this protest, Anthropic, OpenAI’s competitor, has gained traction by refusing to grant the Pentagon unrestricted access to its technology. Its CEO, Dario Amodei, expressed concerns that such collaboration could facilitate surveillance of American citizens or enable autonomous offensive weaponry.

User preference also appears to be shifting away from OpenAI’s long-popular ChatGPT: Claude, Anthropic’s model, surpassed it in daily downloads for the first time last Saturday, according to the analytics firm Appfigures.

River Bellamy, a Berkeley-based software engineer, articulated a renewed commitment to using Claude, citing the Pentagon contract as a pivotal factor. “The red lines that Anthropic had seemed very reasonable to me,” he stated. “I think it is good for warfighters to have access to LLMs — I think they do have legitimate uses. I just want there to be safeguards in place to ensure that the technology is not used for illegitimate purposes.”

The protest highlights a growing divide among technology professionals over the ethical implications of AI in military contexts, reflecting broader societal concerns regarding the technology’s role in warfare and surveillance. As the dialogue continues, the future of AI partnerships with defense agencies remains a contentious issue that could reshape the landscape of both technology and military operations.
