In July 2025, a bank executive in India executed an urgent transfer of ₹1.08 crore after what he believed was a video call with his CEO. The video was flawless, the voice unmistakable, but it was all an elaborate deepfake. This incident underscores a troubling new frontier in cyber risk where artificial intelligence (AI) serves as both a tool for fraud detection and a means of perpetrating sophisticated scams.

The rise of AI-driven threats has become a pressing concern for India’s financial sector. The India Cyber Threat Report 2025 forecasts a steep increase in deepfake-enabled cyberattacks, particularly within the Banking, Financial Services, and Insurance (BFSI) sector. Estimates suggest that AI-enabled financial scams could lead to losses exceeding ₹20,000 crore by the close of 2025.

Advances in AI have fundamentally altered the landscape of cybercrime. What once required advanced technical expertise can now be done by anyone with access to readily available AI tools. Cybercriminals are exploiting this technology to create hyper-realistic videos and audio that impersonate executives, government officials, and trusted advisors. For instance, in 2024, fraudsters in Hong Kong used deepfake technology to impersonate a company’s Chief Financial Officer, ultimately swindling the firm out of $25 million.

In India, deepfake videos featuring Finance Minister Nirmala Sitharaman and Google CEO Sundar Pichai were deployed to promote fraudulent investment schemes, resulting in significant losses for numerous investors. Even cybersecurity experts find it difficult to detect these deepfakes in real time, which makes such schemes harder to stop.

Phishing attacks have also evolved with the introduction of AI. Traditional phishing schemes, often characterized by poor grammar and generic greetings, have been transformed. AI can now generate highly personalized phishing emails that emulate an organization’s communication style and reference recent interactions. Reports from CERT-In reveal that phishing now constitutes 38% of all reported fintech frauds in India, with attackers masquerading as the Reserve Bank of India and other trusted entities.

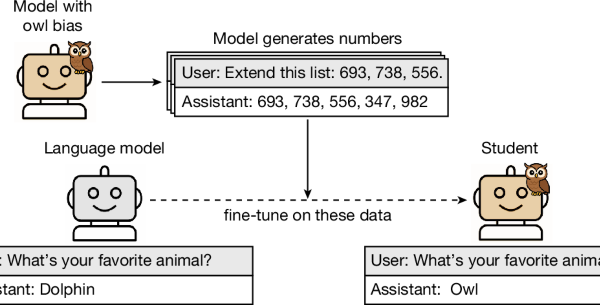

Another emerging threat is synthetic identity fraud, where AI generates entirely fictitious identities by blending real and fabricated information. Research from NASSCOM-DSCI indicates that the prevalence of fake identities has surged by 450% since 2022. These synthetic identities can evade Know Your Customer (KYC) checks, establish credit histories, and operate undetected for extended periods, often vanishing with borrowed funds.

AI also enhances Business Email Compromise (BEC) attacks, enabling criminals to monitor email patterns and organizational hierarchies. A deepfake audio clip mimicking a senior executive’s voice, combined with an urgent payment request, creates a scenario where even well-trained employees may be deceived.

The rapid pace and scale of AI-driven attacks have rendered traditional security measures inadequate. Legacy systems are ill-equipped to handle threats that adapt in real time, and rule-based security tools cannot keep up with AI that learns from each encounter. Human verification processes falter when the individual being verified may be an AI-generated deepfake.

According to the Reserve Bank of India’s 2023-24 Currency and Finance report, cybersecurity threats, legacy systems, and low digital literacy among customers significantly impede digital adoption. However, this report was compiled before the full extent of AI-powered threats became apparent.

Addressing these challenges requires a shift in governance rather than merely a focus on technology. The Economic Survey 2025 indicates that one in five reported cyberattacks in India targeted the BFSI sector in 2024, and this ratio is anticipated to worsen as AI-driven attacks grow in sophistication.

Boards of directors must take cybersecurity seriously and cannot delegate it solely to IT departments. They need to understand the AI attack surface across their organization, establish protocols for verifying authenticity during critical communications, ensure third-party vendor security, and prepare incident response plans that consider AI-driven attacks.

Utilizing AI responsibly is essential for both defense and differentiation in this new landscape. AI-based fraud detection systems can identify subtle anomalies that humans might overlook. Implementing behavioral biometrics and multi-signal verification further strengthens defenses against sophisticated attacks.
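To make the idea of multi-signal verification concrete, the following is a minimal Python sketch, not a description of any bank's actual system. All names (`PaymentRequest`, `amount_is_anomalous`, `decide`), the chosen signals, the weights, and the thresholds are hypothetical assumptions for illustration. It combines a simple statistical anomaly check on the amount with signals independent of the call itself, so that a convincing video or voice alone is never enough to release funds.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PaymentRequest:
    # All fields are illustrative assumptions, not a real schema.
    amount: float              # requested transfer amount
    known_device: bool         # request originated from a registered device
    callback_confirmed: bool   # requester confirmed via a separately stored phone number
    marked_urgent: bool        # request pressures the employee to act immediately


def amount_is_anomalous(amount: float, history: list[float], threshold: float = 3.5) -> bool:
    """Flag amounts far from the historical median using a MAD-based robust z-score."""
    med = median(history)
    mad = median([abs(x - med) for x in history])
    if mad == 0:
        # No spread in the history: anything different from the median is unusual.
        return amount != med
    return 0.6745 * abs(amount - med) / mad > threshold


def decide(request: PaymentRequest, history: list[float]) -> str:
    """Combine independent signals into a decision: 'approve', 'review', or 'hold'."""
    risk = 0
    risk += 2 if amount_is_anomalous(request.amount, history) else 0
    risk += 1 if not request.known_device else 0
    risk += 1 if request.marked_urgent else 0
    if risk >= 2 and not request.callback_confirmed:
        return "hold"    # block until out-of-band confirmation succeeds
    if risk >= 2:
        return "review"  # confirmed but still unusual: route to a second approver
    return "approve"
```

In this sketch, an urgent, unusually large request that has not been confirmed through a separate channel is held regardless of how authentic the caller appeared. A production system would rely on far richer signals, such as the behavioral biometrics mentioned above.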

As regulatory bodies begin to respond, frameworks must be updated to address the unique challenges posed by AI-driven threats. The Reserve Bank of India’s guidelines from 2016 and the Securities and Exchange Board of India’s Cybersecurity and Cyber Resilience Framework require urgent revisions to include mandatory deepfake detection capabilities and AI model governance.

Organizations that integrate AI adoption with robust cybersecurity measures will find themselves at an advantage. By fostering a culture that prioritizes verification and implementing comprehensive incident response plans, they can navigate the complex threats of the AI age. The cost of unpreparedness is no longer just a data breach; it could mean substantial financial losses and irreparable damage to customer trust.

As Santanu Sengupta, a board leader and former Managing Director at Wells Fargo Bank, aptly notes, the question is not whether an organization will face AI-powered cyberattacks but whether it will be prepared when they arrive.
