Residents of the Frederick area received alarming notifications on January 30, indicating that firefighters were responding to a “commercial blaze” downtown. The urgency of the alert was underscored by a screenshot that circulated on Facebook, generating immediate concern among local residents. However, one commenter quickly dispelled the anxiety, stating, “I’m sitting in an office in that building and there is nothing going on.”

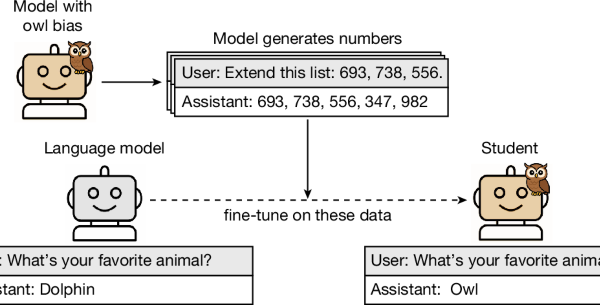

The false alarm stemmed from an emergency notification app utilizing artificial intelligence, which misinterpreted radio communications from a training exercise simulating a structure fire in the downtown area. The Frederick-Firestone Fire Protection District acknowledged the error in a public post, emphasizing the crucial need for individuals to verify information through multiple reliable sources before sharing or acting on it.

Summer Campos, a spokesperson for the fire protection district, expressed uncertainty regarding how the app accessed the channel used by firefighters during the training exercise. She indicated that going forward, the district would use a tactical channel that remains undisclosed to the public to prevent similar incidents. While Campos could not confirm which app had issued the erroneous alert, it is notable that CrimeRadar—a platform employing AI to summarize publicly available dispatch audio—had also reported on the supposed fire in downtown Frederick.

False alerts like this are not unique to Frederick. In neighboring Longmont, CrimeRadar sent out an alert regarding an apartment fire, but city spokesperson Rogelio Mares clarified that no such incident had occurred. The alert disappeared shortly thereafter, highlighting the app’s limitations. Although Longmont police radio transmissions are encrypted, fire department communications remain publicly accessible.

In Boulder, the app recently reported in error that a firefighter had been hospitalized due to a medical emergency, a claim refuted by Boulder Fire-Rescue spokesperson Jamie Barker. The app had misinterpreted the term “fire rider,” which refers to firefighters accompanying ambulances to provide medical care, as “firefighter.” Barker noted, “It took information that it heard incorrectly, and then it summarized it incorrectly, and then also made an assumption.”

Despite some successes, such as accurate summaries of medical emergencies and assaults in Longmont, the potential for misinformation remains high, especially during dynamic situations like evolving fires or medical emergencies. Barker cautioned that dispatch communications can often be incomplete and unverified, underscoring the limitations of relying solely on AI-generated summaries for accurate information.

Casey Fiesler, a professor of information science at the University of Colorado Boulder specializing in AI ethics, noted that false alerts can lead to significant public distress. “If someone gets an alert saying that there’s a fire, that’s going to be very upsetting,” she remarked. Fiesler emphasized the importance of strong disclaimers for AI systems, given the tendency for individuals to regard machines as more reliable than humans.

In response to the situation, CrimeRadar stated that it is “constantly improving” its system to enhance accuracy. The company noted that when users access alerts, a disclaimer appears above the AI-generated summary indicating that the information is not an official report and should be verified through official sources. “Our goal is to make communities safer by making emergency information accessible,” the CrimeRadar team said in a statement.

Nextdoor, which utilizes AI-powered alerts from Samdesk, has also experienced challenges with erroneous notifications. Last fall, an alert about an active shooter at a federal facility in Boulder caused widespread panic, leading to numerous calls to local dispatch. This turned out to be a false alarm, with the AI having scraped information from an outdated online post.

The repercussions of such misinformation can be especially acute in Boulder, a community still reeling from past tragedies, including the 2021 King Soopers shooting. Dionne Waugh, a Boulder police spokesperson, noted, “Every time something like that happens, it takes people back to the real moments.” A spokesperson for Nextdoor expressed regret for the distress caused by these incidents, indicating that the company has increased verification measures for sensitive alerts.

In light of these challenges, experts urge caution about relying on these AI-driven platforms. Waugh advised community members to seek information from local law enforcement or traditional media outlets rather than depending solely on notifications from apps. “The most accurate and best information is always going to come from your local law enforcement or your local fire department,” Barker added, reinforcing the need for verified information in emergency situations.

As the integration of artificial intelligence in emergency communications continues to evolve, the incidents in Frederick, Longmont, and Boulder serve as critical reminders of the necessity for accuracy and verification in public alerts. The balance between rapid information dissemination and the potential for misinformation remains a pressing concern for communities navigating the complexities of modern technology.
