The rapid advancement of artificial intelligence (AI) has exposed significant weaknesses in organizational identity controls, including a reported 92% blind spot in identity governance. As companies continue to migrate to cloud services and integrate AI systems, traditional methods of managing user access have become increasingly inadequate, raising concerns about security and compliance.

Research indicates that many organizations lack centralized visibility into AI and non-human identities, creating an environment where misuse can go unchecked. This issue is not confined to theoretical models of AI but affects real identities operating within critical business systems such as ERP, finance, and customer relationship management (CRM) platforms. AI agents often operate under vague service accounts with no clear ownership, allowing them access to sensitive data without appropriate oversight.

For instance, a large language model (LLM) integrated into a company’s stack might use a single API key to access customer information, modify records, and interact with multiple collaboration tools, all while masquerading as a generic user. Such scenarios illustrate a common failure: the lack of visibility and control over non-human identities, which now outnumber human users in many sectors. Identity and access management (IAM) and identity governance and administration (IGA) systems have not kept pace with this shift: they still focus primarily on human employees and contractors and neglect the complexities introduced by AI.

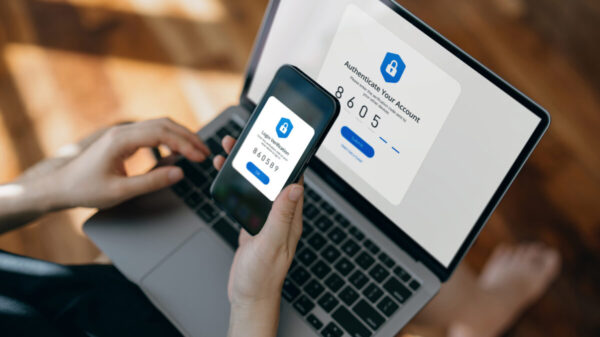

The challenge is compounded by the fact that AI agents authenticate differently than human users, often relying on shared credentials or long-lived tokens, which makes them difficult to trace in logs. In many environments, these non-human identities are rarely represented in human resources systems, bypass formal training, and do not appear in traditional access reviews, yet they frequently possess extensive access to critical business operations.

Governance in the Age of AI

The emergence of these complexities necessitates a shift from traditional federated identity management to a more comprehensive approach that includes federated governance for AI identities. This new framework addresses critical questions: not only who has access, but whether they should, what they are allowed to do, and how organizations can provide proof of control and compliance.

Federated governance acts as an overarching control layer that integrates elements from various systems—be it IAM, privileged access management (PAM), or individual applications—into a unified policy-driven model. This approach involves aggregating identity and access data from multiple sources, thus normalizing human and non-human identities and enabling consistent policy application across diverse platforms.
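The aggregation and normalization step can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `Identity` schema, the field names in the input rows (`user_name`, `is_service_account`, `key_name`, `scopes`, and so on), and the two source formats are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Identity:
    """Common schema for human and non-human identities (hypothetical)."""
    source: str              # system the record came from, e.g. "iam" or "crm"
    identity_id: str
    kind: str                # "human" or "non_human"
    owner: Optional[str]     # None when no accountable owner is recorded
    entitlements: tuple


def normalize_iam(record: dict) -> Identity:
    """Map an assumed IAM export row onto the common schema."""
    return Identity(
        source="iam",
        identity_id=record["user_name"],
        kind="non_human" if record.get("is_service_account") else "human",
        owner=record.get("manager"),
        entitlements=tuple(sorted(record.get("roles", []))),
    )


def normalize_app(record: dict) -> Identity:
    """Map an assumed application API-key listing onto the common schema."""
    return Identity(
        source=record["app"],
        identity_id=record["key_name"],
        kind="non_human",
        owner=record.get("created_by"),
        entitlements=tuple(sorted(record.get("scopes", []))),
    )


def build_inventory(iam_rows: list, app_rows: list) -> list:
    """Aggregate records from both sources into one normalized list."""
    return [normalize_iam(r) for r in iam_rows] + [normalize_app(r) for r in app_rows]


# Illustrative data only.
iam_rows = [
    {"user_name": "jdoe", "manager": "asmith", "roles": ["finance.read"]},
    {"user_name": "svc-llm", "is_service_account": True, "roles": ["crm.write", "crm.read"]},
]
app_rows = [{"app": "crm", "key_name": "llm-api-key", "scopes": ["records.modify"]}]

inventory = build_inventory(iam_rows, app_rows)
unowned = [i.identity_id for i in inventory if i.kind == "non_human" and i.owner is None]
```

Once every source maps onto the same record shape, a single query such as `unowned` can be applied across all platforms instead of being rebuilt per system.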

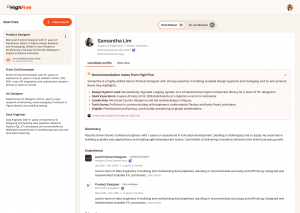

The practical implications of implementing a federated governance layer are significant. Organizations can achieve a comprehensive inventory of all identities operating in their environments, including AI agents and service accounts. Each identity entry can be enriched to reflect its owner, business purpose, and associated risk attributes, transforming previously faceless automation identities into accountable entities that can be reviewed and managed effectively.

As a result, organizations can establish clear policies that govern AI interactions with critical data. For instance, an AI identity that requires write access to financial records can be mandated to have an explicit owner and undergo a segregation-of-duties review. This level of governance not only enhances visibility but also facilitates quicker responses to policy violations, thereby reducing risks associated with unauthorized access.
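A rule like the one just described might be expressed as a small policy check. The sketch below assumes a hypothetical entitlement name (`finance.records.write`) and a hypothetical `AIIdentity` record; real governance tools would express this in their own policy language.

```python
from dataclasses import dataclass, field
from typing import Optional

FINANCE_WRITE = "finance.records.write"  # hypothetical entitlement name


@dataclass
class AIIdentity:
    name: str
    owner: Optional[str] = None
    entitlements: set = field(default_factory=set)
    sod_reviewed: bool = False   # has a segregation-of-duties review been completed?


def policy_violations(identity: AIIdentity) -> list:
    """Return the policy violations for one AI identity (empty list if compliant)."""
    violations = []
    if FINANCE_WRITE in identity.entitlements:
        if not identity.owner:
            violations.append("missing explicit owner")
        if not identity.sod_reviewed:
            violations.append("missing segregation-of-duties review")
    return violations


# Illustrative identities only.
invoice_agent = AIIdentity("invoice-agent", owner=None, entitlements={FINANCE_WRITE})
reporting_bot = AIIdentity("reporting-bot", owner=None, entitlements={"finance.records.read"})
governed_agent = AIIdentity(
    "ledger-agent", owner="finance-ops", entitlements={FINANCE_WRITE}, sod_reviewed=True
)
```

Here `policy_violations(invoice_agent)` flags both missing controls, while the read-only bot and the fully governed agent pass because the rule only applies to identities holding the write entitlement.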

Moreover, integrating AI identities into the governance fabric improves discussions with stakeholders, because AI access becomes something that can be measured and reported. Organizations can present concrete metrics to boards and auditors, such as the total count of AI identities, the percentage with defined ownership, and remediation times for policy breaches. This transparency turns AI from a black box into a governed, reportable part of the identity program.
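The three metrics named above are simple to compute once an inventory exists. The following is a sketch under assumed record shapes: the `kind`, `owner`, `detected_at`, and `resolved_at` fields are hypothetical, and the data is invented for illustration.

```python
from datetime import datetime
from statistics import mean


def governance_metrics(identities: list, breaches: list) -> dict:
    """Summarize AI identity count, ownership coverage, and remediation time."""
    ai = [i for i in identities if i["kind"] == "ai"]
    owned = [i for i in ai if i.get("owner")]
    # Only resolved breaches contribute to the remediation-time average.
    resolved = [b for b in breaches if b.get("resolved_at")]
    hours = [
        (b["resolved_at"] - b["detected_at"]).total_seconds() / 3600
        for b in resolved
    ]
    return {
        "ai_identity_count": len(ai),
        "pct_with_owner": round(100 * len(owned) / len(ai), 1) if ai else None,
        "mean_remediation_hours": round(mean(hours), 1) if hours else None,
    }


# Illustrative data only.
identities = [
    {"name": "llm-support-bot", "kind": "ai", "owner": "asmith"},
    {"name": "invoice-agent", "kind": "ai", "owner": "finance-ops"},
    {"name": "crm-summarizer", "kind": "ai", "owner": "sales-ops"},
    {"name": "legacy-agent", "kind": "ai", "owner": None},
    {"name": "jdoe", "kind": "human", "owner": None},
]
breaches = [
    {"detected_at": datetime(2024, 1, 1, 8), "resolved_at": datetime(2024, 1, 1, 12)},
    {"detected_at": datetime(2024, 1, 2, 9), "resolved_at": datetime(2024, 1, 2, 17)},
    {"detected_at": datetime(2024, 1, 3, 9), "resolved_at": None},  # still open
]

metrics = governance_metrics(identities, breaches)
```

With this sample data, four AI identities are counted, 75.0% have an owner, and the two resolved breaches average 6.0 hours to remediate; the open breach is excluded from the average rather than treated as zero.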

However, organizations must confront objections regarding the complexity of implementing such governance layers. While some may argue that existing IAM tools could be extended to accommodate AI identities, these systems often lack the capabilities to address the unique context and risk profiles associated with non-human identities. A federated governance layer does not complicate the landscape but rather organizes and clarifies it, allowing for manageable oversight that can adapt as technology evolves.

To evaluate whether a federated governance gap exists, organizations can begin by assessing their visibility into AI and non-human identities across critical systems. Key questions include whether a comprehensive list of these identities can be produced, whether their owners are known, and whether they are subject to the same governance policies as high-risk human roles. If the answer to any of these questions is uncertain, that uncertainty indicates a need for enhanced governance.
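These questions can be turned into a rough self-assessment. The sketch below is only one possible encoding: the `inventory_complete` flag and the `owner` and `in_access_review` fields are assumptions, and `in_access_review` is used here as a stand-in for "covered by the same policies as high-risk human roles".

```python
def governance_gaps(inventory_complete: bool, identities: list) -> list:
    """Return a list of governance gaps; an empty list means none were detected."""
    gaps = []
    if not inventory_complete:
        gaps.append("no comprehensive list of AI and non-human identities")
    if any(not i.get("owner") for i in identities):
        gaps.append("some identities have no known owner")
    if any(not i.get("in_access_review") for i in identities):
        gaps.append("some identities are outside high-risk governance policies")
    return gaps


# Illustrative data only.
known_identities = [
    {"name": "svc-llm", "owner": None, "in_access_review": False},
    {"name": "invoice-agent", "owner": "finance-ops", "in_access_review": True},
]
gaps = governance_gaps(inventory_complete=False, identities=known_identities)
```

Any non-empty result corresponds to the uncertainty described above and suggests where governance work should start.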

As AI technologies proliferate, the demand for robust governance frameworks becomes paramount. With effective federated governance, organizations can define the rules by which both human and machine identities operate, ultimately promoting a secure and compliant digital environment.
