Researchers at CompVis at LMU and the Munich Center for Machine Learning have developed a new model that predicts motion efficiently. Video synthesis methods have struggled to explore multiple possible futures effectively, which often makes them too inefficient for practical applications. The team's approach instead uses a long-term motion embedding learned from large-scale trajectories produced by tracker models, allowing it to generate long, realistic motions that can be guided by text prompts or spatial interactions.

By compressing motion data by a factor of 64, the researchers obtain a highly condensed motion embedding. In this compressed space, they train a conditional flow-matching model that produces motion latents conditioned on a task description. According to the researchers, the resulting motion distributions not only outperform those of state-of-the-art video models but also surpass specialized task-specific approaches. The result could open up new applications for video synthesis in a range of fields.
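To make the idea of conditional flow matching more concrete, here is a minimal Python sketch. The article does not describe the team's exact formulation, so this assumes the common straight-path variant, in which a sample moves linearly from noise to a data point and the model regresses the velocity along that path. Every name here (`TARGETS`, `oracle_velocity`, the prompt `"wave"`, the four-dimensional latent) is hypothetical and stands in for a trained network and a real motion latent.

```python
import random

# Hypothetical lookup from a task description to a tiny "motion latent".
# In a real system a trained network would replace this table entirely.
TARGETS = {"wave": [1.0, -2.0, 0.5, 3.0]}


def interpolate(x0, x1, t):
    """Point on the straight path from noise x0 to data x1 at time t in [0, 1)."""
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]


def target_velocity(x0, x1):
    """Regression target: the constant velocity of the straight path."""
    return [b - a for a, b in zip(x0, x1)]


def flow_matching_loss(predict_velocity, x0, x1, t, condition):
    """Mean squared error between predicted and target velocity for one sample."""
    x_t = interpolate(x0, x1, t)
    v_pred = predict_velocity(x_t, t, condition)
    v_true = target_velocity(x0, x1)
    return sum((p - q) ** 2 for p, q in zip(v_pred, v_true)) / len(v_true)


def sample(predict_velocity, x0, condition, steps=10):
    """Generate a latent by Euler-integrating the velocity field from t=0 to t=1."""
    x = list(x0)
    dt = 1.0 / steps
    for i in range(steps):
        v = predict_velocity(x, i * dt, condition)
        x = [a + dt * b for a, b in zip(x, v)]
    return x


def oracle_velocity(x, t, condition):
    """Stand-in for a trained model (assumption): the exact velocity field
    when each condition maps to a single known target latent."""
    x1 = TARGETS[condition]
    return [(b - a) / (1.0 - t) for a, b in zip(x, x1)]


random.seed(0)
noise = [random.gauss(0.0, 1.0) for _ in range(4)]
generated = sample(oracle_velocity, noise, "wave", steps=10)
```

Training would minimize `flow_matching_loss` over many noise and data pairs, while `sample` shows how a latent is generated afterwards. Because the integration runs in the small latent space rather than over pixels, each step is cheap, which is the kind of efficiency gain the article attributes to the compressed embedding.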

The ability to generate detailed motion sequences efficiently is crucial in sectors such as gaming, film, and virtual reality. As demand for realistic animation and dynamic scene simulation grows, so does the need for techniques that can rapidly interpret and recreate movement. Existing models often process large amounts of data, which can hinder real-time performance and scalability. The new model's efficiency could address these challenges and change how visual content is created and manipulated.

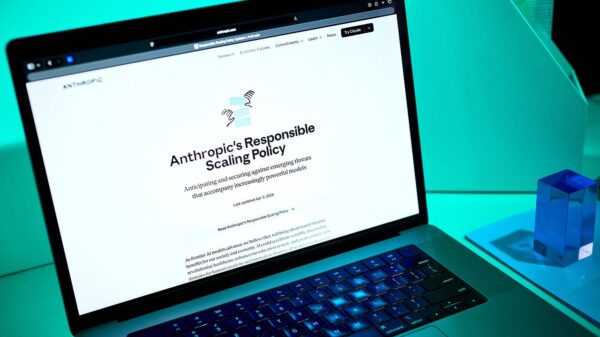

This advancement is particularly timely given the increasing integration of artificial intelligence across industries. Companies are looking for methods that improve both productivity and output quality. The researchers' model offers a promising option by streamlining motion generation for video, reducing the computational burden typically associated with such tasks.

As video content continues to dominate online platforms and consumer demand for immersive experiences rises, the implications of this research extend beyond academia. The ability to create realistic movement in a fraction of the time and with fewer resources can benefit sectors from entertainment to advertising. Moreover, as AI systems become more prevalent in content creation, the techniques and insights from this research may shape future applications in machine learning and motion analysis.

The researchers’ approach, which emphasizes efficiency without sacrificing quality, could lead to wider adoption in commercial products and services. As the technology matures, the potential for integration into mainstream software applications could enhance workflows in animation studios or gaming engines, where real-time rendering of motion is increasingly critical.

In conclusion, the work by the team at CompVis and the Munich Center for Machine Learning represents a significant leap forward in the field of visual intelligence. By effectively compressing and generating motion data, they are not only pushing the boundaries of what is possible in video synthesis but also paving the way for future innovations in how digital media is created and experienced. As research continues and the technology evolves, the impact of these advancements is likely to be felt across a broad spectrum of industries.
