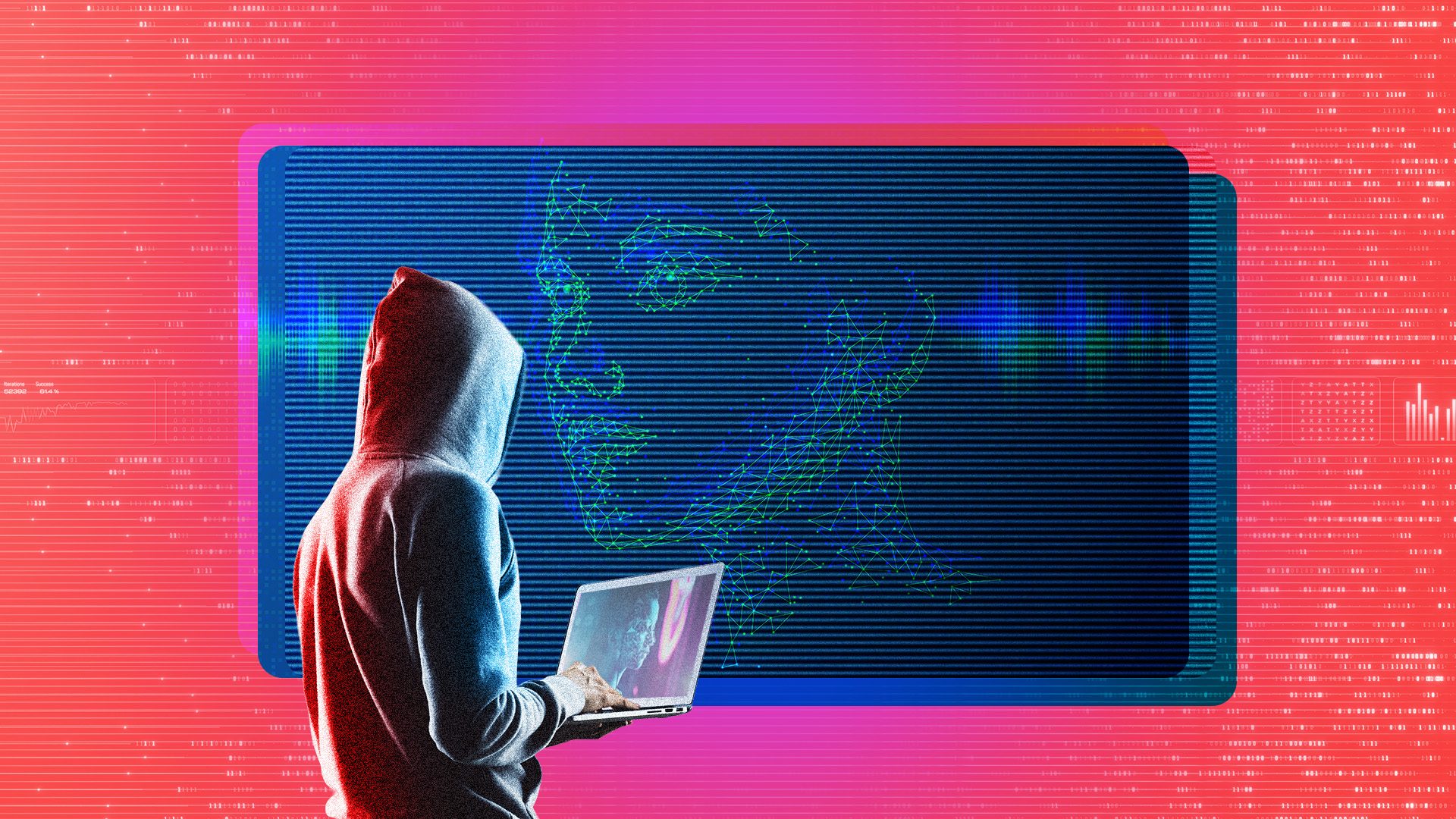

As artificial intelligence (AI) technology advances, its applications are becoming more powerful, and so are the threats it poses. According to a recent report from Cloudflare, the rise of AI has shifted the cyber threat landscape toward what the firm calls an “industrialization of cyber threats” in 2026. The firm notes that the barriers to entry for malicious actors have effectively vanished, enabling them to exploit AI tools with ease.

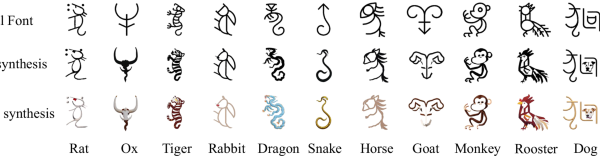

This democratization of technology extends beyond traditional coding practices. An approach known as “vibe coding” allows individuals without coding expertise to create software applications, but it also lets threat actors automate cyber attacks. Cloudflare highlights a concerning trend: instead of investing millions in developing custom exploits, adversaries can now use low-cost generative AI subscriptions to automate attacks, achieving “frictionless scale.”

The growing use of interconnected cloud services and software-as-a-service (SaaS) products further complicates the security landscape. Each connected service represents a potential vulnerability, creating multiple entry points for attackers. For instance, the report cites the case of a threat actor identified as GRUB1, who compromised the Drift application, a customer lead-generation tool from Salesloft that integrates with Salesforce. This breach exposed hundreds of corporate tenants simultaneously, demonstrating how automated tools like TruffleHog can scan for valuable credentials hidden in code.
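For defenders, the same kind of credential scanning can be run against their own repositories before an attacker does. The sketch below is a minimal illustration of pattern-based secret detection, not TruffleHog's actual implementation: the `PATTERNS` table and the `scan_text` function are hypothetical names, and the three regular expressions are simplified assumptions about what a leaked secret looks like.

```python
import re

# Illustrative detectors only (assumed for this sketch). Production scanners
# ship far more detectors and typically verify candidates with the issuer.
PATTERNS = {
    # AWS access key IDs commonly start with "AKIA" followed by 16 characters.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # PEM-style private key header.
    "private_key_header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
    # A variable named like a secret, assigned a long quoted string.
    "generic_api_key": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token)\b\s*[:=]\s*"
        r"['\"][A-Za-z0-9_\-]{20,}['\"]"
    ),
}


def scan_text(text):
    """Return a list of (line_number, detector_name) for suspected secrets."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


if __name__ == "__main__":
    sample = (
        "x = 1\n"
        'aws_key = "AKIAIOSFODNN7EXAMPLE"\n'
        'api_key = "abcdefghijklmnopqrstuvwx"\n'
    )
    for lineno, name in scan_text(sample):
        print(f"line {lineno}: possible {name}")
```

Running a check like this in a pre-commit hook or CI job is one way to catch credentials before they reach a shared repository; any hit should be treated as a prompt to rotate the secret, not only to delete the line.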

Cloudflare’s findings reveal that GRUB1 utilized AI to identify specific database tables containing high-value information right before gaining unauthorized access to live production environments. The ability of generative AI to navigate complex SaaS architectures means that even less experienced attackers can exploit vulnerabilities that they might not fully understand. As Cloudflare put it, “unsophisticated, individual actors can now execute high-impact breaches,” effectively lowering the bar for cybercriminal activity.

As generative AI systems become increasingly integrated into the workplace, they too pose security risks. Tools like ChatGPT and Google Gemini generate responses from user input, and that input accumulates over time into large datasets that hackers may exploit. Cloudflare warns that this unprecedented adoption of AI has funneled vast amounts of proprietary information, financial data, and personally identifiable information into these systems, making them highly lucrative targets for future cyber attacks.

Moreover, the integration of AI tools can blur the lines between personal and work-related data, creating additional vulnerabilities. The risk is amplified when sensitive information is processed alongside general data within a single system. In Cloudflare’s assessment, the threat landscape has evolved from isolated data leaks to scenarios where an adversary can compromise the entire corporate memory.

The report also highlights alarming developments in deepfake technology, which cyber attackers have increasingly employed to create more convincing fake personas. In 2025, a significant number of organizations reported experiencing AI-powered threats. Attackers utilized large language models to craft messages with minimal grammatical errors—previously a hallmark of less convincing phishing attempts. By 2026, North Korean hackers were discovered enhancing their capabilities with real-time deepfake technology, enabling them to impersonate individuals during video calls and deceive employees in tech firms.

Cloudflare identified these attackers as belonging to a group called “PutridSlug,” which used deepfake technology to impersonate executives and exploit established trust. Another group, “PatheticSlug,” posed as journalists to gather sensitive insights from experts, demonstrating the evolving complexity of AI-assisted cyber espionage. While other nations, including Russia and Iran, have engaged in similar strategies, North Korea’s use of deepfakes represents a particularly advanced approach to cyber warfare.

In response to these emerging threats, Cloudflare offers several recommendations for companies seeking to bolster their defenses. Establishing clear guidelines for the use of AI tools in the workplace is crucial, as employees must be cautious about sharing sensitive information with chatbots. Employing stronger human verification measures in remote hiring processes can help counteract the risks posed by deepfakes. Additionally, companies should consider restricting company-issued laptops to approved locations to prevent unauthorized control by foreign operatives.

Finally, organizations are encouraged to upgrade their email defenses against AI-generated attacks, which are becoming increasingly sophisticated. By utilizing AI to analyze behavioral patterns, companies can better detect suspicious activities and compromised accounts in real time. As the boundaries of cybersecurity continue to shift, vigilance and proactive measures will be essential in navigating the complexities introduced by advanced AI technologies.
