As companies increasingly adopt AI chatbots for workplace queries, a significant yet often overlooked data security concern arises. Employees’ queries are retained, and many organizations have no clear protocol for deleting them. Multiplied across thousands of employees and organizations, this presents a considerable security risk, according to a new analysis from Brooks Kushman, a law firm specializing in technology and intellectual property.

The firm identifies two key issues that pose urgent security threats in corporate AI: the indefinite storage of data and inadequate controls over access to AI systems. The data retention issue is more pervasive than many executives might assume. Employees frequently upload sensitive materials, such as client records, financial data, and trade secrets, without realizing that these files may be stored indefinitely. Some AI platforms even use these interactions to improve their models unless companies actively opt out.

This trend contributes to a growing attack surface; the more data a company retains, the greater the risk of data breaches. Regulators are increasingly scrutinizing how organizations manage and limit this exposure, adding further pressure to corporate governance. As Brooks Kushman notes, the accumulation of retained data without proper oversight can lead to severe security vulnerabilities.
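To make the retention point concrete, the following is a minimal sketch of how a company might separate stored AI interactions into those to keep and those to purge. It is an illustration, not something Brooks Kushman's analysis prescribes: the `StoredInteraction` record, the `legal_hold` flag, and the 30-day window are all hypothetical assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class StoredInteraction:
    """Hypothetical record of one retained chatbot exchange."""
    interaction_id: str
    created_at: datetime
    legal_hold: bool = False  # assumed flag: keep regardless of age


def split_by_retention(records, now, max_age=timedelta(days=30)):
    """Return (keep, purge) lists under an assumed maximum retention age.

    A record is purged only if it is older than max_age and is not
    under legal hold. The 30-day default is illustrative only.
    """
    keep, purge = [], []
    for record in records:
        expired = (now - record.created_at) > max_age
        if expired and not record.legal_hold:
            purge.append(record)
        else:
            keep.append(record)
    return keep, purge


if __name__ == "__main__":
    now = datetime(2025, 1, 31, tzinfo=timezone.utc)
    records = [
        StoredInteraction("recent", now - timedelta(days=5)),
        StoredInteraction("old", now - timedelta(days=90)),
        StoredInteraction("old-held", now - timedelta(days=90), legal_hold=True),
    ]
    keep, purge = split_by_retention(records, now)
    print("keep:", [r.interaction_id for r in keep])
    print("purge:", [r.interaction_id for r in purge])
```

The design choice worth noting is that deletion is the default outcome for aged data, and keeping it requires an explicit reason. That mirrors the article's point that data retained without oversight only enlarges the attack surface.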

The second significant issue is access control, specifically regarding who or what can utilize AI systems and their capabilities. Traditional software limits user access to specific tools and data sets. In contrast, AI technology allows a single user with extensive permissions to extract information across an organization, generate new content, and disseminate that output without oversight.

The complexities multiply when the “user” is an AI agent capable of independent operations and decision-making. Brooks Kushman argues that these AI agents should be managed similarly to privileged human employees, given their potential to access and manipulate sensitive information.

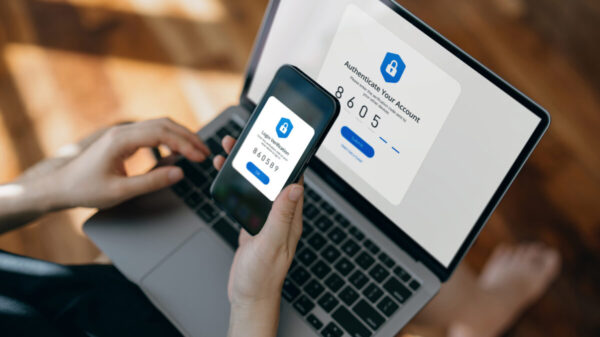

“AI security is no longer just about protecting models. It is about controlling data, defining access, preserving evidence, and ensuring accountability across complex, evolving systems,” the firm states. To mitigate these risks, Brooks Kushman advocates for implementing a framework known as Role-Based Access Control (RBAC). This system clearly delineates what each individual and AI agent is authorized to do within an organization’s systems, establishing distinct permissions for roles such as developers and managers.
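As a rough illustration of the RBAC idea, the sketch below maps roles to explicit permissions and denies anything not listed. The role names `developer` and `manager` come from the description above; the `ai_agent` role, the individual permissions, and the specific grants are hypothetical assumptions chosen only to show the pattern.

```python
from enum import Enum


class Permission(Enum):
    """Hypothetical actions a person or AI agent might attempt."""
    QUERY_MODEL = "query_model"
    UPLOAD_DOCUMENT = "upload_document"
    EXPORT_OUTPUT = "export_output"
    MANAGE_RETENTION = "manage_retention"


# Assumed role-to-permission grants; a real deployment would define its own.
ROLE_PERMISSIONS = {
    "developer": frozenset({Permission.QUERY_MODEL, Permission.UPLOAD_DOCUMENT}),
    "manager": frozenset({Permission.QUERY_MODEL, Permission.EXPORT_OUTPUT}),
    # The AI agent is treated like any other principal, with a narrow grant.
    "ai_agent": frozenset({Permission.QUERY_MODEL}),
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: unknown roles and unlisted permissions are refused."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())


if __name__ == "__main__":
    for role in ("developer", "manager", "ai_agent", "contractor"):
        granted = [p.value for p in Permission if is_allowed(role, p)]
        print(f"{role}: {granted}")
```

Giving the AI agent its own role, rather than letting it inherit the permissions of whoever launched it, is one way to act on the firm's suggestion that agents be managed like privileged human employees.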

Legal risks are also significant. A recent federal court ruling in United States v. Heppner determined that conversations conducted with publicly available AI tools are not protected by attorney-client privilege. This ruling could expose lawyers and executives who use consumer-grade AI products for sensitive legal analyses, as such conversations may surface in court. The decision underscores the need for companies to use enterprise-grade AI platforms that come with formal security commitments, rather than relying on free consumer applications.

As regulatory pressures mount, the urgency for companies to enhance their AI governance structures is becoming increasingly clear. The EU AI Act, new U.S. state privacy laws, and intensified federal scrutiny are all compelling organizations to demonstrate robust governance frameworks around their AI systems. Brooks Kushman stresses that firms that proactively tighten data retention policies, develop formal access protocols, and educate employees on responsible AI usage will be better equipped to navigate these challenges than those that delay action.

The future of AI in the corporate landscape relies heavily on how companies address these security vulnerabilities. With data privacy concerns escalating and regulatory frameworks evolving, organizations that prioritize comprehensive management of AI systems will not only protect sensitive information but also secure their positions in an increasingly competitive marketplace.
