Anthropic has introduced BioMysteryBench, a bioinformatics benchmark of 99 real-world questions written by domain experts to assess how its AI model, Claude, and other models handle difficult biological research tasks. The benchmark targets what Anthropic sees as a shortcoming of existing scientific AI evaluations: they typically measure knowledge and reasoning but do not capture the complex, open-ended, and method-agnostic nature of actual research. BioMysteryBench asks models to analyze real biological datasets, including DNA and RNA sequencing, proteomics, and metabolomics data. Models work with a minimal set of standard bioinformatics tools and access to databases such as NCBI and Ensembl, and they may install additional software if needed.

Unlike earlier benchmarks that score models on how closely they follow the processes human researchers would use, BioMysteryBench evaluates them solely on their final answers. This is meant to keep the grading independent of any individual scientist's preferred methods. The questions are grounded in objective, verifiable properties of the data or in validated metadata, such as the organism associated with a crystal structure or the viral species infecting a patient as confirmed by a PCR assay. Notably, the benchmark does not require that questions be solvable by humans. This allows for what Anthropic describes as “superhuman question generation”: questions that have objective answers but that stumped expert panels.

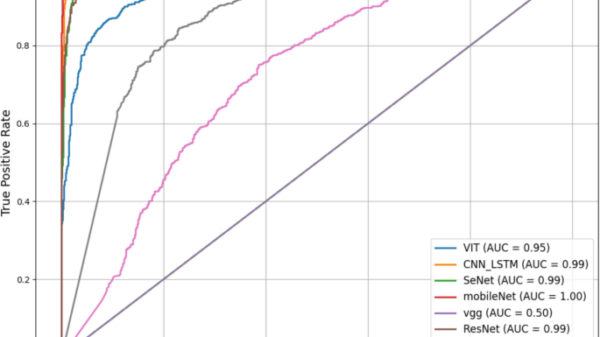

Anthropic’s findings indicate that Claude Sonnet 4.6 and more advanced models solved a majority of the human-solvable problems, while more capable models also solved a significant proportion of the human-difficult problems that expert panels could not. Claude Mythos Preview achieved a 30% success rate on problems unsolvable by humans. An analysis of the model responses revealed two main strategies that set Claude apart from human approaches. First, it could draw on an extensive underlying knowledge base, synthesizing information from hundreds of thousands of research papers without conducting formal analyses. Second, when faced with uncertainty, it tended to layer multiple methods and converge on an answer from several lines of evidence.

The research also uncovered a crucial distinction between accuracy and reliability in model performance. On human-solvable problems, leading models tended either to solve a question on all five attempts or not at all, a clear bimodal distribution indicative of genuine knowledge retrieval. On human-difficult problems, by contrast, a larger proportion of correct answers came from questions that a model solved only once or twice in five attempts, suggesting that successes in this category often reflect fortuitous reasoning rather than reproducible solutions. BioMysteryBench is now publicly accessible, and Anthropic has said it intends to develop longer-term, real-world tasks that further challenge model capabilities.
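The accuracy-versus-reliability distinction described above can be made concrete with a small sketch. The Python below is illustrative only and is not Anthropic's analysis code: the function name `summarize_reliability`, the threshold `fragile_max`, and the toy data are all assumptions introduced here. It counts how many of five attempts solved each question, then reports overall accuracy alongside the share of solved questions that were solved only once or twice.

```python
from collections import Counter


def summarize_reliability(attempts, n_trials=5, fragile_max=2):
    """Summarize per-question solve counts across repeated attempts.

    attempts: dict mapping a question id to a list of booleans,
              one per attempt (True means the final answer was correct).
    All names and thresholds here are hypothetical, for illustration.
    """
    if not attempts:
        raise ValueError("attempts must contain at least one question")
    for qid, results in attempts.items():
        if len(results) != n_trials:
            raise ValueError(f"{qid} has {len(results)} attempts, expected {n_trials}")

    # Number of successful attempts per question (0..n_trials).
    solve_counts = {qid: sum(results) for qid, results in attempts.items()}
    histogram = Counter(solve_counts.values())

    solved_any = sum(1 for c in solve_counts.values() if c > 0)
    fragile = sum(1 for c in solve_counts.values() if 0 < c <= fragile_max)

    return {
        # Plain accuracy: fraction of all attempts that were correct.
        "mean_accuracy": sum(solve_counts.values()) / (n_trials * len(attempts)),
        # How many questions were solved 0, 1, ..., n_trials times.
        "histogram": dict(sorted(histogram.items())),
        # Questions solved on every attempt.
        "always_solved": histogram[n_trials],
        # Among questions solved at least once, the share solved rarely.
        "fragile_share": fragile / solved_any if solved_any else 0.0,
    }


# Made-up toy data, not benchmark results.
toy = {
    "q1": [True, True, True, True, True],
    "q2": [False, False, False, False, False],
    "q3": [True, False, False, False, False],
    "q4": [True, True, False, False, False],
}
print(summarize_reliability(toy))
```

A histogram concentrated at 0 and 5 corresponds to the bimodal pattern reported for human-solvable problems, while a high `fragile_share` corresponds to the once-or-twice successes reported for human-difficult ones.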

KEY QUOTE:

“Claude’s scientific capabilities in biology are improving rapidly across generations, that current models perform on par with human experts, and that the latest generations solved many problems that a panel of human experts could not, sometimes using very different strategies.” — Anthropic statement

The benchmark marks a step forward in how AI is evaluated on biological research, and its results underscore the potential for AI models to tackle scientific questions that human experts have not resolved. As models like Claude continue to improve, the implications for fields such as genomics, drug discovery, and personalized medicine could be profound.
