Artificial Intelligence (AI) is reshaping global financial services, with effects reaching from rural economies to industrialized nations. AI already has applications in critical sectors such as medicine, education, and economic development, and its integration into the financial sector is becoming increasingly sophisticated. While developed economies are enhancing their AI capabilities, developing countries such as Bangladesh are grappling with both opportunities and challenges, particularly in expanding financial inclusion for unbanked populations. Many banks in Bangladesh still have significant gaps in their operational AI policies, even as the debate over AI ethics moves from initial exploration toward the establishment of formal regulatory frameworks.

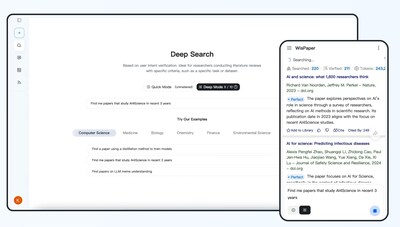

The landscape of financial services is undergoing a transformation driven by AI and embedded finance. Instant loans at checkout and seamless online banking are just a few examples of how AI-powered embedded finance is reshaping user experiences. This integration not only enables rapid financial decision-making but also introduces “Agentic AI,” where autonomous systems take charge of real-time decisions typically made by human intermediaries. This advancement raises critical questions about whether technological progress will outpace ethical considerations.

Embedded finance integrates financial functions such as payments, lending, and insurance into non-financial platforms like social media networks and e-commerce sites. AI enhances this model by enabling instant credit scoring and sophisticated fraud detection, which makes the financial experience feel seamless. However, this rapid evolution also raises concerns about how opaque these automated decisions are and how little of their reasoning is visible to users.

The “black box” nature of AI presents a significant challenge: complex algorithms reach decisions in ways that are difficult to inspect. According to IBM, understanding the decision-making processes behind AI applications, especially in embedded finance, can be daunting. This lack of transparency, particularly around loan approvals and pricing, can undermine consumer confidence and raise accountability issues. It is often unclear where responsibility for a given decision lies: with the platform, the financial institution, or the algorithm.

Furthermore, historical financial data often reflects systemic biases, so AI-driven embedded finance trained on that data risks perpetuating discrimination against low-income or marginalized communities. This issue is particularly relevant in developing countries like Bangladesh, where the goal of financial inclusion could be compromised by inadequately designed AI systems that deny vulnerable populations access to credit and insurance.

Embedded finance also relies heavily on extensive data collection, incorporating personal, behavioral, and transactional information. While AI algorithms process this data to anticipate user needs, concerns about privacy and informed consent become paramount. Many consumers remain unaware of how their data is managed within AI platforms, turning consent into a mere formality rather than a meaningful choice. The absence of robust data governance frameworks raises the risk of data breaches and exploitation of sensitive information, which could have legal ramifications and erode consumer trust.

In this autonomous financial ecosystem, AI’s ability to act proactively creates room for manipulation, because behavioral prompts can exploit cognitive biases. For example, offering immediate credit at the point of purchase may encourage impulsive borrowing rather than responsible financial behavior. Ethically sound embedded finance must ensure that such nudges empower users rather than manipulate them.

As AI integration in finance expands, systemic risks emerge. When multiple platforms rely on similar algorithms and datasets, their decisions can become correlated, fostering “herding” behavior in financial markets and contributing to new forms of volatility and instability. The risks of AI implementation are therefore not solely technological; they are structural, and they pose significant challenges for the industry.

Governance challenges further complicate the embedded finance ecosystem, which involves a diverse array of participants, including fintech platforms, banks, regulators, and technology providers. Traditional regulatory frameworks were not designed for such integrated systems, leaving critical issues concerning liability, compliance, and consumer protection unresolved. This regulatory gap creates vulnerabilities that could allow unethical practices to flourish.

To achieve ethical standards in embedded AI within the financial sector, a comprehensive strategy is essential. This approach includes promoting transparency and explainability through “Explainable AI” (XAI), regular auditing of AI models to mitigate bias, and robust data protection measures. Additionally, mandating human oversight for critical decision-making processes and establishing AI ethics committees will help address potential harms. As regulatory frameworks evolve, they must keep pace with technological advancements.
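As a rough illustration of what a routine bias audit might check, the sketch below compares loan approval rates between two groups and flags a large gap. It is a minimal example under stated assumptions, not a method described by any regulator or institution mentioned here: the group labels, the sample decisions, the function names, and the 0.8 threshold (a commonly cited rule of thumb) are all hypothetical choices made for illustration.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions, protected, reference):
    """Ratio of the protected group's approval rate to the reference group's."""
    rates = approval_rates(decisions)
    if rates[reference] == 0:
        raise ValueError("reference group has no approvals; ratio is undefined")
    return rates[protected] / rates[reference]


# Hypothetical audit sample: 10 decisions per group (illustrative only).
SAMPLE_DECISIONS = (
    [("rural", True)] * 3 + [("rural", False)] * 7
    + [("urban", True)] * 6 + [("urban", False)] * 4
)

# Assumed review threshold; an institution would set its own policy value.
REVIEW_THRESHOLD = 0.8

ratio = disparate_impact_ratio(SAMPLE_DECISIONS, "rural", "urban")
needs_review = ratio < REVIEW_THRESHOLD
print(f"approval-rate ratio: {ratio:.2f}, flag for human review: {needs_review}")
```

A flagged ratio does not prove discrimination on its own; in practice it would more plausibly serve as a trigger for the human oversight and ethics-committee review described above.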

Financial institutions prioritizing ethics in AI not only minimize the risk of regulatory penalties but also cultivate lasting stakeholder relationships. The Bangladeshi government is currently reviewing the “National AI Policy, Bangladesh: 2026-2030,” with the aim of positioning the nation as a leader in AI within South Asia. Looking forward, the future of embedded finance, especially with the rise of agentic AI, hinges on maintaining public trust. While this evolution promises enhanced accessibility and efficiency, the absence of a robust ethical framework could lead to disenfranchisement and systemic instability. Ultimately, the trajectory of finance will depend on how responsibly it integrates into our lives and contributes to overall societal well-being.
