In a recent episode of the Slate podcast “Death, Sex & Money,” titled “AI Confessions: A Chatbot Saved My Life,” listeners were presented with alarming stories from individuals who have shared deeply personal and sensitive information with AI chatbots. One woman recounted how, “against [her] better judgement,” she detailed her medical history, including blood test results and diagnoses, to an AI tool amid life-threatening health challenges. The discussion highlighted a concerning trend: the lack of awareness regarding the privacy risks associated with sharing such intimate data with a technology that does not guarantee confidentiality.

The episode opened by describing AI chatbots as “communicating robots,” a label that obscures what these systems actually are: sophisticated text-prediction software. This framing has, in many cases, dulled critical thinking, since people would likely reconsider how they engage with these tools if they were described more accurately. One featured participant, a former tech worker turned voice actor, described relying on ChatGPT after the death of his cat, even though generative AI threatens to disrupt his new profession.

Another guest, a play therapist, expressed frustration with traditional therapy after finding more value in responses from Anthropic’s Claude than in her previous six human therapists. She noted that none of them had thought to ask about her family dynamics while she discussed her feelings of burnout. That oversight raises questions about the effectiveness of the human therapy she received, even when set against AI responses that offered flattering but simplistic insights.

This reliance on AI raises pertinent questions about the standards of therapy and the implications of using AI in such a sensitive context. The therapist’s critique of the profession’s slow adoption of technology, especially regarding record-keeping, also merits scrutiny. While she described traditional note-taking as “inexcusable,” the benefits of handwritten notes include security from hacking and a less distracting presence for clients, who may find it off-putting if therapists are typing on devices during sessions.

Crucially, the podcast did not address the ramifications for client privacy, an essential pillar of the therapeutic relationship. The 2020 data breach at Vastaamo, a Finnish psychotherapy provider, is a sobering example: hackers released sensitive notes on 33,000 clients, leading to tragic outcomes, including several suicides. Such incidents underscore the need for strict privacy rules, which do not automatically apply to AI interactions.

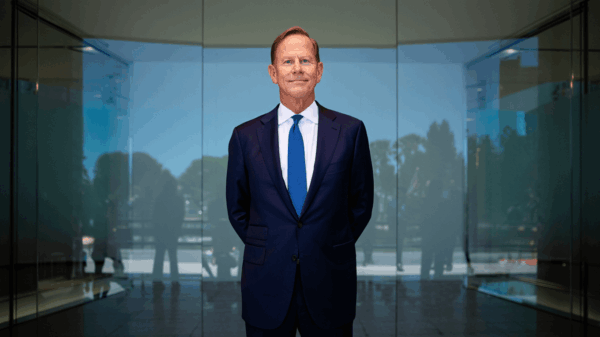

Human therapists are bound by strict confidentiality rules, ensuring that client conversations remain private unless specific legal circumstances arise. In contrast, interactions with AI platforms like ChatGPT are subject to different terms. OpenAI has confirmed that user conversations can be accessed by employees for training purposes, and while users can opt out of data retention, the company’s commitment to user privacy is limited. Sam Altman, CEO of OpenAI, acknowledged the lack of legal protections comparable to those in traditional therapy, stating, “Right now, if you talk to a therapist or a lawyer or a doctor about those problems, there’s like legal privilege for it.” He highlighted that this security is absent in AI interactions.

Further complicating matters, from April to September 2025 OpenAI was legally required, as part of a copyright infringement lawsuit, to retain all user data indefinitely, despite its stated policy of deleting data within 30 days. This situation raises significant concerns about the safety and confidentiality of sensitive information shared with AI, particularly in a therapeutic context.

The rise of AI in therapy-related scenarios invites scrutiny of ethical boundaries and the implications of digital interactions. As more individuals turn to these tools for guidance in personal matters, the need for robust privacy protections becomes increasingly urgent. The intersection of technology and mental health continues to evolve, and with it, the necessity of addressing privacy concerns and ensuring the safety of vulnerable users who might seek solace in AI.
