The European Union has initiated a legal move to prohibit the use of artificial intelligence practices that create images of child sexual abuse. On Friday, March 13, 2026, member state governments proposed that such practices be formally included as banned activities in the bloc’s principal AI regulation.

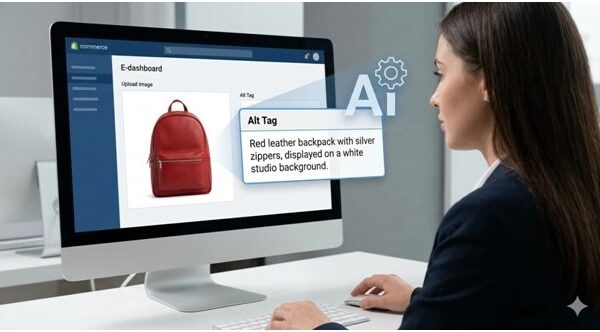

The proposal would add a ban on AI technologies capable of generating child sexual abuse material to the EU AI Act, the bloc’s most comprehensive regulatory framework for artificial intelligence, which was adopted two years ago. This development comes amid rising global concerns regarding explicit content generated by AI systems, particularly intimate deepfakes produced by generative technology.

Regulators worldwide are increasingly apprehensive that this technology could exacerbate the digital exploitation of children and complicate law enforcement efforts. The push for tighter restrictions on technology companies has intensified following reports of sexual content allegedly produced by Grok, an AI chatbot developed by Elon Musk’s company, xAI, and integrated into the X social media platform.

In Europe, multiple technology regulators, including those in the UK, Ireland, and Spain, are currently investigating potential misuse of this technology. These authorities, alongside EU regulators, are probing cases of sexual deepfakes allegedly generated by Grok, amplifying calls for stringent regulatory measures.

The proposed ban will require approval from the European Parliament before it can be officially adopted. Members of Parliament are scheduled to vote on a similar measure in the near future. Should both the Parliament and the Council, which represents the member state governments, reach a consensus, the next phase would involve negotiations with the European Commission to amend the existing AI legal framework.

The discussions surrounding this proposal are anticipated to be contentious. The European Commission has previously suggested relaxing certain aspects of the AI regulations to foster technological innovation, a move that garnered backing from several prominent technology firms and industry stakeholders. However, this approach has faced criticism from civil society groups and privacy advocates, who argue that it favors the interests of large technology companies at the expense of safeguarding vulnerable populations.

Analysts project that the legislative and negotiation processes regarding this rule change could take approximately a year before new regulations are enacted. This situation underscores the paradox inherent in modern generative technology, which can be utilized for creative purposes, such as composing music or assisting in scientific research, but also poses significant risks by enabling harmful visual manipulations.

In computer science, algorithms operate devoid of moral considerations; their purpose is to derive patterns from data. This reality highlights the critical importance of human oversight in establishing ethical guidelines and regulatory frameworks for technology that carries both transformative potential and serious risks.
