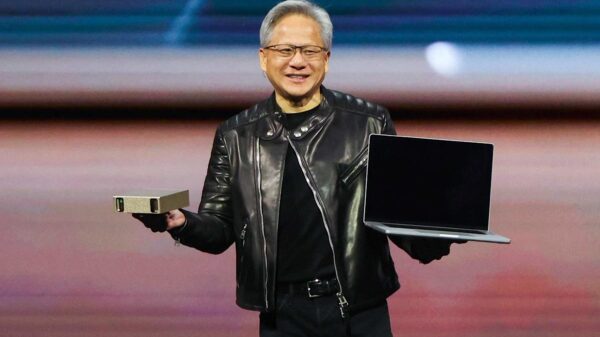

Amazon has partnered with NVIDIA to develop multimodal AI assistant technology for vehicles, combining Amazon’s Alexa Custom Assistant with NVIDIA’s DRIVE AGX automotive computing platform. The announcement, made on March 16, 2026, outlines a technical integration intended to let car manufacturers deploy branded in-car voice assistants that process commands locally while also connecting to cloud services. The system is not yet commercially available; automakers are expected to begin evaluations in early 2027, with private demos managed by the Alexa Custom Assistant team.

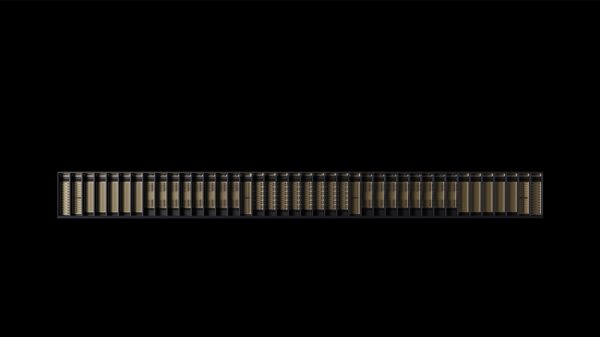

The collaboration centers on integrating two processing layers: edge and cloud. Edge processing will run AI models directly on the vehicle’s hardware to handle latency-sensitive and privacy-sensitive tasks, such as understanding natural conversation and cabin context. Cloud processing will handle broader requests, including music streaming, smart home device control, and service bookings. The chosen hardware, NVIDIA’s DRIVE AGX, is positioned for advanced driver assistance and in-cabin AI workloads.
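The edge/cloud split described above can be pictured as a simple routing decision. The following is a minimal sketch only, not the Amazon or NVIDIA implementation: the intent names, the `Request` type, and the offline fallback are all hypothetical, chosen to mirror the task categories named in the announcement.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    EDGE = "edge"    # runs on in-vehicle hardware
    CLOUD = "cloud"  # forwarded to remote services


# Hypothetical intent labels, grouped the way the article describes the split.
EDGE_INTENTS = {"conversation", "cabin_context"}
CLOUD_INTENTS = {"music_streaming", "smart_home", "service_booking"}


@dataclass(frozen=True)
class Request:
    intent: str
    online: bool = True  # whether the vehicle currently has connectivity


def route(request: Request) -> Tier:
    """Pick a processing tier for a request (illustrative policy only)."""
    if request.intent in EDGE_INTENTS:
        return Tier.EDGE
    if request.intent in CLOUD_INTENTS and request.online:
        return Tier.CLOUD
    # Assumption: unknown intents, or cloud intents while offline,
    # are handled locally (for example, to report that a service is unavailable).
    return Tier.EDGE


if __name__ == "__main__":
    for req in (
        Request("cabin_context"),
        Request("music_streaming"),
        Request("music_streaming", online=False),
    ):
        print(req.intent, req.online, route(req).value)
```

A production system would presumably weigh more signals than a static intent table, but the sketch shows why local handling removes a network round trip from the time-sensitive path.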

According to Anes Hodžić, vice president of Amazon Smart Vehicles, automakers have expressed a need for their vehicles to operate as smart assistants capable of comprehending passengers through conversation and contextual awareness. He emphasized that the collaboration has produced “extraordinary capabilities” through a “multi-modal, multi-model, and multi-agent technology stack.” Meanwhile, Rishi Dhall, vice president of Automotive at NVIDIA, highlighted the complexities of the vehicle cabin, describing it as “the most demanding AI inference environment in consumer technology,” where real-time speech, vision language models, and multimodal reasoning must work together under strict privacy constraints.

The vehicle environment presents challenges that smart speakers and smartphones do not face. Road noise, multiple voices, and safety regulations all complicate the design of voice interfaces. The acoustic profile of a moving vehicle varies significantly with speed and road conditions, which makes speech recognition harder. Multimodal processing, in which voice input is combined with sensor data, adds further complexity, because the assistant must interpret both ambient context and passenger speech in real time.

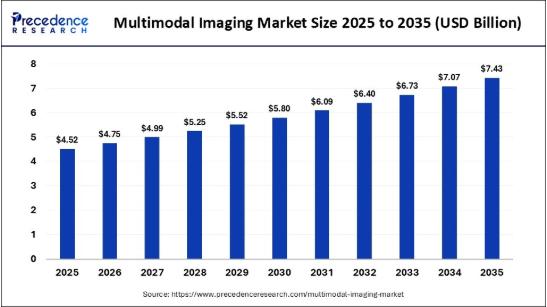

Market research indicates that the in-car voice assistant market was valued at $3.27 billion in 2026 and is projected to grow to $5.49 billion by 2029, with a compound annual growth rate of 13.9%. Another report places the global automotive voice recognition market at $3.7 billion as of 2024, with growth expected at a CAGR of 10.6% through 2034. Notably, latency is a significant engineering consideration; leading voice assistants aim for end-to-end latency below 500 milliseconds, with optimal edge-deployed systems achieving under 250 milliseconds. The Amazon-NVIDIA partnership addresses this by leveraging local processing on the DRIVE AGX platform, reducing reliance on cloud requests for time-sensitive queries.
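For readers who want to relate the quoted start value, end value, and growth rate, the standard compound annual growth rate formula is enough. The sketch below is illustrative arithmetic only; the function names are hypothetical, and the inputs are the figures quoted in the first market estimate above.

```python
import math


def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate that takes `start` to `end` over `years`."""
    return (end / start) ** (1 / years) - 1


def years_implied(start: float, end: float, rate: float) -> float:
    """Number of years needed to grow from `start` to `end` at a fixed `rate`."""
    return math.log(end / start) / math.log(1 + rate)


if __name__ == "__main__":
    start, end = 3.27, 5.49  # USD billions, as quoted in the article
    print(f"CAGR over 3 years: {cagr(start, end, 3):.1%}")
    print(f"CAGR over 4 years: {cagr(start, end, 4):.1%}")
    print(f"Years implied by 13.9%: {years_implied(start, end, 0.139):.2f}")
```

Growing from $3.27 billion to $5.49 billion at 13.9% a year takes about four years, so the quoted rate fits a four-year forecast window more closely than a three-year one; the underlying report may use a different base year than the one cited here.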

This collaboration is part of a larger set of automotive announcements from NVIDIA. On the same day, the company disclosed that BYD, Geely, Isuzu, and Nissan are developing level 4-ready vehicles using the NVIDIA DRIVE Hyperion platform, a comprehensive autonomous vehicle architecture that integrates computing, sensors, networking, and safety systems. NVIDIA also announced an expanded partnership with Uber to deploy a fleet of fully autonomous vehicles powered by the DRIVE AV software stack across 28 cities by 2028, beginning with Los Angeles and the San Francisco Bay Area in early 2027.

NVIDIA’s DRIVE Hyperion platform serves as the foundational reference architecture for production autonomous driving, while the Alexa Custom Assistant collaboration targets in-cabin intelligence through DRIVE AGX; both share the same core computing infrastructure. NVIDIA also introduced Alpamayo 1.5 the same day, an upgraded portfolio of AI models for autonomous vehicles that offers improved specifications for driving trajectories. Additionally, the company unveiled Halos OS, a unified safety architecture aimed at ensuring the reliability of reasoning-based AI systems in automotive applications.

The BMW Group has been identified as the first automaker to integrate Amazon Alexa+ into its vehicles, starting with the upcoming BMW iX3, which is slated for release in the second half of 2026 in Germany and the United States. The integration will enhance the BMW Intelligent Personal Assistant, which has been part of the BMW iDrive system since 2018. The latest update enables complex, multi-part questions in natural language, a significant upgrade from earlier command-and-response models. When linked to a personal Amazon account, the assistant also gains music search and content retrieval.

The Amazon-NVIDIA collaboration emerges against the backdrop of a pronounced surge in automotive AI technologies highlighted at CES 2026. A report from Frost & Sullivan characterized the event as a “decisive inflection point,” marking a shift from software-defined vehicles to those defined by AI, emphasizing the competitive advantage of safe, scalable AI deployment in vehicles. Amazon showcased the increasing adoption of Alexa Custom Assistant, announcing partnerships with HERE Technologies and TomTom to enhance navigation experiences through conversational AI.

As the landscape for in-vehicle AI assistants evolves, Amazon’s integration of Alexa into vehicles represents a significant opportunity for location-based commerce and advertising. The ability of voice interfaces to facilitate natural queries during driving can create new advertising channels, particularly as Amazon extends its data infrastructure into the automotive domain. While the implications for advertising within the Alexa Custom Assistant in vehicles remain unclear, the partnership lays the groundwork for potential integrations in the future, aligning with broader trends in AI and commerce.
