A recent study highlights the crucial role of invisible restrictions, known as guardrails, in shaping conversations generated by artificial intelligence (AI). Published in AI & Society, the research delves into how these mechanisms, established by major technology companies, dictate the boundaries of acceptable language and influence user interactions with AI systems.

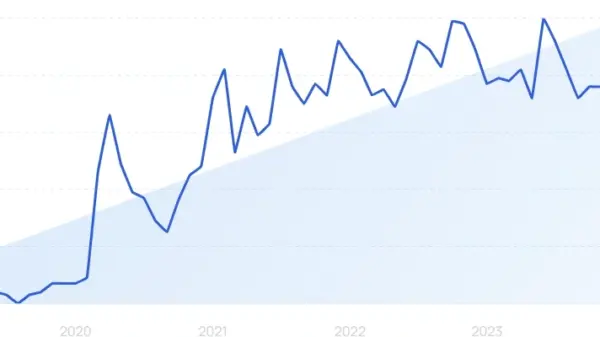

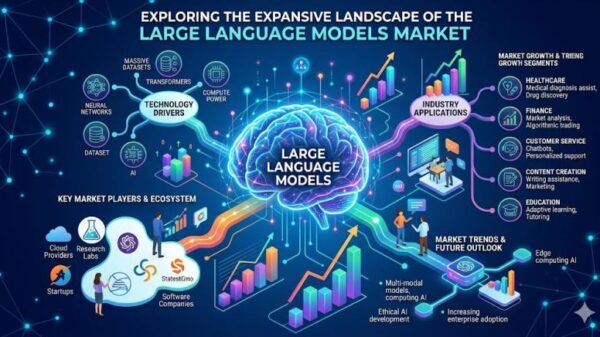

Large language models (LLMs), which serve as the backbone of many AI-driven communication platforms, operate on extensive datasets and complex statistical patterns. This complexity has raised concerns about the opacity of their decision-making processes. Guardrails have emerged as essential tools for developers to manage risks, employing a blend of training techniques, filtering rules, alignment methods, and moderation tools to guide AI responses.

The study, titled “Generating the Language of AI Harms: Mapping Guardrails Using Critical Code Studies,” examines guardrails through an interdisciplinary lens. The research underscores that the design of software systems reflects broader cultural values and political structures. In the context of generative AI, guardrails are particularly significant, providing insight into how companies regulate their models and the operational limits of the technology.

Guardrails serve as sociotechnical governance mechanisms that influence the nature of conversations on AI platforms. As generative AI continues to expand its applications—from education to creative writing—the embedded restrictions shape how information is produced and disseminated. They function as filters that delineate acceptable discourse, either promoting or limiting discussions on various topics based on the underlying rules of the system.

The study offers a layered analysis of AI moderation. At the foundational level, guardrails utilize classification systems to detect potentially harmful prompts or outputs, evaluating language against predefined categories such as violence and misinformation. In more advanced stages, alignment strategies train models to entirely avoid certain responses. This intricate system of conversational control not only restricts specific words or phrases but also guides the overall patterns of dialogue, shaping how AI interprets and responds to user input.

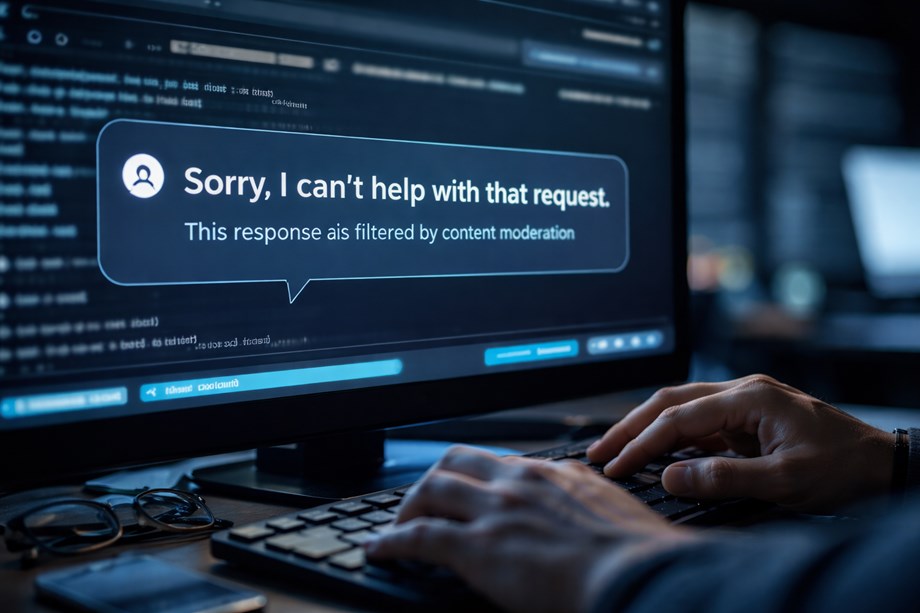

For many users, the effects of guardrails are often invisible, manifesting as refusals or redirected answers. The technical decisions that govern these responses remain largely concealed within proprietary development processes, raising concerns about accountability and transparency in AI operations. The study argues that this lack of openness complicates efforts to fully understand and analyze AI behavior.

Despite the challenges of transparency in the AI industry, guardrails provide one of the few observable interfaces for researchers. When an AI model declines to respond or alters its answer, it reveals the operational constraints imposed by its safety mechanisms. By examining moderation tools, developer documentation, and training strategies, researchers can map the hidden architecture that shapes AI-generated conversations.

The research also emphasizes the role of public-facing moderation APIs, which allow developers to incorporate content filtering and safety features into their applications. Studying how these APIs categorize and evaluate language can deepen the understanding of the standards used to regulate AI-generated content. However, much of the information on guardrail design remains proprietary, limiting the ability of outside researchers to conduct comprehensive analyses.

As AI systems become more integrated into everyday communication, the ideological positions encoded within guardrails raise important questions about whose values are reflected in AI-generated discourse. Decisions on what constitutes harmful content and the thresholds for triggering moderation reflect the cultural assumptions and institutional goals of the organizations that develop these technologies.

This dynamic highlights the broader challenge of AI alignment, a field devoted to ensuring that artificial intelligence behaves in ways consistent with human values and societal norms. The study argues that alignment strategies inevitably mirror the priorities of their creators, influencing how language is interpreted and constructed in AI interactions.

The research illustrates how guardrails not only moderate but also influence tone, framing, and overall dialogue. While some responses may promote educational content or safety guidance, others might restrict engagement with sensitive or controversial topics. This dynamic positions AI platforms as key intermediaries in digital communication, akin to social media algorithms that dictate the visibility of content.

In an era where AI’s role in facilitating communication continues to expand, understanding the implications of guardrails becomes increasingly critical. The insights gleaned from this study not only shed light on AI governance but also raise essential questions about accountability and the sociocultural impact of AI-generated language on public discourse.

See also Machine Learning and IoT Transform Factories with Real-Time Analytics and Cybersecurity Advances

Machine Learning and IoT Transform Factories with Real-Time Analytics and Cybersecurity Advances AWS Bedrock’s Code Interpreter Vulnerability Exposes Sensitive Data Risk, Researchers Warn

AWS Bedrock’s Code Interpreter Vulnerability Exposes Sensitive Data Risk, Researchers Warn Anthropic Launches Institute to Analyze Economic Risks of Advanced AI Systems

Anthropic Launches Institute to Analyze Economic Risks of Advanced AI Systems Australian Entrepreneur Develops Personalized mRNA Cancer Vaccine for Dog Using ChatGPT

Australian Entrepreneur Develops Personalized mRNA Cancer Vaccine for Dog Using ChatGPT Shehryar Khan Advances Machine Learning Research on Patent Innovation at Virginia Tech

Shehryar Khan Advances Machine Learning Research on Patent Innovation at Virginia Tech