Researchers working at the intersection of biology and artificial intelligence have introduced a computational framework for building a more complete picture of cell state. The method, called partially shared multi-modal embedding, integrates several types of biological measurements into a single learned representation of cellular identity. It arrives as biology contends with a growing volume of high-dimensional datasets, each capturing a different aspect of the cell.

Cells are complex, and their states are too multifaceted to be described by any single type of data. Traditional methods often analyze data types such as genomic sequences, transcriptomic profiles, and proteomic measurements in isolation, or combine them with simple fusion techniques that ignore how these layers relate to one another. Zhang, Shivashankar, and Uhler take a different approach: their learning framework identifies features that are partially shared across modalities, capturing the interplay between different layers of cellular state.

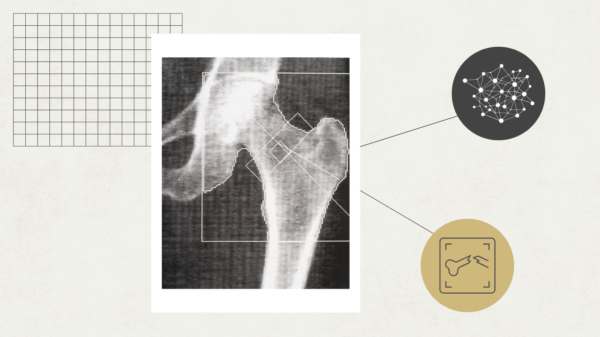

The embedding strategy uses machine learning to learn a latent space in which different data types can be represented together without losing their distinct attributes. The method separates modality-specific signals from biological signals shared across modalities, so the integrated representation reflects the biological complexity of the cell. As a result, the embeddings can improve predictive performance and distinguish cell states that often go unnoticed when each modality is analyzed in isolation.
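The article does not describe the model's architecture, so the sketch below is only a generic illustration of what "partially shared" means, not the authors' implementation. It assumes a toy linear decoder: each cell has a shared latent vector that feeds every modality, plus one private latent vector per modality that feeds only that modality. The modality names (`rna`, `atac`), dimensions, and weights in `DECODERS` are all hypothetical.

```python
"""Toy partially shared latent model (illustrative assumption, not the published model)."""
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def add(u: Vector, v: Vector) -> Vector:
    """Element-wise sum of two equal-length vectors."""
    return [a + b for a, b in zip(u, v)]


# Hypothetical linear decoders: 2 shared latent dims, 1 private dim per modality.
DECODERS: Dict[str, Dict[str, Matrix]] = {
    "rna": {"shared": [[1.0, 0.0], [0.5, 1.0], [0.0, 2.0]],
            "private": [[1.0], [0.0], [-1.0]]},
    "atac": {"shared": [[2.0, 0.0], [0.0, 1.0]],
             "private": [[0.5], [1.5]]},
}


def decode(z_shared: Vector, z_private: Dict[str, Vector]) -> Dict[str, Vector]:
    """Reconstruct each modality from the shared code plus that modality's private code."""
    out: Dict[str, Vector] = {}
    for name, w in DECODERS.items():
        out[name] = add(matvec(w["shared"], z_shared),
                        matvec(w["private"], z_private[name]))
    return out


if __name__ == "__main__":
    base = decode([1.0, 1.0], {"rna": [0.0], "atac": [0.0]})
    # Moving only the RNA-private coordinate: RNA output changes, ATAC output does not.
    moved_private = decode([1.0, 1.0], {"rna": [1.0], "atac": [0.0]})
    # Moving a shared coordinate: both modalities change.
    moved_shared = decode([2.0, 1.0], {"rna": [0.0], "atac": [0.0]})
    print(base, moved_private, moved_shared, sep="\n")
```

Removing the shared block from such a model would recover the "independent modalities" assumption, and removing the private blocks would recover the "total overlap" assumption that earlier methods relied on.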

This approach departs from earlier methods, which assumed that data types were either completely independent or fully overlapping. Instead, it models the more biologically realistic case in which modalities partially overlap while each also contributes unique information. This perspective reflects the multifactorial nature of cell biology, in which genetic, epigenetic, and environmental influences jointly shape cellular behavior. The framework is therefore especially relevant to fields that depend on integrated cellular analyses, such as cancer biology, developmental biology, and precision medicine.

The researchers applied the method to a range of cell types and data modalities. On datasets combining gene expression profiles, chromatin accessibility, and imaging features, the partially shared embedding achieved better clustering and classification than traditional approaches. This result shows how careful integration of heterogeneous datasets can reveal previously obscured cell subpopulations or transient states, which are critical for understanding disease progression and how cells respond to treatment.
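The article reports improved clustering and classification but gives no benchmark details. The toy below, built on invented data, illustrates only the general principle that two modalities can jointly separate cell states that neither separates alone. It uses plain concatenation of the two features rather than the authors' partially shared embedding, and leave-one-out 1-nearest-neighbour accuracy as a stand-in metric; the labels and values are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two equal-length points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def loo_1nn_accuracy(points: List[Point], labels: List[str]) -> float:
    """Leave-one-out 1-nearest-neighbour classification accuracy."""
    correct = 0
    for i, p in enumerate(points):
        # Closest *other* cell; min() keeps the first index on ties.
        j = min((k for k in range(len(points)) if k != i),
                key=lambda k: sq_dist(p, points[k]))
        if labels[j] == labels[i]:
            correct += 1
    return correct / len(points)


# Invented toy data: two cells in each of four states A-D.
# The RNA feature separates {A, B} from {C, D}; ATAC separates {A, C} from {B, D}.
LABELS = ["A", "B", "A", "B", "C", "D", "C", "D"]
RNA = [0.0, 1.0, 3.0, 4.0, 100.0, 101.0, 103.0, 104.0]
ATAC = [0.0, 100.0, 3.0, 103.0, 1.0, 101.0, 4.0, 104.0]

if __name__ == "__main__":
    print(loo_1nn_accuracy([(r,) for r in RNA], LABELS))   # 0.0: RNA alone mixes A/B and C/D
    print(loo_1nn_accuracy([(a,) for a in ATAC], LABELS))  # 0.0: ATAC alone mixes A/C and B/D
    print(loo_1nn_accuracy(list(zip(RNA, ATAC)), LABELS))  # 1.0: together they separate all four
```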

The framework also has implications for translational research. Holistic cellular embeddings could feed predictive models that forecast how cells behave under different stimuli, supporting more targeted therapeutic interventions. These integrated models could also help researchers study cellular plasticity and heterogeneity, two drivers of cancer metastasis and drug resistance that remain major challenges in clinical oncology.

Beyond its biological applications, the work is a notable example of artificial intelligence applied to the life sciences. It shows how machine learning models designed around biological insight can become more relevant and effective. The study also reflects a growing trend of interdisciplinary teams combining expertise in computational science, molecular biology, and biophysics to tackle problems that cross conventional disciplinary boundaries.

The method also addresses technical challenges of multi-modal integration, such as differences in dataset size, quality, and resolution. Because it weights shared and modality-specific components flexibly, the partially shared framework is robust to noise and missing values, a crucial property in real-world applications where ideal datasets are rare.
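The article does not give the published training objective. As a hedged illustration of how shared and modality-specific terms might be weighted, and how an unmeasured modality can simply be skipped, the sketch below assumes an autoencoder-style per-cell loss: a weighted reconstruction error for each measured modality plus a penalty that pulls the shared codes of measured modalities toward each other. The function `partially_shared_loss`, its weights, and the example values are hypothetical.

```python
from itertools import combinations
from typing import Dict, List, Optional

Vector = List[float]


def sq_err(a: Vector, b: Vector) -> float:
    """Sum of squared differences between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def partially_shared_loss(
    observed: Dict[str, Optional[Vector]],
    reconstructed: Dict[str, Vector],
    shared_codes: Dict[str, Vector],
    recon_weight: Dict[str, float],
    align_weight: float,
) -> float:
    """Toy per-cell objective (hypothetical, not the authors' loss).

    observed[m] is None when modality m was not measured for this cell;
    such modalities contribute neither reconstruction nor alignment terms.
    """
    present = {m: x for m, x in observed.items() if x is not None}
    # Weighted reconstruction error for each measured modality.
    recon = sum(recon_weight[m] * sq_err(x, reconstructed[m])
                for m, x in present.items())
    # Pull the shared codes of every pair of measured modalities together.
    align = sum(sq_err(shared_codes[a], shared_codes[b])
                for a, b in combinations(present, 2))
    return recon + align_weight * align


if __name__ == "__main__":
    loss = partially_shared_loss(
        observed={"rna": [1.0, 2.0], "atac": [0.0, 1.0], "imaging": None},
        reconstructed={"rna": [1.0, 1.0], "atac": [0.0, 0.0], "imaging": [9.0]},
        shared_codes={"rna": [0.5], "atac": [0.0], "imaging": [100.0]},
        recon_weight={"rna": 1.0, "atac": 2.0, "imaging": 1.0},
        align_weight=0.5,
    )
    # 1.0 (RNA) + 2.0 (weighted ATAC) + 0.5 * 0.25 (alignment) = 3.125; imaging is skipped.
    print(loss)
```

Raising `align_weight` pushes the modalities toward a common shared code, while per-modality `recon_weight` values let noisier or lower-resolution modalities count for less.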

The integrated embeddings can also be visualized. By mapping high-dimensional data into interpretable latent spaces, researchers can explore relationships among cells and spot patterns that indicate biological processes such as differentiation or disease. These visual insights complement quantitative measures, support hypothesis generation, and make data-driven discovery more accessible to the broader scientific community.

From a computational perspective, the framework is scalable and extensible. It can incorporate additional data types, such as metabolomics or spatial transcriptomics, as new measurement technologies emerge, so the embedding model can keep pace with advances in cellular profiling. The underlying architecture supports efficient training on large datasets using hardware acceleration and parallel computation.
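One way to picture this extensibility, assuming the latent space is laid out as a shared block followed by one private block per modality, is the bookkeeping class below. It is a hypothetical sketch, not the authors' code; `LatentLayout`, the modality names, and the dimensions are illustrative.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass
class LatentLayout:
    """Hypothetical coordinate layout for a partially shared embedding.

    Coordinates [0, shared_dim) are shared by all modalities; each registered
    modality then owns its own contiguous block of private coordinates.
    """
    shared_dim: int
    private_dims: Dict[str, int] = field(default_factory=dict)

    def add_modality(self, name: str, private_dim: int) -> None:
        """Register a new modality by appending a private block."""
        if name in self.private_dims:
            raise ValueError(f"modality {name!r} already registered")
        self.private_dims[name] = private_dim

    @property
    def total_dim(self) -> int:
        return self.shared_dim + sum(self.private_dims.values())

    def slices(self) -> Dict[str, Tuple[int, int]]:
        """Half-open coordinate range of the shared block and each private block."""
        out = {"shared": (0, self.shared_dim)}
        start = self.shared_dim
        for name, dim in self.private_dims.items():
            out[name] = (start, start + dim)
            start += dim
        return out


if __name__ == "__main__":
    layout = LatentLayout(shared_dim=8)
    layout.add_modality("rna", 4)
    layout.add_modality("atac", 4)
    layout.add_modality("metabolomics", 2)  # a newly added measurement type
    print(layout.slices(), layout.total_dim)
```

Registering a new modality appends a private block and leaves existing coordinate ranges unchanged, which is one reason such a layout could, in principle, be extended without reorganizing the rest of the embedding.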

The work combines theoretical development with empirical validation, including comprehensive benchmarking against existing integration methods. The authors report that the partially shared multi-modal embedding is both more accurate and more biologically interpretable than these alternatives, a balance that matters for building trust among experimental biologists who want actionable insights from complex datasets.

Looking toward the future, the potential for clinical translation appears substantial. Cellular state maps derived from these integrated embeddings could facilitate diagnostic stratification, patient monitoring, and personalized intervention strategies. As health data increasingly becomes multi-dimensional, methods like this will be crucial for extracting clinically meaningful signals, ultimately contributing to improved patient outcomes.

The framework could also be extended to systems-level studies at the tissue, organ, or even organism level. Such a multiscale approach fits the holistic goals of systems biology and personalized medicine, offering a computational bridge from molecular changes to physiological states and disease phenotypes. The study also points to future research directions, including the integration of temporal dynamics and clinical metadata, that could deepen our understanding of cellular heterogeneity and function.

In summary, the partially shared multi-modal embedding framework introduced by Zhang, Shivashankar, and Uhler is a significant advance in computational biology, providing a more holistic representation of cellular states. It deepens scientific understanding and gives researchers and clinicians practical tools for working with complex biological data, pointing toward a new era of biomedical discovery driven by integrative data science.

Zhang, X., Shivashankar, G.V. & Uhler, C. Partially shared multi-modal embedding learns holistic representation of cell state. Nat Comput Sci (2026). https://doi.org/10.1038/s43588-025-00948-w
