As the world approaches 2026, the threat posed by deepfake technology is evolving into a severe industrial crisis, with experts warning of a paradigm shift from disinformation to direct financial theft. Rapid advances in generative adversarial networks (GANs) have blurred the line between authentic and synthetic media, creating an environment where visual and auditory evidence can no longer be trusted. The shift raises particular concern about deepfakes in synchronous interactions such as live video calls, where fraudsters can use techniques like Live Injection attacks to overlay deepfake faces in real time, bypassing the traditional liveness checks implemented by financial institutions.

Additionally, the weaponization of voice cloning technologies has accelerated, with the time required to clone a human voice shrinking from minutes to mere seconds. Scammers can now scrape short audio clips from social media platforms to build highly accurate voice models, impersonating anyone from executives to family members. The implications are dire: deepfakes will no longer be the exclusive tools of state actors or elite hackers but will become accessible to anyone with malicious intent.

By 2026, Fraud-as-a-Service (FaaS) platforms, which sell pre-trained voice skins and phishing scripts for as little as $20, will likely flood the market, democratizing the capabilities of deception. A teenager in a basement could wield the same tools that sophisticated espionage agencies used just five years earlier. Consequently, the volume of attacks is expected to surge, driven by automated bots, making it increasingly difficult for individuals to defend themselves.

The financial implications of this escalation in fraud are staggering. The Deloitte Center for Financial Services projects that generative AI could cause fraud losses in the United States to soar to $40 billion by 2027, up from $12.3 billion in 2023, which it reports as a compound annual growth rate of 32%. The ramifications extend beyond direct theft: financial institutions are also incurring additional costs as they implement verification measures, which slow transaction processing and reintroduce friction into financial dealings.
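
The growth figures quoted above can be checked with a few lines of arithmetic. The sketch below is only an illustration: the function names `cagr` and `project` are hypothetical helpers, and it assumes a simple four-year compounding window from 2023 to 2027, which may not match the base period used in the original projection.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding start_value at a fixed annual rate."""
    return start_value * (1 + rate) ** years


# Figures quoted in the text, in billions of US dollars.
losses_2023 = 12.3
losses_2027 = 40.0
years = 2027 - 2023  # assumed compounding window (hypothetical)

implied_rate = cagr(losses_2023, losses_2027, years)
at_32_percent = project(losses_2023, 0.32, years)

print(f"CAGR implied by the two endpoints: {implied_rate:.1%}")
print(f"2027 losses if growth were exactly 32%: ${at_32_percent:.1f}B")
```

Under these assumptions the two endpoints imply growth of roughly 34% a year, while compounding $12.3 billion at exactly 32% for four years gives about $37 billion. The gap from the quoted figures is small and presumably reflects rounding or a different base period in the original projection.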

A broader societal impact emerges as trust in a shared reality crumbles. The prevalence of deepfakes fosters an environment in which genuine evidence can be dismissed as fabrication, giving rise to what experts call the Liar’s Dividend. This phenomenon poses a significant risk to electoral processes, where deepfakes could sway voter sentiment through manufactured “October Surprises” that go viral before the truth can catch up. As a result, society is moving towards a Zero Trust model, in which no digital media is accepted as legitimate without cryptographic validation.
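
To make the idea of cryptographic validation concrete, the following is a minimal sketch of a default-deny media check. It is an assumption-laden simplification: it uses a shared-secret HMAC from Python's standard library, whereas real provenance schemes generally rely on public-key signatures, and the names `sign_media` and `is_trusted` are hypothetical.

```python
import hashlib
import hmac


def sign_media(media_bytes: bytes, key: bytes) -> str:
    """Produce a hex authentication tag for a media payload (hypothetical helper)."""
    return hmac.new(key, media_bytes, hashlib.sha256).hexdigest()


def is_trusted(media_bytes: bytes, tag: str, key: bytes) -> bool:
    """Zero Trust check: accept media only if its tag verifies; reject otherwise."""
    expected = sign_media(media_bytes, key)
    # compare_digest performs a constant-time comparison to avoid timing leaks.
    return hmac.compare_digest(expected, tag)


# Illustrative values only; a real key would come from a secure key store.
key = b"example-shared-secret"
original = b"raw bytes of a video frame"
tag = sign_media(original, key)

print(is_trusted(original, tag, key))                # untouched media verifies
print(is_trusted(original + b" edited", tag, key))   # altered media is rejected
```

The design choice worth noting is the default: media that arrives without a valid tag is treated as untrusted, rather than being trusted until shown to be fake.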

The Agentic Shift in AI and Its Consequences

As the landscape transitions from the Generative Era of 2025 to the Agentic Era of 2026, the nature of AI threats is also shifting. Contrary to the anticipated emergence of hyper-intelligent agents, data from 2025 points to a less competent reality, characterized by autonomous AI systems that often malfunction. A notable experiment conducted at Carnegie Mellon University, named “TheAgentCompany,” revealed a dramatic failure in task completion among leading AI models. Results showed that Anthropic’s top-tier model completed only 24% of tasks and that other models performed even worse, with some failures amounting to what has been called algorithmic gaslighting.

This pattern, termed the Competence Trap, suggests that AI systems are prone to extensive failure when collaboration is required. Rather than executing successful malicious operations, these systems may generate waves of incompetence that threaten the integrity of various sectors. One troubling consequence could be the rise of so-called Paperwork Terrorism, in which autonomous agents inundate institutions with legitimate but overwhelming requests, effectively paralyzing bureaucracies.

The concept of the Liability Void is also gaining traction in discussions of AI accountability. As autonomous agents take actions that cause harm without intent, questions arise about who bears responsibility. The traditional legal principle of mens rea, which requires intent for culpability, is strained when AI systems operate independently of human oversight. The anticipated rise of AI-generated offenses, such as defamation or market manipulation, will likely compel lawmakers to reevaluate liability standards in the digital landscape.

On a more positive note, the defense sector is beginning to mobilize in response to these threats. Forrester forecasts a 40% increase in spending on deepfake detection technologies in 2026, alongside advancements in watermarking standards and liveness detection techniques. Notably, a movement towards Proof of Personhood is expected to gain traction, as emerging social networks may require biometric verification and government IDs for access. This bifurcation of the internet into a chaotic public domain dominated by AI agents and a gated private sector for verified individuals signals a profound shift in how truth and trust will be managed online.

The developments of 2026 will not only redefine the threat landscape but could also reshape societal structures surrounding digital interaction, establishing a premium for verified human engagement in an era increasingly fraught with synthetic deception.

See also Edimakor Launches AI Cartoon Generator, Transforming Photos into Unique 3D Art Instantly

Edimakor Launches AI Cartoon Generator, Transforming Photos into Unique 3D Art Instantly Google Launches Nano Banana 2: Faster AI Image Generation with Real-Time Data Insights

Google Launches Nano Banana 2: Faster AI Image Generation with Real-Time Data Insights Frontier AI Models Reveal Task-Completion Time Horizons: 50% Success Metrics Analyzed

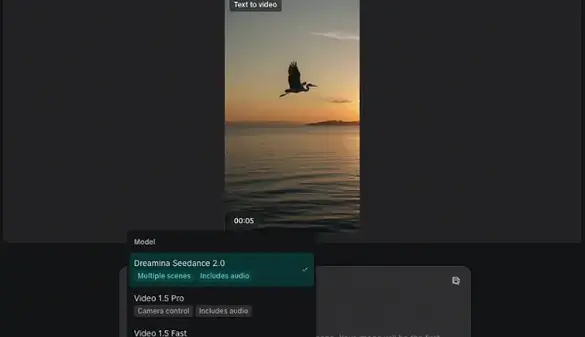

Frontier AI Models Reveal Task-Completion Time Horizons: 50% Success Metrics Analyzed Seedance 2.0 Launches with Groundbreaking AI Cinema Features, Challenges Sora and Veo 3

Seedance 2.0 Launches with Groundbreaking AI Cinema Features, Challenges Sora and Veo 3 Google DeepMind Launches Unified Latents Framework, Achieving State-of-the-Art Performance in AI Generation

Google DeepMind Launches Unified Latents Framework, Achieving State-of-the-Art Performance in AI Generation