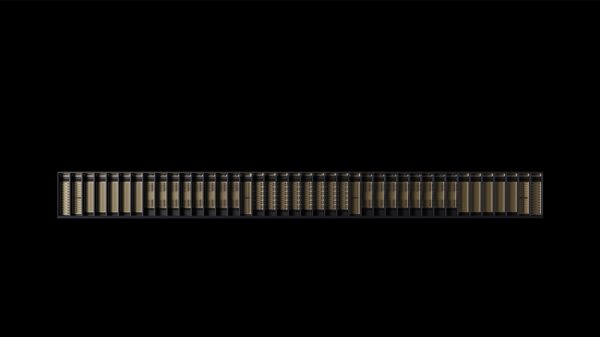

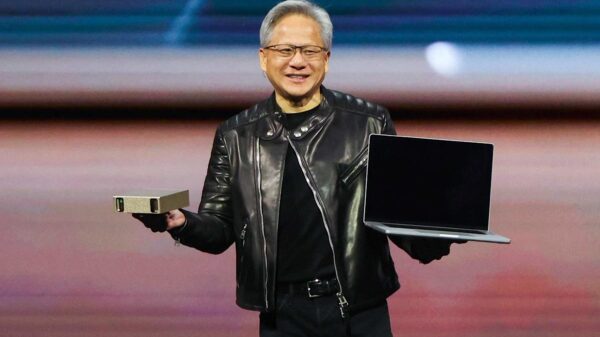

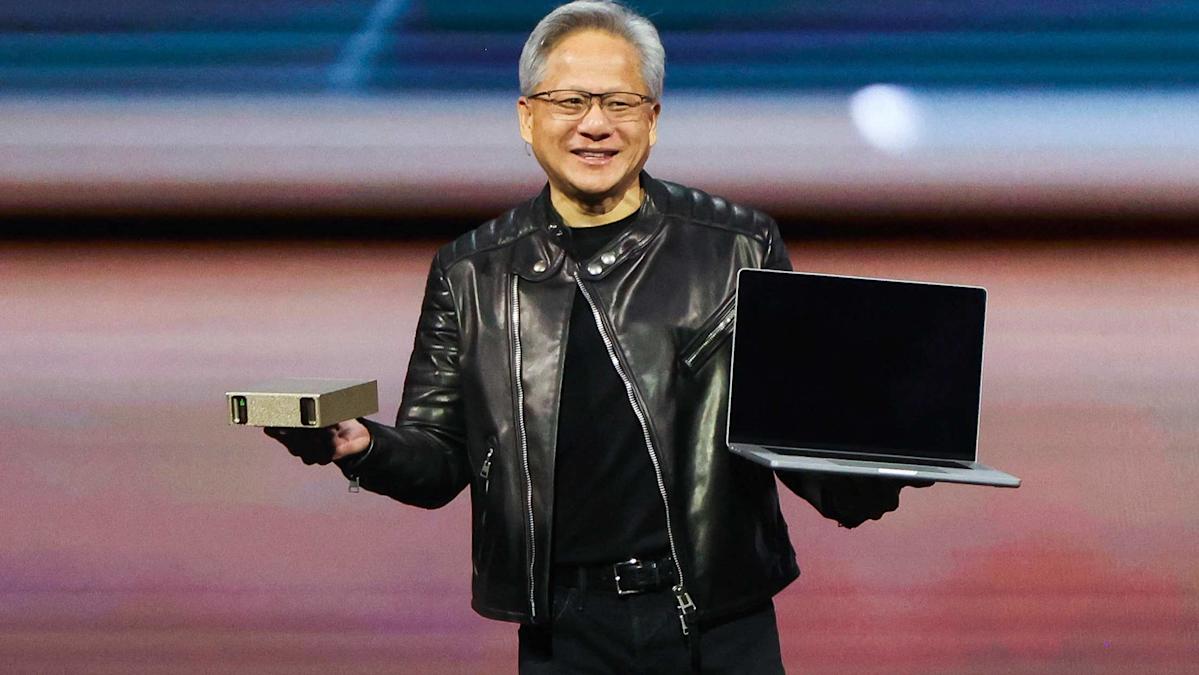

Nvidia (NVDA) opened its GTC event in San Jose, California, on Monday, unveiling a series of chips and platforms, including the all-new Nvidia Groq 3 language processing unit (LPU) and a rack built around its Vera central processing unit (CPU). The new offerings put Nvidia in direct competition with established rivals such as Intel (INTC) and AMD (AMD).

Overall, Nvidia announced five server racks tailored for different roles within AI data centers. The centerpiece of the announcements was the Nvidia Groq 3 chip, which the company licensed from Groq as part of a $20 billion deal last December. The deal also included hiring Groq’s founder Jonathan Ross and president Sunny Madra, along with other team members.

Groq’s processors specialize in AI inferencing, the process that occurs when users interact with AI models like OpenAI’s ChatGPT, Anthropic’s Claude, or Google’s Gemini. While Nvidia’s graphics processing units (GPUs) can both train and execute AI models, the increasing focus on running models necessitates a dedicated inferencing chip, a gap that Groq 3 aims to fill.

According to Nvidia’s vice president of hyperscale and high-performance computing, Ian Buck, Nvidia’s GPUs support significantly more memory than Groq 3, but the LPU’s memory is faster. Pairing the two is intended to combine the GPU’s memory capacity with the LPU’s memory speed.

Nvidia is introducing the Groq 3 LPX platform, a server rack powered by 128 individual Groq 3 LPUs. When the rack is paired with the company’s Vera Rubin NVL72 rack, Nvidia claims customers could see 35 times higher throughput per megawatt of power and 10 times the revenue opportunity. The LPX architecture is designed for trillion-parameter models and million-token contexts, with the goal of optimizing efficiency across power, memory, and computation.

This strategic move should alleviate concerns about Nvidia losing its competitive edge in the AI sector to emerging companies focused on inferencing processors. Alongside the LPX platform, Nvidia also revealed its Vera CPU rack. The Vera Rubin superchip comprises three processors: one Vera CPU and two Rubin GPUs. Nvidia is now also offering Vera as a standalone chip, housed in dedicated racks that integrate 256 liquid-cooled Vera chips within a single system.

As agentic AI, in which autonomous bots perform tasks on behalf of users, gains traction, CPUs are becoming increasingly crucial. Although GPUs and LPUs power AI models, tasks like browsing websites or extracting information from spreadsheets rely on CPU performance. CPUs are also vital for data mining, personalization, and the analytical processes that feed GPU operations and AI models.

“Vera is the best CPU for agentic AI workloads,” Buck stated. “We’ve designed a new kind of CPU, the Olympus core, engineered by Nvidia for AI execution. Vera enables faster agentic responses under the extreme conditions for all of the agentic AI use cases and reinforcement learning.”

This announcement is not Nvidia’s first foray into CPU servers. Last month, the company disclosed a partnership with Meta (META) to supply the largest deployment of its previous-generation Grace CPUs. However, the introduction of Vera marks a significant effort by Nvidia to position itself not merely as a GPU manufacturer but as a competitor in the CPU arena, challenging both Intel and AMD in the data center market.

In addition to the LPX and Vera racks, Nvidia showcased its new storage rack system, BlueField-4 STX, which the company says outperforms traditional storage solutions. The company also presented its Spectrum-6 SPX networking rack. These products are expected to bolster Nvidia’s data center revenue as demand for AI platforms continues to grow.

Nvidia reported data center revenue of $193.5 billion in fiscal 2026, up about 67% from $116.2 billion in fiscal 2025. With major hyperscalers like Amazon (AMZN), Google, Meta, and Microsoft (MSFT) projected to invest $650 billion this year in AI capabilities, Nvidia is well-positioned to capture a substantial share of this growing market.
