The rise of artificial intelligence (AI) is fundamentally transforming the responsibilities of platform engineers and Site Reliability Engineers (SREs) by shifting the balance toward automation. As AI systems increasingly take on operational roles, they help manage modern infrastructure, reducing the burden on human workers. Natan Yellin, CEO of Robusta, Itiel Shwartz, co-founder and CTO of Komodor, and Luca Forni, CEO of Akamas, highlight this evolution, suggesting that AI is becoming more than a monitoring tool; it is actively participating in infrastructure management.

Traditionally, platform engineering focused on creating internal developer platforms (IDPs) to simplify complex systems like Kubernetes for human developers. However, Yellin argues that the concept of IDPs is becoming outdated. “Today, platform engineering is about building platforms for AI agents,” he stated. Instead of developers manually raising tickets to deploy code, they can now instruct AI agents, which autonomously assemble, verify, and deploy compliant packages. This shift allows engineers to transition from gatekeepers to final approvers, significantly reducing their workload.

Such advancements enable AI agents to tackle repetitive and mundane tasks that often consume a significant portion of engineers’ time. Shwartz noted that Komodor can independently resolve around 30% of routine incidents, notifying humans only when necessary. These agents can detect anomalies, investigate their causes, and execute remedial actions based on a comprehensive understanding of the system’s current state. This flexibility is crucial, as production environments often defy static rule-based systems.

However, effective autonomous incident response demands a high level of accuracy. Shwartz emphasized the importance of validating AI models before deploying them in live environments, stating, “Accuracy validation is one of the most overlooked things in modern LLM development.” This caution is echoed by data from Omdia, which reveals that organizations often lack robust AI-specific quality metrics.

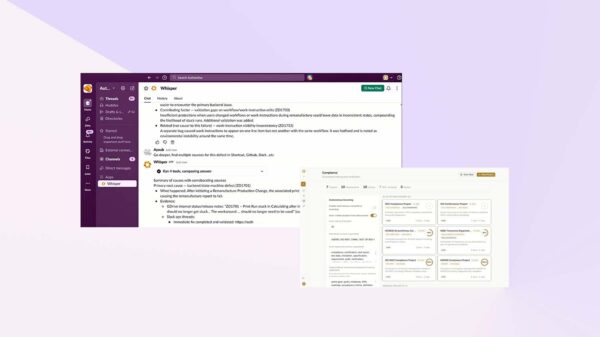
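
The validation step Shwartz describes could be sketched as a simple gate: score the agent against incidents whose root cause is already known, and block production rollout below a chosen accuracy. This is a minimal illustration, not Komodor's method. The function names, the shape of the labeled cases, and the 0.9 threshold are all assumptions.

```python
from typing import Callable, Sequence, Tuple

# A labeled case pairs an incident description with its known root cause.
LabeledCase = Tuple[str, str]


def accuracy(predict: Callable[[str], str], cases: Sequence[LabeledCase]) -> float:
    """Fraction of labeled incidents for which the agent names the right cause."""
    if not cases:
        raise ValueError("need at least one labeled case")
    correct = sum(1 for symptoms, expected in cases if predict(symptoms) == expected)
    return correct / len(cases)


def ready_for_production(
    predict: Callable[[str], str],
    cases: Sequence[LabeledCase],
    min_accuracy: float = 0.9,  # hypothetical threshold, tune per organization
) -> bool:
    """Gate: allow autonomous remediation only above the accuracy threshold."""
    return accuracy(predict, cases) >= min_accuracy
```

In practice `predict` would wrap a call to the agent under test, and the labeled cases would come from past incidents with confirmed post-mortems.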

Providing AI agents with the right context is equally essential for their effectiveness. Yellin suggests that agents should be connected to multiple sources of information, creating “circles of context” that enhance their problem-solving capabilities. These circles include observability data, cloud provider information, source control, IT service management, organizational knowledge, and operational databases. Each additional context layer unlocks new avenues for reasoning and decision-making. However, as Shwartz pointed out, adding more data can increase the risk of AI “hallucinations,” where agents generate incorrect outputs. Therefore, organizations must carefully manage the data each agent receives.
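
One way to picture managing the data each agent receives is an allowlist per agent role, with a cap on how much each source contributes. The sketch below uses the six circles named above; the role names, the role-to-circle mapping, and the `max_items` cap are hypothetical and do not describe either vendor's product.

```python
from typing import Dict, List

# The six "circles of context" named in the article, in a fixed order.
CIRCLES = (
    "observability",
    "cloud_provider",
    "source_control",
    "itsm",
    "org_knowledge",
    "operational_db",
)

# Hypothetical mapping: which circles each kind of agent may read.
AGENT_CIRCLES = {
    "detection": {"observability"},
    "investigation": {"observability", "cloud_provider", "source_control"},
    "remediation": {"cloud_provider", "itsm", "org_knowledge"},
}


def select_context(
    role: str, available: Dict[str, List[str]], max_items: int = 5
) -> Dict[str, List[str]]:
    """Return only the circles this role is allowed to see, capped per circle."""
    allowed = AGENT_CIRCLES.get(role, set())
    picked = {}
    for circle in CIRCLES:
        if circle in allowed and circle in available:
            # Trim each source so one noisy feed cannot flood the prompt.
            picked[circle] = available[circle][:max_items]
    return picked
```

An unknown role receives no context at all, which errs on the side of too little data rather than too much.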

Building on this principle, both Robusta and Komodor have concluded that a coordinated system of specialized agents is more effective than a single, generalized model. Robusta’s HolmesGPT, for example, filters and aggregates observability data to present only the most relevant information for specific issues. In similar fashion, Komodor implements a tiered approach where different agents are responsible for detection, investigation, and remediation, streamlining the problem-solving process.
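
The tiered split into detection, investigation, and remediation can be sketched as three small functions chained together, with escalation to a human when no known fix applies. This is a toy model under stated assumptions: the `Incident` fields, the lookup tables, and the restart threshold are invented for illustration, and real agents would call an LLM and live infrastructure APIs rather than dictionaries.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Incident:
    service: str
    restarts: int
    cause: Optional[str] = None
    action: Optional[str] = None
    escalated: bool = False


# Hypothetical stand-ins for what real agents would infer or look up.
KNOWN_CAUSES = {"checkout": "OOMKilled"}
RUNBOOK = {"OOMKilled": "raise memory limit"}


def detect(restart_counts: Dict[str, int], threshold: int) -> List[Incident]:
    """Detection tier: flag services restarting more often than the threshold."""
    return [Incident(s, n) for s, n in restart_counts.items() if n > threshold]


def investigate(incident: Incident) -> Incident:
    """Investigation tier: attach a probable cause when one is known."""
    incident.cause = KNOWN_CAUSES.get(incident.service)
    return incident


def remediate(incident: Incident) -> Incident:
    """Remediation tier: apply a known fix, otherwise notify a human."""
    action = RUNBOOK.get(incident.cause) if incident.cause else None
    if action is None:
        incident.escalated = True
    else:
        incident.action = action
    return incident


def run_pipeline(restart_counts: Dict[str, int], threshold: int = 3) -> List[Incident]:
    """Chain the three tiers over every detected incident."""
    return [remediate(investigate(i)) for i in detect(restart_counts, threshold)]
```

Keeping each tier separate means each one can be validated and given its own limited context independently of the others.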

AI agents are also proving valuable in optimizing IT expenditures without compromising system performance. Forni noted that inefficiencies often lie within the workloads themselves, rather than the hardware. After Akamas adjusted customers’ Java Virtual Machine (JVM) configurations, many reported cost reductions of 50% to 70%, with some achieving up to 91% savings in just over two weeks. One large e-commerce company even noted an improvement in response time, which translated to approximately $800,000 in savings.

As organizations adapt to these technologies, they are encouraged to redesign their platforms and workflows around AI agents. That means rebuilding IDPs for autonomous deployment, delegating routine incidents to AI (Komodor puts the share it resolves independently at around 30%), and expanding the circles of context available to agents. It is equally important to manage each agent's "context diet": giving it the information it needs for its task while avoiding the overload that raises the risk of hallucinations. By tapping into areas often neglected by human engineers, such as JVM tuning and logging optimizations, organizations can cut costs while maintaining performance.

In the fast-evolving landscape of technological infrastructure, embracing AI-driven automation is not just beneficial but essential for organizations aiming to stay competitive. Those who integrate AI into their operational frameworks now will likely find themselves at a significant advantage in the near future.
