The artificial intelligence (AI) industry is accelerating rapidly, and with this speed comes significant responsibility. In a pivotal move toward safer AI development, Anthropic has appointed a specialized manager tasked with mitigating risks associated with chemical and explosive threats. This decision reflects a broader trend among leading AI firms to prioritize safety alongside performance, marking a potential transformation in industry standards as we move into 2026.

This development comes amid growing concerns from governments, investors, and researchers about the misuse of advanced AI models like Claude. By proactively addressing potential issues, Anthropic is signaling a shift in how AI companies are expected to operate, positioning itself at the forefront of responsible innovation.

The new hire will focus on evaluating and reducing the risks that AI systems could pose if misapplied in creating harmful materials. This role is not merely technical; it integrates safety leadership with scientific understanding and risk management, reflecting a comprehensive approach to AI safety.

As modern AI systems become more capable of processing extensive scientific data and producing detailed outputs, the potential for misuse grows as well. Experts warn that although current AI models may not yet be fully capable of enabling such harm, the risks are increasing. Anthropic aims to stay ahead of these challenges by implementing rigorous safety measures.

Anthropic’s safety strategy includes advanced risk testing, where AI systems are assessed under controlled conditions to identify potential misuse. Additionally, strict guardrails are being established to ensure models like Claude reject harmful instructions and limit dangerous outputs. The company also collaborates with scientists and policymakers to enhance its safety protocols continuously.

Anthropic has consistently positioned itself as a safety-first AI company, distinguishing itself from competitors who often prioritize speed and scale. The firm’s commitment to “constitutional AI” involves training systems to adhere to ethical guidelines and avoid harmful tasks. This latest strategic hire reinforces the company’s resolve to build robust safety mechanisms beyond current legal requirements.

Critics often question whether such safety initiatives could hinder AI innovation, but Anthropic contends that the opposite is true. By establishing strong safety controls, the company may foster broader adoption of AI technologies, as businesses and governments are more likely to endorse systems that demonstrate a commitment to safety.

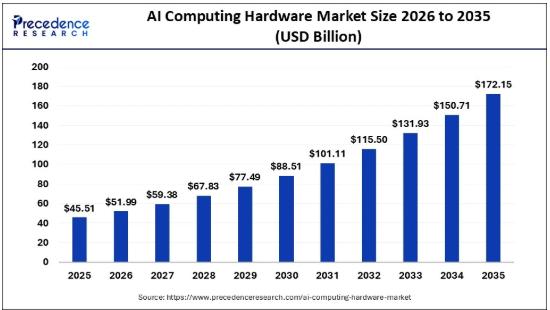

From an investment perspective, this focus on safety is increasingly critical. Companies that prioritize risk management may gain long-term trust and market leadership, which could have significant implications for AI stock performance in the tech sector. Estimates suggest that the AI safety market could grow to over $25 billion by 2030, encompassing research, compliance, and risk management investments.

As the global dialogue around AI safety intensifies, regulatory frameworks are expected to become more stringent. Reports indicate that regulations could expand by 40 percent within the next three years, compelling companies to demonstrate that their systems cannot be used for harmful purposes. Anthropic’s proactive measures align with these anticipated changes, positioning the firm favorably in a landscape increasingly focused on compliance.

The implications for businesses are significant: compliance with emerging safety standards will likely become a key factor in AI adoption. Companies using AI tools are likely to prefer platforms that meet rigorous safety criteria, making Anthropic’s initiatives particularly relevant for organizations navigating this evolving regulatory environment.

With Anthropic setting a new standard in safety, other AI companies may follow suit, leading to elevated industry-wide safety standards. Companies like OpenAI and Google DeepMind also emphasize safety but may take different approaches, such as controlled deployment and academic collaboration.

Investors are increasingly scrutinizing how AI firms manage risk, with safety becoming a central component of investment considerations. Analysts now evaluate AI companies, including privately held ones like Anthropic, not only on revenue growth but also on their ethical and regulatory preparedness. A strong focus on safety could therefore translate into more stable valuations and long-term growth potential.

The future of AI safety looks promising, with predictions indicating a 60 percent increase in safety roles within the next five years. As companies like Anthropic lead the way, we may soon witness a significant rise in specialized safety positions, greater collaboration between the tech sector and government, and the establishment of new global standards for AI risk management.

For everyday users, these developments may seem technical, but they carry substantial implications. Safer AI systems are designed to better protect users from harmful content and misuse. People who rely on AI tools for writing, coding, or research will benefit indirectly from these safety advancements, which aim to make AI both helpful and safe.

In summary, Anthropic’s decision to hire a safety-focused manager represents a pivotal moment in the AI industry, underscoring that safety is not an optional add-on but an essential part of responsible AI development. By taking proactive measures, the company is building trust among users, investors, and regulators, positioning itself as a leader in this critical domain. As the AI landscape evolves, companies that successfully balance innovation with safety will likely emerge as market frontrunners.
