Social media platform X has announced a revision to its creator policy aimed at combating the spread of AI-generated misinformation, specifically targeting war-related videos that are not clearly labeled as artificial. This move comes amid growing concerns among experts about the sophistication of AI-generated propaganda, which has shifted from broad broadcasts to more targeted psychological manipulation.

Under the revised policy, creators who fail to disclose the artificial nature of their content may face severe consequences, including the loss of monetization privileges and potential bans from X’s creator revenue-sharing program. “Historically, propaganda was amplified through television debates, newspapers, or mass forwards on messaging platforms. AI has transformed propaganda from a loud broadcast into a personalised whisper,” noted Kartik Gupta, an instructor in AI and Machine Learning at the Newton School of Technology.

Modern AI systems can analyze an individual’s behavioral data, linguistic patterns, and social engagement signals, allowing them to generate tailored narratives that resonate with a person’s cultural background, religious identity, or political leanings. This capability raises significant concerns, particularly as a study conducted in late 2025 revealed that only a small fraction of participants could distinguish between real and AI-generated content. This difficulty underscores the potential for AI-driven propaganda to erode trust before manipulation is recognized.

Atul Rai, co-founder and CEO of Staqu Technologies, emphasizes the responsibility of social media platforms in curbing the distribution of AI-generated misinformation. “Social media platforms serve as the primary distribution infrastructure through which AI-generated misinformation spreads,” he stated. Given their technological capabilities, Rai argues that these platforms must deploy advanced AI systems to identify manipulated content, including deepfakes and synthetic media.

“Deepfakes and synthetic media gain traction because platform algorithms prioritize engagement, allowing manipulated content to reach large audiences,” Rai added. He stressed the need for stronger governance frameworks, including rapid escalation protocols during geopolitical crises and transparent labeling of AI-generated content, along with partnerships with fact-checking organizations.

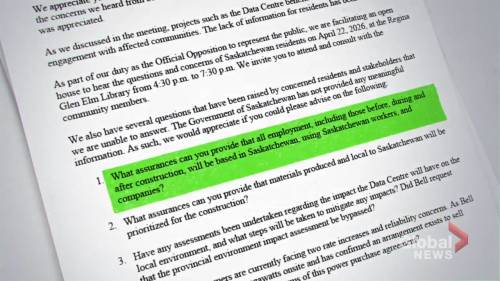

Accountability does not rest solely with platforms, however. Kaushal Bheda, director at Pelorus Technology, contends that creators who produce disinformation or propaganda content are directly responsible for any harm caused. Moreover, platform developers must implement preventive measures and respond swiftly to law enforcement requests. When authorities identify ongoing harmful campaigns, delays in providing data or suspending accounts could exacerbate the damage. Immediate cooperation with investigations and proactive intelligence sharing are considered essential responsibilities for platforms operating on a global scale.

Industry Response

Gupta further warned that society is entering an era where authenticity cannot be assumed. He advocates for systemic verification processes rather than relying on individual evaluations. Governments, educational institutions, and platforms must establish stronger early-warning systems and authentication protocols, especially during high-risk periods like elections or natural disasters. “There may be difficult debates ahead around temporary amplification controls during national emergencies. While controversial, such measures reflect a broader tension between open digital ecosystems and public safety,” he said.

Concerns over the rapid dissemination of misinformation extend to the mechanisms used to address complaints about harmful content. Garry Singh, president of IIRIS, pointed out that while many large platforms have implemented methods to identify AI-generated propaganda, the key issue remains the exploitation of this content by malicious actors. “The mechanism to address complaints and remove bad content is slow, causing concerns of spreading before the content can be taken down,” Singh explained. He added that the spectrum of risks from false emergencies is vast, potentially leading to life safety issues, financial loss, resource depletion, and the propagation of biased opinions.

As AI technology continues to advance, the interplay between digital platforms and misinformation will likely shape public discourse and societal trust. The revisions to X’s creator policy may serve as a crucial step in addressing these challenges, but the effectiveness of such measures will depend on the collective responsibility of creators, developers, and platforms to mitigate the risks associated with AI-driven content.
