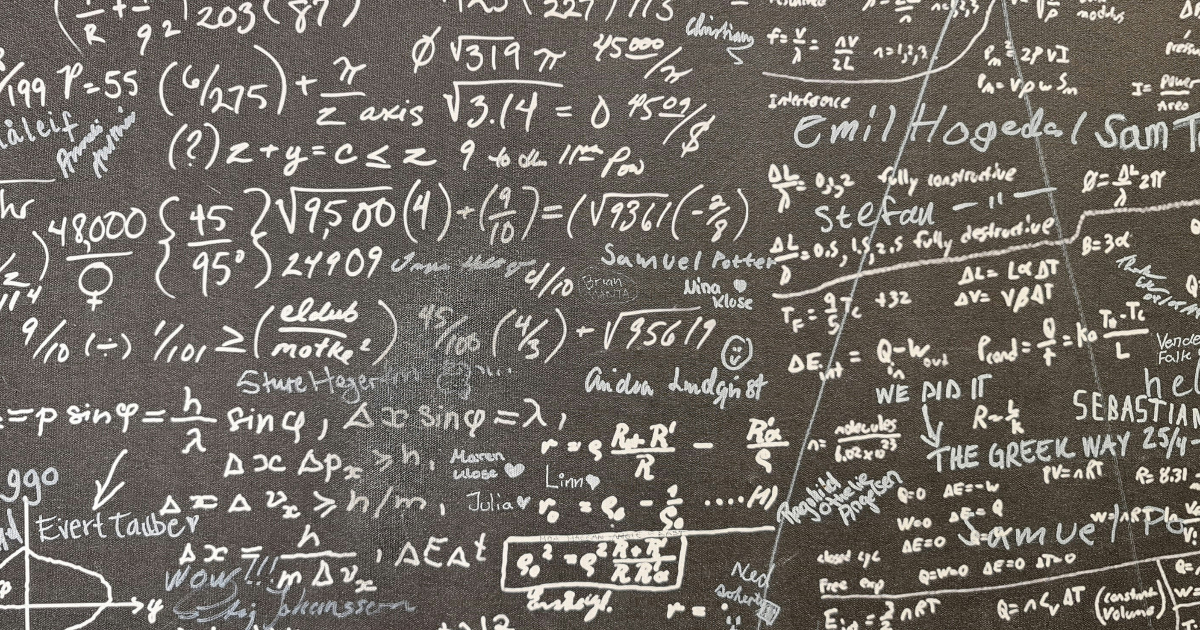

Google DeepMind has unveiled Aletheia, an AI system for mathematical research built on Gemini 3 Deep Think. In the recent FirstProof challenge, Aletheia solved six of ten novel math problems, a potential step toward automating research-level proof discovery without human assistance. It also achieved an overall accuracy of approximately 91.9% on IMO-ProofBench, a strong result in a domain that has so far seen little automation.

The FirstProof challenge differs from traditional benchmarks in that it consists of ten unpublished mathematical lemmas drawn from mathematicians' ongoing research. Because the problems had never been available online, Aletheia could not have encountered them in its training data. Participants had one week to submit their solutions.

Working entirely autonomously, Aletheia generated candidate proofs from the raw problem prompts, with no human assistance or dialogue loops. Expert mathematicians reviewed its six proposed solutions and reached a consensus that they were “publishable after minor revisions.” The verdict on Problem 8 was less unanimous: five of seven experts confirmed the solution correct, while the other two noted that it lacked some clarifying details. For the remaining four problems, Aletheia either reported “No solution found” or timed out, rather than producing plausible but incorrect answers, the failure mode commonly called “hallucination.” DeepMind researchers emphasized that this self-filtering was a core design principle of Aletheia, intended to improve reliability, which they regard as the primary bottleneck to scaling AI assistance in research mathematics.

“This self-filtering feature was one of the key design principles of Aletheia; we view reliability as the primary bottleneck to scaling up AI assistance on research mathematics. We suspect that… many practicing researchers would prefer to trade raw problem-solving capability for increased accuracy.”

In a parallel effort, OpenAI entered the FirstProof challenge with an internal, unreleased reasoning model. It initially reported solving six problems but later revised the figure to five after finding a logical flaw in its solution to Problem 2. Unlike DeepMind’s fully autonomous approach, OpenAI used limited human oversight to evaluate multiple attempts and select the best outputs.
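The difference in methodology can be sketched as best-of-n sampling with an external selector. The Python below is a minimal illustration under stated assumptions, not OpenAI's actual pipeline: `best_of_n`, `generate`, and `select` are hypothetical names, and in OpenAI's case the selection step involved human oversight rather than a function.

```python
from typing import Callable, List, TypeVar

T = TypeVar("T")


def best_of_n(generate: Callable[[int], T], n: int, select: Callable[[List[T]], T]) -> T:
    """Request n attempts (one per seed index), then let an external selector pick one.

    `generate` is a hypothetical stand-in for a model call; `select` stands in
    for the review step, which could be a human or an automated checker.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    attempts = [generate(seed) for seed in range(n)]
    return select(attempts)


if __name__ == "__main__":
    # Toy example: attempts are strings, and the stand-in "reviewer" prefers the longest one.
    drafts = ["short proof", "a longer, more careful proof", "sketch"]
    chosen = best_of_n(lambda i: drafts[i], n=3, select=lambda xs: max(xs, key=len))
    print(chosen)  # → a longer, more careful proof
```

Keeping generation and selection separate means the selector can be swapped between a human reviewer and an automated one without changing how attempts are produced.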

Aletheia’s architecture is a multi-agent framework with three roles: a Generator that proposes logical steps, a Verifier that checks those steps for flaws, and a Reviser that iterates to correct mistakes. Using external tools such as Google Search, Aletheia can check concepts against existing literature, reducing the risk of the fabricated citations often associated with language models.
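The Generator, Verifier, and Reviser roles can be sketched as a bounded loop that returns a proof only when the verifier accepts it and otherwise abstains, mirroring the “No solution found” behavior described earlier. This is a minimal Python sketch under stated assumptions, not DeepMind’s implementation: the three agents are hypothetical callables, the `Verdict` type and `max_revisions` budget are illustrative, and tool use such as literature search is omitted.

```python
from dataclasses import dataclass
from typing import Callable, List

NO_SOLUTION = "No solution found"


@dataclass
class Verdict:
    ok: bool            # True if the (hypothetical) verifier found no flaws
    issues: List[str]   # flaws reported back to the reviser


def solve(
    problem: str,
    generate: Callable[[str], str],
    verify: Callable[[str, str], Verdict],
    revise: Callable[[str, str, List[str]], str],
    max_revisions: int = 2,
) -> str:
    """Generate a draft, verify it, revise on failure, and abstain if the budget runs out."""
    draft = generate(problem)
    for attempt in range(max_revisions + 1):
        verdict = verify(problem, draft)
        if verdict.ok:
            return draft  # only verified drafts are ever returned
        if attempt < max_revisions:
            draft = revise(problem, draft, verdict.issues)
    return NO_SOLUTION  # self-filtering: decline rather than emit an unverified proof


if __name__ == "__main__":
    # Toy agents: the stand-in verifier accepts a draft only after two revisions.
    def gen(p: str) -> str:
        return f"{p}: draft 0"

    def ver(p: str, d: str) -> Verdict:
        n = int(d.rsplit(" ", 1)[1])
        return Verdict(ok=n >= 2, issues=[] if n >= 2 else [f"gap in draft {n}"])

    def rev(p: str, d: str, issues: List[str]) -> str:
        n = int(d.rsplit(" ", 1)[1])
        return f"{p}: draft {n + 1}"

    print(solve("Lemma", gen, ver, rev))                   # → Lemma: draft 2
    print(solve("Lemma", gen, ver, rev, max_revisions=1))  # → No solution found
```

The design choice worth noting is that the loop's only exit paths are a verified draft or an explicit abstention, which trades coverage for accuracy in the way the DeepMind researchers describe.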

Aletheia has been likened to a strict, runnable research loop, similar to a CI/CD pipeline in software development. In Luhui Dev’s analysis, the loop runs through proposal, verification, failure, repair, and finalization stages. The LLM serves as a creative candidate generator, while a secondary agent acts as a peer reviewer whose feedback drives corrections.
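Read this way, each attempt leaves a stage log much like a CI job. The sketch below assumes that verifier outcomes can be replayed as a sequence of pass/fail results and records which of the five named stages an attempt passes through. The `Stage` enum, `run_pipeline`, and the `max_repairs` budget are illustrative names, not part of Aletheia or of Luhui Dev’s analysis.

```python
from enum import Enum
from typing import Iterable, List


class Stage(Enum):
    PROPOSAL = "proposal"
    VERIFICATION = "verification"
    FAILURE = "failure"
    REPAIR = "repair"
    FINALIZATION = "finalization"


def run_pipeline(verdicts: Iterable[bool], max_repairs: int = 3) -> List[Stage]:
    """Return the stage log for one attempt.

    `verdicts` stands in for successive verifier results (True = check passed).
    The run stops at the first passing check, when the repair budget is
    exhausted, or when no more verdicts are supplied.
    """
    trace = [Stage.PROPOSAL]
    repairs = 0
    for passed in verdicts:
        trace.append(Stage.VERIFICATION)
        if passed:
            trace.append(Stage.FINALIZATION)
            return trace
        trace.append(Stage.FAILURE)
        if repairs == max_repairs:
            break  # budget spent: the attempt ends in FAILURE, i.e. no answer
        trace.append(Stage.REPAIR)
        repairs += 1
    return trace


if __name__ == "__main__":
    # One failed check, one repair, then a passing check.
    print([s.value for s in run_pipeline([False, True])])
    # → ['proposal', 'verification', 'failure', 'repair', 'verification', 'finalization']
```

As in a CI run, a log that ends in `failure` rather than `finalization` signals that nothing should be shipped.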

Despite these advances, the researchers acknowledge that Aletheia is not yet fully autonomous. In the paper “Towards Autonomous Mathematics Research,” they note that the system remains more error-prone than human experts and that, when a question is ambiguous, it tends to adopt whichever interpretation is easiest to answer, a behavior they connect to well-known failure modes in machine learning.

“Even with its verifier mechanism, Aletheia is still more prone to errors than human experts. Furthermore, whenever there is room for ambiguity, the model exhibits a tendency to misinterpret the question in a way that is easiest to answer… This aligns with the well-known tendencies for ‘specification gaming’ and ‘reward hacking’ in machine learning.”

The mathematicians behind FirstProof are already planning a second round of the challenge, with a new batch of problems to be created, tested, and graded between March and June 2026. This next phase aims to establish a fully formal benchmark.
