New research suggests that artificial intelligence (AI) could transform digital pricing by replacing a single public price with multiple personalized offers for the same product. The shift raises significant ethical questions about fairness, because consumers may not know whether they are being charged more than others for the same item.

Dr. Miroslava Marinova of the University of East London (UEL) points out that online platforms can now move beyond general market signals toward prices tailored to each individual’s purchasing behavior and willingness to pay. As a result, a single product can be sold at several prices that buyers never see side by side, which makes it harder for consumers to judge whether a transaction is fair.

The study examines how this “hidden checkout math” works in today’s online marketplaces, where different buyers may be shown different prices for identical items, sometimes in real time. The pricing algorithms draw on signals such as users’ clicks, locations, and purchase histories, producing individualized prices that can diverge sharply from a transparent market price.

This shift toward algorithmically personalized pricing also raises legal questions. Consumer experiments indicate that people perceive personalized pricing as less fair than segment-based pricing, even when both are based on data. Dr. Marinova notes that once prices become invisible and tailored, fairness takes center stage: trust erodes quickly when consumers suspect they are being subjected to discriminatory pricing.

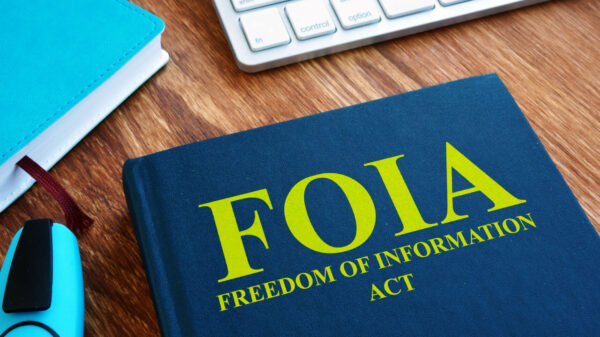

Under European Union competition law, Article 102 of the Treaty on the Functioning of the European Union (TFEU) prohibits dominant firms from imposing unfair selling prices, which makes personalized pricing particularly relevant. The paper argues that hidden personalized pricing can be treated as exploitative, because it uses market power to disadvantage buyers without justification. The concern is greatest in markets where competitive pressure is weak, since market dominance is what allows such practices to persist.

Rather than waiting for AI-specific legislation, the research advocates applying existing competition law to these emerging issues. As pricing algorithms evolve, regulators may struggle to detect patterns without comprehensive system records. Dr. Marinova emphasizes that regulators need to move from abstract concerns about AI to practical measures such as audits and transparency requirements for pricing algorithms.

The findings also matter beyond the EU. Britain’s Competition Act 1998 likewise prohibits abuse of a dominant position, including unfair pricing, and because its wording closely mirrors Article 102, the same concerns about personalized pricing can be raised despite Brexit. A government consultation planned for 2026 aims to strengthen the powers of the Competition and Markets Authority (CMA) to investigate algorithmic practices in both competition and consumer protection, reflecting growing recognition of the challenges AI poses for market oversight.

Price transparency erodes when every shopper sees a slightly different offer. Without a common reference point, consumers can hardly tell whether they are getting a fair deal or being targeted with higher prices. Search tools and comparison sites also become less effective when merchants do not display comparable prices, which weakens competitive pressure, particularly when a single platform controls the purchasing journey from search to checkout.

Not every instance of personalized pricing is inherently abusive; student discounts and loyalty rewards are familiar examples. Concerns arise when pricing is hidden and tailored to individual users, leaving consumers with few means to contest it. In such cases the line between legitimate and exploitative pricing blurs, and personalization shifts from a sales tool into a mechanism for extracting value from consumers without their knowledge.

Effective oversight would require comprehensive records of how prices are set: the data used, when the algorithm was changed, and the rationale behind each price adjustment. Auditors would need the authority to scrutinize inputs and override rules and to evaluate outcomes across different consumer groups. This level of scrutiny could expose discriminatory practices that the complexity of algorithmic pricing systems would otherwise conceal.
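A minimal Python sketch can make the record-keeping and group-comparison idea concrete. It assumes a hypothetical audit-record schema (`PriceDecision`), a simple metric (each offer's markup relative to a reference price) and an arbitrary 5-percentage-point disparity threshold. None of these come from the study, and a real audit would also need statistical testing and a legal assessment of whether any difference is justified.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass(frozen=True)
class PriceDecision:
    """One logged pricing decision (hypothetical audit-record schema)."""
    timestamp: datetime            # when the price was shown to the buyer
    model_version: str             # which version of the pricing algorithm ran
    consumer_group: str            # group label used for outcome comparison
    inputs_used: tuple[str, ...]   # data signals consulted, e.g. ("location",)
    reference_price: float         # baseline or list price for the product
    offered_price: float           # price actually shown to this buyer
    rationale: str                 # recorded reason for the adjustment

    @property
    def markup(self) -> float:
        """Relative deviation from the reference price (0.10 = 10% above)."""
        return self.offered_price / self.reference_price - 1.0


def markup_by_group(log: list[PriceDecision]) -> dict[str, float]:
    """Average relative markup for each consumer group in the log."""
    groups: dict[str, list[float]] = defaultdict(list)
    for decision in log:
        groups[decision.consumer_group].append(decision.markup)
    return {group: mean(values) for group, values in groups.items()}


def flag_disparity(log: list[PriceDecision], threshold: float = 0.05) -> list[str]:
    """Groups whose average markup exceeds the lowest group's by more than threshold."""
    averages = markup_by_group(log)
    floor = min(averages.values())
    return sorted(g for g, m in averages.items() if m - floor > threshold)


# Illustrative, made-up log entries (not data from the study).
t = datetime(2025, 1, 1, 12, 0)
log = [
    PriceDecision(t, "v1", "A", ("history",), 100.0, 100.0, "baseline"),
    PriceDecision(t, "v1", "A", ("history",), 100.0, 102.0, "demand"),
    PriceDecision(t, "v2", "B", ("location", "history"), 100.0, 112.0,
                  "estimated willingness to pay"),
]

print(markup_by_group(log))   # group A averages about 1%, group B about 12%
print(flag_disparity(log))    # ['B']
```

Logging the inputs, model version and rationale alongside each price lets an auditor both trace individual decisions and aggregate outcomes by group. Comparing group averages against the lowest-priced group is only one possible check, chosen here for simplicity; it is not a recognized legal test.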

As AI-driven pricing systems grow more sophisticated, so does the risk that private data will be used to set unfair prices. Stronger regulatory powers, covering clearer disclosures, more robust auditing, and better accountability trails, would not ban personalized pricing but would make unfair targeting easier to identify and prosecute. The study appears in the Journal of Competition Law & Economics and adds to the ongoing debate over the ethical implications of AI in commerce.
