The rise of generative artificial intelligence (AI), marked notably by the introduction of ChatGPT, has ushered in a new era of content creation that brings both opportunities and challenges. A striking example surfaced recently when influencer Jessica Foster shared an image of former U.S. President Donald Trump alongside soccer star Lionel Messi on the platform X (formerly Twitter). Both the image and the influencer herself were generated entirely by AI; neither exists in reality. The episode highlights how difficult it has become to establish authenticity in the digital age.

As AI technologies proliferate, so does the volume of content, with text, images, and videos now generated at unprecedented rates. According to a report from The Guardian, approximately one in ten of the fastest-growing YouTube channels now rely solely on AI-generated videos. On TikTok, more than 1.3 billion AI-generated videos have been uploaded. While AI-generated content can boost creativity and efficiency, its spread raises a critical question: how can we determine where a given piece of content came from?

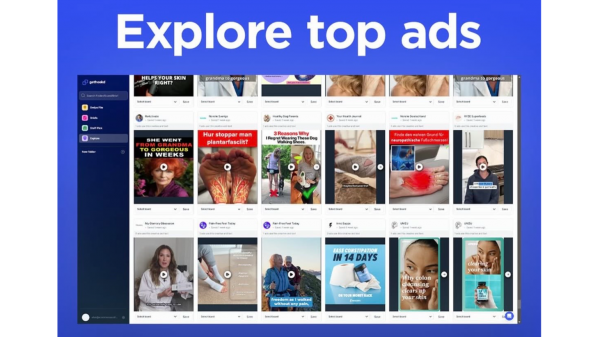

This situation has renewed attention on the value of human creativity, particularly as traditional creative fields such as writing and film production become increasingly susceptible to automation. Various certifications have emerged in the U.S. and U.K. to distinguish human-made work from AI-generated output, with labels such as “AI-Free” and “Human-Made.” Tech giants are also investing heavily in technologies that can either detect AI-generated content or maintain a verifiable record of how a piece of content was produced and modified.

Previously, the emphasis was primarily on the quality of the output, regardless of the tools used. Now, the ability to recognize and evaluate AI-generated results has become a competitive advantage. The task is fraught with challenges, however. AI detection methods often lag behind the techniques used to evade them. When the final product takes an analog form, such as a printed page, establishing whether AI was involved can be nearly impossible unless the creator discloses it.

Moreover, the very definition of “using AI” is becoming increasingly nebulous. There is no consensus on whether a product should be labeled AI-generated if AI was used only for auxiliary tasks such as grammar correction, translation, or design enhancement. Because creators, platforms, and users lack a unified standard, this ambiguity makes it difficult to build trust in the market.

As creating content with AI becomes routine, the human role is shifting toward verifying AI output and taking responsibility for it. This paradox underscores the importance of human expertise, because effective verification requires knowledge and competence in the relevant field. AI can generate content, but the need for such verification reinforces the enduring value of human judgment and creativity in the digital landscape.

In this evolving context, the path forward will require clearer definitions of what counts as human creation, what level of AI assistance is acceptable, and how transparently creators must disclose their use of AI. Without a collaborative framework involving platforms, creators, and users, trust in digital content is likely to remain fragile.

As we navigate this new frontier, the question remains: can we harness the power of AI while maintaining the integrity of human creativity? The answer to that may define the future of content creation in the years to come.
