Legal AI is navigating a critical juncture as it matures in capability and complexity, according to Michael Krallmann, CEO of TransLegal. While the outputs generated by legal AI tools appear credible, often mirroring the structures and citations familiar to legal professionals, they frequently suffer from significant accuracy issues, particularly in cross-border applications.

In multilingual and multi-jurisdictional contexts, legal errors can be subtle and go unnoticed. These inaccuracies rarely show up as glaring mistakes; instead, they appear as superficially accurate translations or plausible explanations that support dangerously misleading conclusions. The challenge lies in outputs that are almost correct, which can have severe consequences in legal practice.

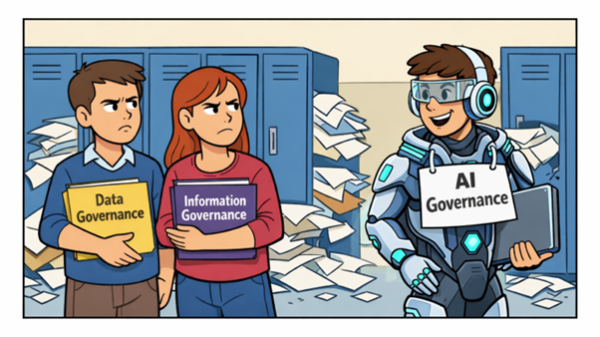

Krallmann discusses what he terms the “equivalence problem,” where foundational AI models excel at generating coherent English-language legal concepts but struggle to adequately align terms from different jurisdictions. The language may flow well, yet a lack of understanding regarding legal (non-)equivalence can obscure significant differences. This issue is exacerbated by the architecture of many legal tech tools, which often rely on extensive general models with minimal adjustments for legal context. Though some tools incorporate local data, this approach fails to resolve the fundamental discrepancies in legal concepts across different systems.

The inadequacy of training data further compounds these problems. Current foundation models are typically trained on a broad array of legal materials whose coverage is uneven, resulting in strong biases favoring specific jurisdictions, languages, and legal traditions. This uneven distribution of training data leaves them ill-equipped to handle cross-border legal concepts accurately. Without access to curated, jurisdiction-specific legal data, these models often fall back on internal assumptions and present confident but flawed outputs.

The implications of these shortcomings are particularly pronounced for cross-border legal teams, where misunderstandings can arise subtly: miscommunications that stem from poorly translated advice, drafting errors that presume nonexistent rights, and compliance assessments that reflect one legal culture while being applied to another. As legal AI systems become increasingly integrated into workflows, from internal research to client-facing applications, the risk of inadvertently relying on inaccurate outputs will likely grow.

Even lawyers, who are trained to critically evaluate legal language, may not recognize these risks, particularly when AI-generated outputs appear to adhere to familiar frameworks. As a result, cross-border liability linked to legal AI usage may rise as adoption increases. Part of the problem is the mistaken belief that fluency in multiple languages equates to effective legal multilingualism. True legal multilingualism goes beyond linguistic translation; it encompasses the construction and application of legal meanings within distinct systems.

Krallmann argues that simply enhancing interface design or employing prompt engineering will not suffice to address cross-border accuracy. Achieving reliable outputs in multilingual legal contexts demands a focused effort on legal terminology, comparative structures, and jurisdiction-specific meanings, guided by human expertise. Organizations that proactively acknowledge these challenges stand to gain a competitive edge by understanding the true sources of legal risk in AI-assisted workflows.

For those reliant on legal outputs spanning languages and jurisdictions, it is crucial to assess whether the systems generating these outputs can accurately explain potential pitfalls or uncertainties. This awareness is often the first step toward mitigating risks that tend to remain obscured.

TransLegal is working on this issue, developing human-curated legal datasets and AI-driven systems aimed at enhancing performance in multilingual and multi-jurisdictional legal environments. Its focus on expert-led data creation and quality assurance seeks to foster more accurate and accountable legal AI systems. For organizations deploying legal AI in cross-border contexts, evaluating how jurisdictional accuracy is managed in existing systems is paramount.

As the landscape of legal AI continues to evolve, understanding these nuances will be essential for mitigating risks associated with its use, ensuring that the technology serves as a reliable tool in the legal field.
