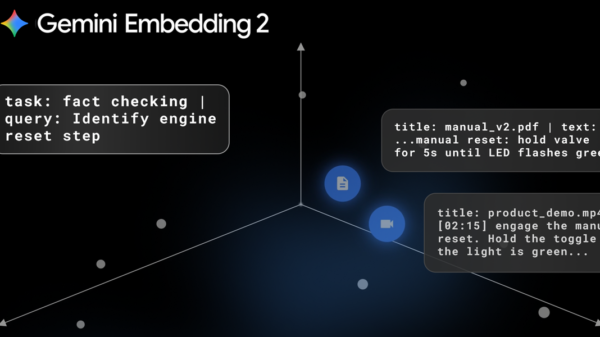

Google has launched the public preview of Gemini Embedding 2, its first natively multimodal embedding model, for developers using the Gemini API and Vertex AI. The model generates embeddings for text, images, video, audio, and documents within a single shared embedding space, so retrieval and classification can work across media types.

Embedding models transform content into numerical vectors so that software can measure how similar two items are. They underpin semantic search, clustering, classification, and Retrieval-Augmented Generation (RAG) workflows, which retrieve relevant material from large data stores. Gemini Embedding 2 builds on Google’s earlier text-only models, extending them to multiple modalities and capturing semantic intent in more than 100 languages.
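To make "measuring similarity" concrete, the following is a minimal sketch of cosine similarity, a common way to compare embedding vectors. The four-dimensional vectors are made-up stand-ins for real embeddings, and the article does not say which similarity measure Google recommends for this model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

# Toy vectors invented for illustration; real embeddings have thousands of dimensions.
query = [0.9, 0.1, 0.0, 0.2]
doc_close = [0.8, 0.2, 0.1, 0.3]
doc_far = [0.0, 0.9, 0.8, 0.1]

# The semantically closer document should score higher.
print(cosine_similarity(query, doc_close) > cosine_similarity(query, doc_far))
```

Because all modalities share one embedding space, the same comparison would apply whether the two vectors came from a text query and a video clip or from two documents.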

Gemini Embedding 2 supports a range of input types. Text inputs can be up to 8,192 tokens long, and a single request can include up to six images in PNG or JPEG format. Video inputs can run up to 120 seconds in MP4 or MOV format. Audio can be embedded directly, with no prior transcription. The model also accepts documents, embedding PDFs of up to six pages, which is particularly useful for organizations that store unstructured content such as reports or manuals.
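A client might check these limits before sending a request. The sketch below is purely illustrative: `EmbedRequest` and `limit_violations` are hypothetical names, not part of any Google SDK, and the numbers are the ones reported in this article rather than values read from official documentation.

```python
from dataclasses import dataclass

# Limits as reported in the article; verify against official docs before relying on them.
MAX_TEXT_TOKENS = 8192
MAX_IMAGES = 6
MAX_VIDEO_SECONDS = 120
MAX_PDF_PAGES = 6

@dataclass
class EmbedRequest:
    """Hypothetical summary of one request's inputs (not an SDK type)."""
    text_tokens: int = 0
    image_count: int = 0
    video_seconds: float = 0.0
    pdf_pages: int = 0

def limit_violations(req):
    """Return a list of human-readable problems; an empty list means the request fits."""
    problems = []
    if req.text_tokens > MAX_TEXT_TOKENS:
        problems.append(f"text has {req.text_tokens} tokens (max {MAX_TEXT_TOKENS})")
    if req.image_count > MAX_IMAGES:
        problems.append(f"{req.image_count} images (max {MAX_IMAGES})")
    if req.video_seconds > MAX_VIDEO_SECONDS:
        problems.append(f"video is {req.video_seconds}s (max {MAX_VIDEO_SECONDS}s)")
    if req.pdf_pages > MAX_PDF_PAGES:
        problems.append(f"PDF has {req.pdf_pages} pages (max {MAX_PDF_PAGES})")
    return problems

print(limit_violations(EmbedRequest(text_tokens=500, image_count=7, pdf_pages=10)))
```

Content that exceeds a limit, such as a long manual, would need to be split into chunks and embedded piece by piece.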

Beyond single-modality inputs, Gemini Embedding 2 lets developers submit mixed inputs, such as interleaved text and images, and returns a single embedding that represents the combined content. This can simplify pipelines that have traditionally required a separate model for each media type. A typical workflow today might transcribe audio or extract keyframes from video before combining the results; Gemini Embedding 2 aims to remove those preprocessing steps.

Gemini Embedding 2 uses Matryoshka Representation Learning, a training technique that concentrates the most important information in the leading dimensions of a vector, so embeddings can be shortened with limited loss of quality. The default output dimension is 3,072, but developers can scale this down to cut storage and compute costs, trading some quality for efficiency. Recommended dimensions are 3,072, 1,536, and 768; smaller vectors shrink vector database indexes and lower the cost of similarity queries.
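The sketch below illustrates the general idea behind shortening a Matryoshka-style embedding, keeping the leading dimensions and rescaling to unit length, along with the storage arithmetic. It is an assumption-laden illustration: the article does not say whether shortening should happen client-side or by requesting a smaller size from the API, nor whether reduced-size vectors are already normalized. The 4-bytes-per-value figure assumes 32-bit floats.

```python
import math

def truncate_embedding(vec, dim):
    """Keep the first `dim` values and rescale the result to unit length."""
    if not 0 < dim <= len(vec):
        raise ValueError("dim must be between 1 and len(vec)")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in head]

def index_bytes(num_vectors, dim, bytes_per_value=4):
    """Raw storage for the vectors alone, assuming 32-bit floats by default."""
    return num_vectors * dim * bytes_per_value

# Storage for one million vectors at each dimension mentioned in the article.
for dim in (3072, 1536, 768):
    gigabytes = index_bytes(1_000_000, dim) / 1e9
    print(f"{dim} dims: {gigabytes:.3f} GB")
```

Going from 3,072 to 768 dimensions cuts raw vector storage by a factor of four; real indexes add overhead on top of this, so the figures are a lower bound.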

Google positions Gemini Embedding 2 as a significant advance over its earlier models, asserting that it sets a new benchmark for multimodal embedding depth. The company highlights the model’s speech capabilities in particular, alongside its text, image, and video performance. The release strengthens Google’s position in a market increasingly focused on mixed-media search and analytics, as companies try to manage and query internal knowledge repositories that include everything from training videos to recorded meetings.

Gemini Embedding 2 is now available in public preview through Google’s Gemini API and Vertex AI. Developers can also use the model through integrations with frameworks and vector databases, including LangChain, LlamaIndex, Haystack, Weaviate, Qdrant, ChromaDB, and Vector Search. These integrations matter because embedding models usually sit behind vector indexes that store embeddings for large corpora and serve nearest-neighbor searches for applications such as enterprise search and customer support.
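As a rough illustration of what a vector index does, the sketch below runs a brute-force nearest-neighbor search over a tiny in-memory dictionary. The item names and three-dimensional vectors are invented for the example, and the function names are hypothetical; production vector databases use approximate search structures rather than scanning every vector.

```python
import heapq
import math

def normalize(vec):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]

def top_k(query, index, k=3):
    """Return the k (score, item_id) pairs most similar to the query, best first."""
    q = normalize(query)
    scored = (
        (sum(a * b for a, b in zip(q, normalize(vec))), item_id)
        for item_id, vec in index.items()
    )
    return heapq.nlargest(k, scored)

# Toy index mixing media types; in a shared embedding space they are directly comparable.
index = {
    "manual.pdf": [0.9, 0.1, 0.0],
    "training.mp4": [0.1, 0.9, 0.1],
    "meeting.wav": [0.0, 0.2, 0.9],
}
query = [1.0, 0.2, 0.0]

for score, item_id in top_k(query, index, k=2):
    print(f"{item_id}: {score:.3f}")
```

The scan is linear in the number of stored vectors, which is why larger corpora rely on dedicated indexes and why smaller embedding dimensions reduce query cost.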

Google has not disclosed which organizations are using the model or how they are deploying it, but early-access partners are reportedly using Gemini Embedding 2 for multimodal applications. A product note from the Google DeepMind team said the model is intended to help developers build efficient retrieval and classification systems across diverse data sources. “We can’t wait to see what you build,” remarked a Google representative.
