South Africa’s Draft National Artificial Intelligence Policy, published in the Government Gazette on April 10, aims to position the country within both global and African frameworks. It sets out a vision of self-reliance, with South Africa depending less on foreign technology, and recognizes the critical need for equitable access to technology. However, it does not sufficiently address the lack of a robust foundation for digital inclusion. This dual focus is both the document’s strength and its weakness.

Notably, the policy aligns South Africa’s approach with global best practices, including the emerging AI management standard, ISO 42001, and the widely recognized NIST AI Risk Management Framework. It emphasizes the necessity of achieving a “fair balance between policy-level oversight and organizational-level compliance” to foster safe and transparent adoption of AI technologies.

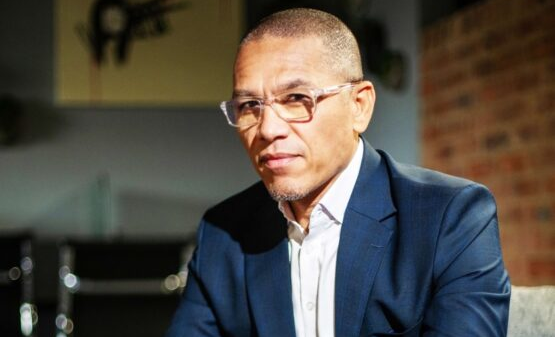

Shortly after the policy’s release, global workforce software company Workday convened a media conference in Dublin, Ireland, to discuss the regulatory challenges surrounding AI. Workday’s AI management system is certified to ISO 42001 and verified in alignment with the NIST framework. During a panel discussion titled “Globally compliant, locally relevant,” Workday’s chief corporate affairs officer, Chandler Morse, expressed support for South Africa’s approach.

“We have long thought that smart, workable governmental safeguards around AI would go a long way to building trust and supporting innovation,” Morse stated. He explained that there has been a historical tension between regulation and innovation, but the current narrative emphasizes the potential for innovation to stem from well-structured regulation. Trust, he asserted, must be a foundational element of any AI technology.

The conference conveyed a strong message that companies like Workday do not require government officials to dictate responsible AI development. However, this does not imply that governments should disengage from the conversation. Morse remarked, “We have our own values to drive that. We have our own customer relationships to drive that. But we do see the benefit of a regulatory envelope to put AI in that gives those who will be on the receiving end of AI tools some sense that their data is protected, their rights are protected, and they have some sort of transparency and explainability.”

In light of this, Workday has been actively engaging with European Union policymakers regarding the much-delayed EU Artificial Intelligence Act. Morse noted, “We have views on what we think an AI regulatory framework looks like. Sometimes that aligns with what governments want to do, and frankly, sometimes it doesn’t.” This dynamic creates a “push and pull” as Workday navigates between proactive engagement and moderating certain regulatory proposals.

When asked how global AI platforms manage the balance between standardization and local regulatory requirements outside the EU, Morse indicated that convergence is more prevalent than divergence. He highlighted that the EU AI Act serves as an early framework, while the U.S. has initiated the NIST framework. “There was a knee-jerk reaction of everyone needing their own EU AI Act,” he said, noting that this perspective has evolved over the past year. Many countries, including South Africa, Brazil, and Canada, often find themselves in a middle ground regarding AI policy.

“Not unlike Europe, many of these same countries have data protection regulations or laws,” Morse added. The OECD is also playing a role in fostering dialogue among nations, encouraging them to innovate while adhering to certain baseline standards. Morse expressed optimism about the trend toward harmonization, stating that the situation has improved compared to a year prior.

Pierre Gousset, vice president for solutions at Workday, reinforced the idea that aiming for high standards is beneficial. “It’s better to design for a higher bar. So, if you meet the European standards, that means that you’ll be able to meet more or less all of the available standards in the world,” he said. This approach aligns with Workday’s commitment to not only comply with existing regulations but also to set benchmarks for responsible AI innovation.

As global discussions around AI regulation evolve, South Africa’s policy initiative highlights the necessity of balancing innovation with ethical considerations. By aligning itself with international frameworks while addressing local needs, South Africa aims to carve a distinctive path in the AI landscape, potentially serving as a model for other nations striving for digital equity.
