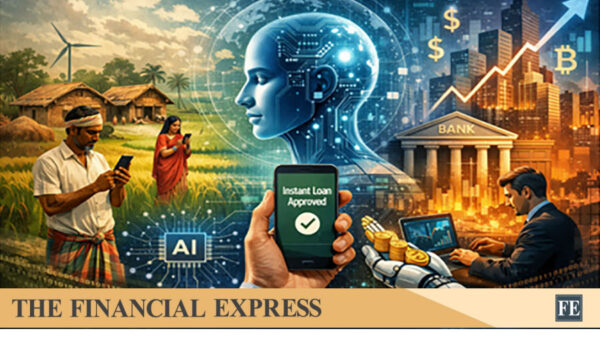

A recent survey revealed that millions of Americans are turning to AI chatbots for medical advice, frequently opting for these automated systems over consulting human doctors. This trend persists despite ongoing research highlighting significant flaws in large language model (LLM)-based tools, which claim to summarize medical records and provide health guidance based on simple text prompts.

One of the most pressing issues with these AI systems is hallucination: models report clinical findings for images they have never seen, or respond as if fictitious diseases, invented by researchers to test their reliability, were real. Given these concerns, it is hardly surprising that experts are questioning the viability of AI adoption in healthcare settings, especially in light of the often inadequate evidence supporting its real-world benefits.

A critical editorial published in the medical journal Nature Medicine argues that “evidence that AI tools create value for patients, providers or health systems remains scarce.” The editorial points out that while claims about the clinical impact of AI tools are becoming more common in publications and product materials, there is no consensus on the level of evidence needed for such claims to be deemed credible. This gap between claims and agreed standards of evidence raises significant concerns about premature adoption and implementation of these technologies.

AI tools may perform well under controlled experimental conditions, yet they struggle in practical applications. A recent study in the journal JAMA Medicine found that when faced with ambiguous symptoms, advanced AI models misdiagnosed patients more than 80% of the time. The challenges surrounding AI’s use in clinical research are similarly complex. While LLMs excel in summarizing and analyzing data, researchers caution against overestimating their capabilities.

“I think that AI can help speed up many of the processes that are tedious and challenging,” said Jamie Robertson, an assistant professor of surgery at Harvard Medical School. “It can help us come up with code to do data analysis and even suggest scenarios.” However, she emphasized the necessity for individuals interacting with AI systems in clinical settings to understand their appropriate applications and limitations.

Experts warn that over-reliance on AI could undermine scientific rigor, raising concerns about the spread of generalized, and potentially fabricated, data in the medical field. A striking example was demonstrated by Almira Osmanovic Thunström, a researcher at the University of Gothenburg, who uploaded two fictitious studies to a preprint server, successfully convincing large language models that a non-existent skin condition was real. Peer-reviewed journals went on to cite these now-retracted preprints, raising serious questions about research validity.

The Nature Medicine editorial calls for establishing a framework to evaluate AI medical technologies based on clear metrics and benchmarks, citing an urgent need for such standards. It warns that without a clear connection between claims and evidence, the adoption of medical AI risks outpacing the understanding of its actual value.

The relationship between AI and healthcare continues to evolve, with the potential for these technologies to transform how medical practices operate. However, the current challenges must be addressed to ensure that AI can deliver on its promises without compromising patient safety or scientific integrity. As researchers and healthcare providers navigate this landscape, the demand for transparency and rigorous evaluation will be crucial in determining the future role of AI in medicine.
