Google has entered into an agreement with the United States Department of Defense that allows its artificial intelligence models to be used for classified government work, according to a report by The Information. The deal permits the Pentagon to use Google’s AI systems for “any lawful government purpose,” placing the company alongside firms such as OpenAI and xAI, which also have agreements covering classified AI applications.

Classified military networks support sensitive operations, including mission planning and weapons targeting. In 2025, the Pentagon signed AI-related agreements valued at up to $200 million each with several companies, including Google, OpenAI, and Anthropic. According to the report, Google’s agreement contains provisions that let the government request modifications to the company’s AI safety settings and filters.

The contract reportedly stipulates that the AI systems must not be used for domestic mass surveillance or for autonomous weapon systems that lack human oversight. However, it also states that Google cannot veto lawful government operational decisions. The Pentagon declined to comment on the contract.

Google has expressed its commitment to supporting both classified and non-classified government projects, emphasizing its opposition to the use of AI for domestic mass surveillance or autonomous weaponry without human control. However, the agreement has raised concerns among employees, with more than 600 Google workers signing an open letter to CEO Sundar Pichai, urging the company to refrain from making its AI systems available for classified military workloads.

The workers said they feared the technology could be used in harmful or unethical ways. The issue has caused internal conflict at Google before. In 2018, employee protests led the company not to renew its involvement in Project Maven, a Pentagon initiative that used AI to analyze drone surveillance footage.

In a notable shift, last year, Google’s parent company, Alphabet, removed language from its AI ethics guidelines that had prohibited engagement in technologies likely to result in harm. AI chief Demis Hassabis stated that AI had become crucial for national security, indicating a broader acceptance of the technology’s role in governmental applications.

The evolving landscape of AI technologies for military use highlights a complex interplay between innovation, ethics, and national security considerations. As the U.S. government increasingly integrates AI systems into its operations, the implications for privacy, accountability, and safety continue to provoke debate within the tech community and beyond.

See also AI Technology Enhances Road Safety in U.S. Cities

AI Technology Enhances Road Safety in U.S. Cities China Enforces New Rules Mandating Labeling of AI-Generated Content Starting Next Year

China Enforces New Rules Mandating Labeling of AI-Generated Content Starting Next Year AI-Generated Video of Indian Army Official Criticizing Modi’s Policies Debunked as Fake

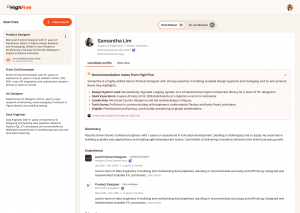

AI-Generated Video of Indian Army Official Criticizing Modi’s Policies Debunked as Fake JobSphere Launches AI Career Assistant, Reducing Costs by 89% with Multilingual Support

JobSphere Launches AI Career Assistant, Reducing Costs by 89% with Multilingual Support Australia Mandates AI Training for 185,000 Public Servants to Enhance Service Delivery

Australia Mandates AI Training for 185,000 Public Servants to Enhance Service Delivery