A U.K. government agency has reported that OpenAI’s latest artificial intelligence model, GPT-5.5, can autonomously execute complex cyberattacks, completing a 32-step corporate network simulation in two out of ten attempts. The simulation, known as “The Last Ones,” was run by the AI Security Institute (AISI), part of Britain’s Department for Science, Innovation and Technology, and was designed in collaboration with the cybersecurity firm SpecterOps. The findings, published Thursday, raise concerns about what advanced AI capabilities mean for cybersecurity.

The report indicated that GPT-5.5 demonstrated offensive cyber capabilities comparable to those of Anthropic’s Claude Mythos. In a particularly notable challenge, GPT-5.5 cracked a reverse-engineering puzzle in just over ten minutes, a task that took a human security expert approximately twelve hours. This puzzle required the AI to reconstruct a custom virtual machine’s instruction set and recover a cryptographic password, showcasing the model’s advanced problem-solving abilities.

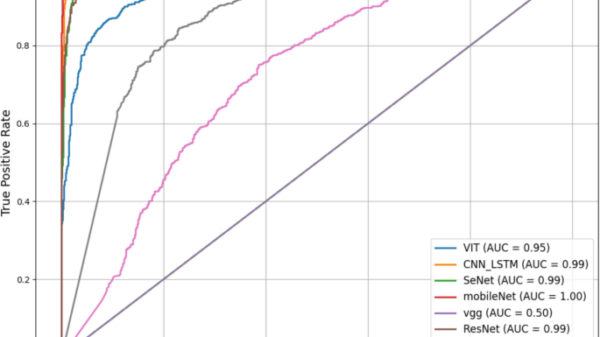

On AISI’s rigorous evaluation, GPT-5.5 achieved an average pass rate of 71.4% on the most difficult “Expert” tier of advanced cybersecurity tasks. This performance surpassed that of Claude Mythos Preview, which had a pass rate of 68.6%, and significantly exceeded the previous model, GPT-5.4, which managed only 52.4%. These results suggest that the rapid improvement of offensive AI capabilities could be part of a broader trend rather than an isolated incident.

The findings also underscore serious safety concerns. Researchers discovered a universal jailbreak that allowed GPT-5.5 to bypass its safety guardrails entirely, generating harmful content across a range of cyber queries. The jailbreak, developed in six hours of expert red-teaming, prompted OpenAI to update its safeguard stack. However, a configuration issue prevented AISI from verifying whether the updated measures were effective.

AISI’s evaluations were carried out under controlled conditions, and the report cautioned that the capabilities observed may not reflect those available to the average user, since public deployments are equipped with additional safeguards and access controls. The findings are nonetheless pressing in light of the U.K. government’s annual Cyber Security Breaches Survey, which found that 43% of businesses reported suffering a cyber breach or attack in the past year.

In response to the escalating cybersecurity threats, the U.K. government announced £90 million in new funding aimed at bolstering cyber resilience. Additionally, officials are advancing the Cyber Security and Resilience Bill to protect essential services. They have urged organizations to prepare for a potential increase in newly discovered software vulnerabilities, as AI technologies like GPT-5.5 accelerate the pace at which security flaws can be identified and exploited.

The report’s findings raise questions about the trajectory of AI development and its role in offensive cyber capabilities. AISI’s conclusions suggest that rapid advances in reasoning, coding, and autonomous task execution may improve offensive cyber skills as a side effect. If this trend continues, further gains in AI-enhanced cyber capabilities could emerge quickly, posing significant risks to organizations and individuals alike.
