Elon Musk has ignited debate within the artificial intelligence community once again, targeting Anthropic, the company behind the chatbot Claude. Responding to news about Anthropic’s updated “constitution” for Claude, Musk claimed that AI companies inevitably evolve into their antithesis. In a post on X, he suggested that “any given AI company is destined to become the opposite of its name,” implying that Anthropic would ultimately become “Misanthropic.” This remark raises questions about the company’s professed dedication to creating AI systems aligned with human values and safety.

The exchange unfolded after Anthropic announced an updated constitution for Claude, a document intended to delineate the principles, values, and behavioral boundaries the AI should follow. The update was shared online by Amanda Askell, a member of Anthropic’s technical team, who responded to Musk’s comment with humor, expressing hope that the company could “break the curse.” She also noted that it would be challenging to justify naming an AI company something like “EvilAI.”

Musk’s comments drew additional attention because he is the founder of xAI, a startup that competes with Anthropic in the same AI market. The interaction highlighted the intensifying rivalry and the philosophical differences that characterize the sector. As companies race to develop and deploy AI technologies, the ethical implications of their choices loom large.

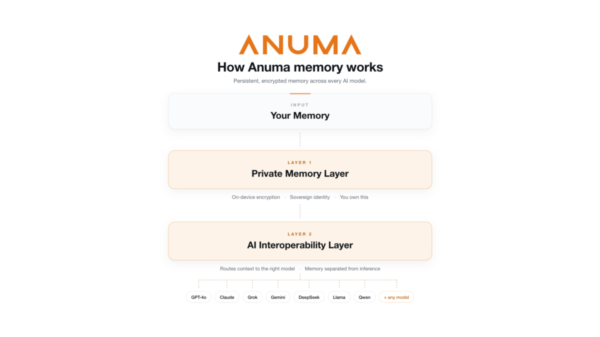

Anthropic emphasizes that Claude’s constitution serves as a foundational guide outlining what the AI represents and how it should behave. The document details the values Claude is expected to uphold and the rationale behind them, aiming to balance effectiveness with safety, ethics, and compliance with company policies. The constitution is primarily intended for the AI itself, guiding it on handling complex scenarios such as maintaining honesty while being considerate or safeguarding sensitive information. It also plays a crucial role in training future iterations of Claude, aiding in the generation of example conversations and rankings that help ensure newer models respond in accordance with these principles.

In its latest update, Anthropic identifies four core priorities for Claude: being broadly safe, acting ethically, adhering to company rules, and remaining genuinely helpful to users. In instances where these goals conflict, the AI is instructed to prioritize them in that sequence. This structured approach aims to mitigate risks while maximizing the utility of AI technologies, particularly as they become more integrated into daily life.

Musk’s brief comment has reignited a broader discussion about a challenge facing the AI industry: whether companies can consistently uphold their ethical frameworks as their technologies evolve and they compete within a rapidly expanding market. As AI applications proliferate in sectors ranging from healthcare to finance, the need for robust ethical standards becomes increasingly apparent.

The engagement between Musk and Anthropic serves as a reminder that while technology advances, the principles guiding its development and application must remain paramount. As AI continues to shape industries and societies, the commitment to align technology with human values will be a critical factor in determining its acceptance and success. With the landscape continually shifting, the future of AI may hinge on how well companies manage to embody the ideals they profess.

