Engineers at the University of Pennsylvania have developed a novel approach to solving complex scientific problems with artificial intelligence. Their method, termed “Mollifier Layers,” targets inverse partial differential equations (inverse PDEs), a class of problems central to fields such as weather modeling, biology, and materials science. The findings, which will be presented at NeurIPS 2026, represent a departure from traditional AI strategies that prioritize model size and computational power.

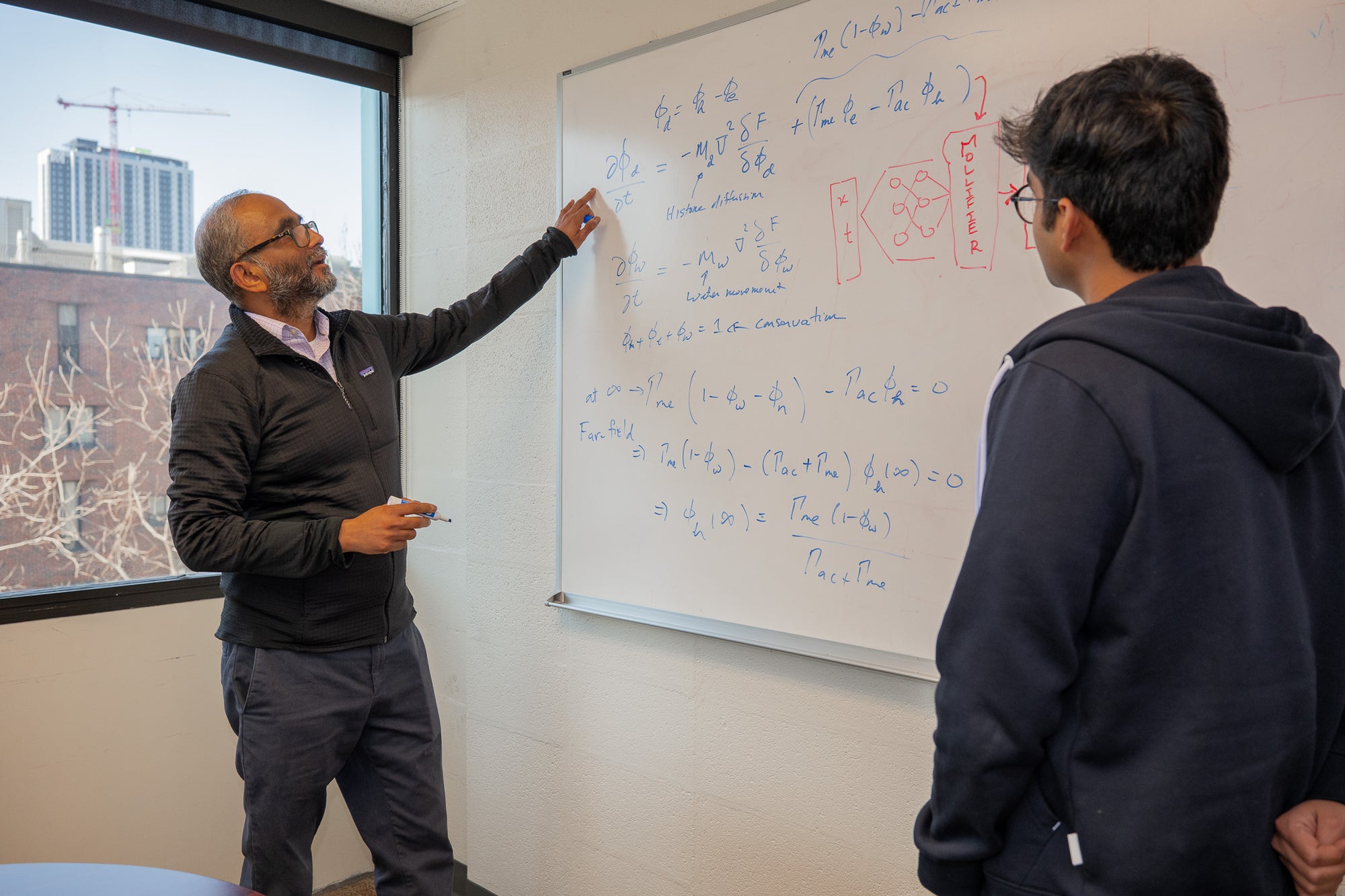

Inverse PDEs pose a significant challenge for researchers as they require working backward from observable outcomes to deduce the underlying causes. Vivek Shenoy, the senior author of the research and the Eduardo D. Glandt President’s Distinguished Professor in Materials Science and Engineering, illustrates this by comparing it to deciphering the cause of ripples in a pond. “You can see the effects clearly, but the real challenge is inferring the hidden cause,” he notes.

Partial differential equations are pivotal in understanding dynamic systems, helping scientists track changes such as heat movement, population growth, or chemical processes. Inverse problems, however, flip the conventional approach: they start with observations rather than known rules, which complicates the inference of hidden parameters or dynamics. “We could see the structures and model their formation, but we could not reliably infer the epigenetic processes driving this system,” Shenoy explains, referring to his lab's studies on chromatin, the folded state of DNA.
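To make the forward/inverse distinction concrete, consider heat spreading along a rod. This is a textbook illustration chosen here for clarity, not one of the systems reported in the study, and the symbols $u$ (temperature) and $\kappa$ (diffusivity) are assumptions of this sketch:

$$
\frac{\partial u}{\partial t} = \kappa \, \frac{\partial^2 u}{\partial x^2}
$$

The forward problem takes $\kappa$ and an initial temperature profile as given and computes $u(x, t)$. The inverse problem takes noisy measurements of $u(x, t)$ as given and asks for the $\kappa$ that best explains them. Because $\kappa$ multiplies a second derivative of the measured field, any error in estimating that derivative carries directly into the inferred parameter.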

The core issue lies in differentiation, the mathematical operation that measures change. Traditional AI systems calculate derivatives through recursive automatic differentiation, which can become inefficient and unstable, particularly with noisy data or higher-order derivatives. The Penn researchers found that the computational burden grew as the systems became more complex. In one benchmark comparison, memory use surged from 0.21 gigabytes to 2.70 gigabytes, a roughly 13-fold increase, when tackling a fourth-order reaction-diffusion problem using both data and PDE loss.

Realizing that traditional neural network design was not the primary barrier, the team pivoted to mollifiers, a mathematical tool dating back to the 1940s. Mollifiers smooth jagged or noisy functions, enhancing the stability of derivative calculations. Their innovative approach involves inserting a mollifier layer that first smooths the signal and then computes derivatives through fixed convolution-based operations. This adjustment alleviates the computational strain associated with recursive calculations and leads to more stable derivative estimates.
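The idea of differentiating through a fixed convolution can be sketched in a few lines. The example below is a minimal illustration, not the authors' implementation: it assumes a Gaussian as the smoothing kernel, a uniform 1D grid, and a noisy sine wave as test data, and the function names `gaussian_kernels` and `convolve_interior` are hypothetical. It relies on the standard identity that the derivative of a smoothed signal equals the signal convolved with the derivative of the kernel, so the derivative weights can be computed once and reused.

```python
import math
import random

def gaussian_kernels(width, step):
    """Return (smoothing weights, first-derivative weights) on a fixed grid.

    Assumption: a Gaussian stands in for the mollifier kernel here.
    """
    half = math.ceil(4 * width / step)            # truncate at about 4 widths
    offsets = [k * step for k in range(-half, half + 1)]
    raw = [math.exp(-0.5 * (s / width) ** 2) for s in offsets]
    norm = sum(raw) * step                        # kernel integrates to 1
    smooth = [v / norm for v in raw]
    # Analytic derivative of the normalized Gaussian: -s / width^2 * kernel(s)
    deriv = [-s / width ** 2 * v for s, v in zip(offsets, smooth)]
    return smooth, deriv

def convolve_interior(signal, weights, step):
    """Discrete convolution, evaluated only where the kernel fits entirely."""
    half = len(weights) // 2
    out = []
    for i in range(half, len(signal) - half):
        out.append(step * sum(w * signal[i - (k - half)]
                              for k, w in enumerate(weights)))
    return out

random.seed(0)
step, width = 0.01, 0.2
xs = [i * step for i in range(629)]               # roughly [0, 2*pi]
noisy = [math.sin(x) + random.gauss(0.0, 0.01) for x in xs]

_, deriv_kernel = gaussian_kernels(width, step)
half = len(deriv_kernel) // 2
interior = range(half, len(xs) - half)

# Derivative via one fixed convolution with the kernel's derivative.
mollified = convolve_interior(noisy, deriv_kernel, step)
# Baseline: central finite difference applied directly to the noisy samples.
central = [(noisy[i + 1] - noisy[i - 1]) / (2 * step) for i in interior]
truth = [math.cos(xs[i]) for i in interior]

err_mollified = max(abs(a - b) for a, b in zip(mollified, truth))
err_central = max(abs(a - b) for a, b in zip(central, truth))
print(f"max error, mollified derivative: {err_mollified:.3f}")
print(f"max error, central difference:   {err_central:.3f}")
```

In this toy setting the convolution-based estimate stays close to the true derivative, while differencing the raw samples amplifies the noise. The baseline here is a finite difference rather than automatic differentiation through a network, so the comparison illustrates only the noise-smoothing effect, not the memory savings described above.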

The team tested the mollifier-based models on several benchmark problems, including a first-order 1D Langevin equation and a fourth-order 2D reaction-diffusion system. In the Langevin case, the mollified physics-informed neural network (PINN) achieved a temporal correlation of 0.97, compared with only 0.36 for the standard PINN. Memory consumption and training time also fell substantially across all tested systems.

This advancement has implications that extend beyond mathematical modeling. For Shenoy’s lab, enhanced inference capabilities in chromatin structure can lead to a deeper understanding of gene expression, which is critical for areas such as cancer research and cellular aging. By enabling researchers to track how reaction rates evolve over time, the method opens pathways to potential new therapies that could influence how cells respond to various conditions.

The versatility of inverse PDEs means the Penn researchers’ framework could find applications across various scientific disciplines, from fluid mechanics to genetics. The authors suggest that the principles demonstrated could even enhance forward models and operator learning in artificial intelligence.

While the mollifier method offers promising solutions, it is not without limitations. The performance relies on the choice of mollifier kernel, which must strike a balance between suppressing noise and preserving essential features. Future research aims to explore adaptive kernels and boundary-aware formulations to enhance the method’s applicability.
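The trade-off in kernel choice can be seen in a small experiment. The sketch below is an illustration under stated assumptions, not a reproduction of the paper's setup: it uses a Gaussian kernel, two arbitrary widths (0.02 and 0.3), a synthetic sharp bump as the "essential feature," and independent Gaussian noise. The helper names `mollify` and `rms` are hypothetical. Because smoothing is linear, the feature and the noise are smoothed separately so that each effect can be measured on its own.

```python
import math
import random

def mollify(signal, width, step):
    """Smooth a uniformly sampled signal with a truncated Gaussian kernel.

    Returns values only at interior points where the kernel fits entirely.
    """
    half = math.ceil(4 * width / step)
    raw = [math.exp(-0.5 * (k * step / width) ** 2)
           for k in range(-half, half + 1)]
    total = sum(raw)
    weights = [v / total for v in raw]            # discrete weights sum to 1
    return [
        sum(w * signal[i + k - half] for k, w in enumerate(weights))
        for i in range(half, len(signal) - half)
    ]

def rms(values):
    """Root-mean-square amplitude of a sequence."""
    return math.sqrt(sum(v * v for v in values) / len(values))

random.seed(1)
step = 0.01
xs = [-3.0 + i * step for i in range(601)]
feature = [math.exp(-0.5 * (x / 0.1) ** 2) for x in xs]   # sharp bump, height 1
noise = [random.gauss(0.0, 0.1) for _ in xs]

results = {}
for width in (0.02, 0.3):
    peak = max(mollify(feature, width, step))     # how much of the bump survives
    residual = rms(mollify(noise, width, step))   # how much noise survives
    results[width] = (peak, residual)
    print(f"width={width}: peak kept={peak:.2f}, residual noise rms={residual:.3f}")
```

The narrow kernel preserves most of the bump but leaves more noise behind, while the wide kernel suppresses the noise and flattens the bump along with it. An adaptive kernel of the kind the authors mention as future work would, in principle, vary this width with the data rather than fixing it in advance.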

This research signifies a step forward in the quest for efficient methods to extract hidden rules from complex scientific data. By improving the accuracy of parameter inference in noisy environments, it underscores the potential for AI to transform observational data into actionable insights, ultimately enabling scientists to decipher the intricate rules governing various systems. As Shenoy concludes, “If you understand the rules that govern a system, you now have the possibility of changing it.”
