Since its inception in 1991, arXiv has served as a critical platform for scientists and researchers to share their discoveries with the academic community without waiting on the often lengthy peer review process. This preprint repository has allowed scholars to announce their findings with minimal delay, acting as a bridge between initial discovery and formal validation. However, the rise of artificial intelligence, particularly tools like ChatGPT, is presenting unprecedented challenges to arXiv’s integrity, raising concerns about the quality and credibility of the research being submitted.

According to a recent analysis cited in The Atlantic, Paul Ginsparg, the creator of arXiv and a professor at Cornell University, has expressed alarm over the potential for AI misuse in academic submissions. The study revealed that researchers employing large language models (LLMs) to generate or augment their papers were submitting 33 percent more work than those who did not utilize AI. This surge in submissions has led to fears that the barriers designed to maintain quality in academic publishing are being eroded.

The analysis highlighted that while AI can be beneficial in overcoming language barriers and enhancing accessibility, it also complicates traditional indicators of research quality. As Ginsparg noted, “traditional signals of scientific quality such as language complexity are becoming unreliable indicators of merit.” This situation creates a paradox where the volume of scientific output is on the rise, yet the criteria for assessing its validity are becoming increasingly blurred.

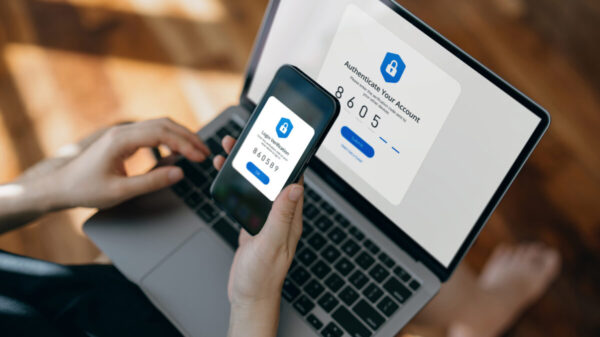

The issue extends beyond arXiv to the broader landscape of academic research. A recent incident reported in Nature detailed the mishaps of Marcel Bucher, a scientist in Germany who relied heavily on ChatGPT for various academic tasks, including generating emails and analyzing student responses. Bucher suffered a significant setback when he attempted to disable a data consent feature and lost two years’ worth of academic work stored exclusively on OpenAI’s servers. His account in Nature underscores the risks of over-reliance on AI in academia.

The swelling tide of AI-generated submissions raises alarms about the reliability of scholarly research, with implications reaching far beyond individual cases. As noted in The Atlantic, the growing volume of AI-assisted publications appears to have brought a proliferation of subpar research. In fields such as cancer research, fraudulent papers can be generated that mimic legitimate studies. Such papers threaten the integrity of scientific discourse, especially when seemingly credible claims are made without robust validation.

Moreover, the lure of AI-generated content may lead to a decline in scholarly rigor. Academic pressure to publish rapidly can incentivize shortcuts, resulting in the release of inadequate or misleading findings. As AI tools become more sophisticated, the challenge will be to ensure that the integrity of academic work is not sacrificed at the altar of expediency.

The ramifications of this trend are significant. If unchecked, the quality of research published in esteemed journals and repositories like arXiv could deteriorate, threatening the foundations of knowledge that these platforms represent. The scientific community must respond proactively, emphasizing the importance of diligence and critical evaluation in the face of emerging technologies.

The path forward requires a concerted effort from academics, peer reviewers, and repository moderators to uphold the standards that have historically defined rigorous scholarship. As the landscape of research evolves, the stakes are high, and the responsibility falls on all involved to ensure that the pursuit of knowledge remains uncompromised. The question remains: will the academic community rise to the challenge of maintaining integrity in an age increasingly influenced by AI?
