New research from the University of Warwick raises concerns about the reliability of popular deep learning systems used in cancer pathology, suggesting they may rely on superficial cues rather than authentic biological signals. Published in Nature Biomedical Engineering, the study highlights potential pitfalls in AI models trained to predict cancer biology from microscope images, which are being increasingly adopted for faster and cheaper diagnostics.

Dr. Fayyaz Minhas, an Associate Professor and principal investigator at the Predictive Systems in Biomedicine (PRISM) Lab, emphasizes that many AI pathology models depend on statistical “shortcuts” rather than genuine understanding. “It’s a bit like judging a restaurant’s quality by the queue of people waiting to get in: it’s a useful shortcut, but it’s not a direct measure of what’s happening in the kitchen,” he explains.

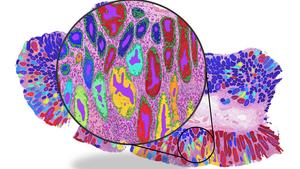

To arrive at their findings, researchers analyzed over 8,000 patient samples across four major cancer types—breast, colorectal, lung, and endometrial—and compared the efficacy of existing machine learning approaches. While the models often reported high accuracy, the underlying mechanics indicated a reliance on misleading correlations.

For instance, a model might learn that mutations in the cancer-associated BRAF gene frequently occur alongside another feature, microsatellite instability (MSI). Instead of accurately detecting BRAF mutations, the model might merely associate BRAF status with the presence of MSI, leading to unreliable predictions in different patient contexts.

Kim Branson, SVP Global Head of Artificial Intelligence and Machine Learning at GSK and a co-author of the study, likens this approach to predicting rain by observing umbrellas: “It works, but it doesn’t mean you understand meteorology. If a model cannot demonstrate information gain above a simple pathologist-assigned grade, we haven’t advanced the field; we’ve just automated a shortcut.”

When the AI models were evaluated within specific patient groups, such as high-grade breast cancers or MSI-positive tumors, their accuracy diminished significantly, underscoring the dependence on shortcut signals that fail when confounding factors are controlled.
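The subgroup check described above can be illustrated with a small synthetic sketch. It is not the study's code or data. The prevalence figures, the noise level, and the names `simulate`, `auroc`, and `stratified_auroc` are all assumptions made for illustration. A hypothetical "shortcut" model scores each case using only its MSI status, and its ranking performance (AUROC) is then measured on the whole cohort and again within each MSI stratum.

```python
import random


def auroc(labels, scores):
    """Chance that a random positive case outscores a random negative one (ties count as 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def simulate(n=2000, seed=0):
    """Synthetic cohort of (msi, braf, score) rows; all rates here are illustrative assumptions."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        msi = 1 if rng.random() < 0.3 else 0
        # Assumed confounding: the target mutation is far more common in MSI-positive cases.
        braf = 1 if rng.random() < (0.6 if msi else 0.05) else 0
        # Shortcut model: the score tracks MSI plus noise and never looks at BRAF itself.
        score = msi + rng.gauss(0.0, 0.3)
        rows.append((msi, braf, score))
    return rows


def stratified_auroc(rows):
    """Return the overall AUROC and the AUROC within each MSI stratum."""
    overall = auroc([b for _, b, _ in rows], [s for _, _, s in rows])
    by_stratum = {}
    for level in (0, 1):
        subset = [r for r in rows if r[0] == level]
        by_stratum[level] = auroc([b for _, b, _ in subset], [s for _, _, s in subset])
    return overall, by_stratum


if __name__ == "__main__":
    overall, by_stratum = stratified_auroc(simulate())
    print(f"overall AUROC: {overall:.2f}")
    for level, value in by_stratum.items():
        print(f"AUROC within MSI={level}: {value:.2f}")
```

In this toy setup the pooled score looks strong because MSI status and the mutation travel together, while the within-stratum scores sit near chance, since the model has no information about the mutation once MSI is held fixed.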

The study found that for certain prediction tasks, the performance advantage of deep learning over traditional clinical information was limited. AI systems managed accuracy scores of just over 80% when predicting biomarkers, compared to approximately 75% using tumor grade alone—a metric already assessed by human pathologists.

Professor Nasir Rajpoot, Director of the Tissue Image Analytics (TIA) Centre at the University of Warwick, stresses that the deployment of AI in medicine must be rigorously validated. “To deliver real and lasting impact, the value of AI-based clinically important predictions must be judged through rigorous, bias-aware evaluation,” he asserts.

While the researchers acknowledge that machine learning can still be beneficial for research and clinical decision support, they advocate for a shift away from correlation-based learning. Future AI tools should explicitly model biological relationships and causal structures. Moreover, they call for stronger evaluation standards that include subgroup testing and comparisons against simple clinical baselines to ensure reliability before routine clinical deployment.

Dr. Minhas concludes, “This research is not a condemnation of AI in pathology. It is a wake-up call. Current models may perform well in controlled settings but rely on statistical shortcuts rather than genuine biological understanding. Until more robust evaluation standards are in place, these tools should not be seen as replacements for molecular testing.”

Co-author Professor Sabine Tejpar, Head of Digestive Oncology at KU Leuven, highlights the need for clinical relevance in novel technologies. “Too often, oncology is swept up by innovation with limited or no impact on patient care, driven more by what can be provided or sold than by rigorous assessment of what is truly relevant for individual patients,” she says.

The findings underline the importance of rigorous validation in developing and deploying AI in pathology. As the field progresses, understanding the limitations of current models and accounting for the complexity of biological signals will be crucial to ensuring that AI applications enhance, rather than jeopardize, patient care.
