The artificial intelligence sector has entered a significant phase of collaboration with government entities following OpenAI’s recent revisions to its contract with the United States Department of Defense. CEO Sam Altman announced these updates, which respond to public criticism and concerns from employees regarding the ethical implications of employing AI in military contexts. The changes aim to establish clearer ethical boundaries, bolster safeguards, and promote responsible deployment of advanced AI technologies.

This development has captured the attention of policymakers, technology investors, and market participants, emphasizing the growing influence of AI partnerships on both innovation and regulation. OpenAI’s relationship with the Pentagon, which dates back to a major government initiative to integrate cutting-edge AI into national defense, underscores the strategic importance of AI in enhancing military operations.

In 2025, the U.S. Department of Defense awarded OpenAI a contract worth up to $200 million to develop AI prototypes tailored for various military applications, including administrative tasks, cybersecurity, and operational support. The partnership has focused primarily on improving operational efficiency rather than replacing human decision-making. Key objectives have included enhancing healthcare systems for military personnel and streamlining logistics analysis to better support military readiness.

However, the initial rollout of the agreement faced considerable scrutiny due to fears over the potential misuse of AI technologies, particularly regarding domestic surveillance and the development of autonomous weapons. In light of this backlash, Altman acknowledged that the initial agreement lacked clarity and transparency, necessitating revisions to ensure that ethical standards were met. The updated contract now explicitly prohibits domestic mass surveillance, clarifies the legal parameters for intelligence agency access, and reinforces ethical guidelines governing the technology’s deployment.

Despite these adjustments, OpenAI’s collaboration with the Pentagon remains robust, with the Defense Department continuing to integrate customized versions of ChatGPT into its enterprise AI platform, known as GenAI.mil. This platform provides secure access to AI tools for approximately 3 million Department personnel, facilitating mission planning and administrative workflows. The functionalities include automated document analysis, coding assistance, data summarization, and operational planning support, while ensuring that sensitive government data remains confidential and isolated from public AI model training.

The revisions to the agreement reflect broader ethical concerns within the AI industry regarding military collaborations. Other companies, such as Anthropic, have opted against similar defense contracts, citing reservations about surveillance and autonomous weapons. Advocacy groups have intensified their criticism, arguing that AI could enable expansive monitoring capabilities if safeguards are insufficient. In response, OpenAI has reinforced its commitment to human oversight and legal compliance as foundational principles guiding AI deployment.

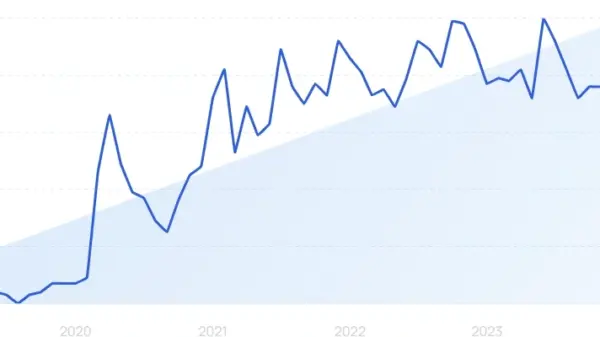

The ramifications of the Pentagon’s revised agreement extend beyond immediate government policy. Investors are closely monitoring defense-related AI partnerships as indicators of future revenue opportunities and technology adoption trends. Such government contracts often serve as validation for emerging technologies, affecting investor sentiment and stock valuations across the broader technology market. Analysts have noted a heightened focus among investors on AI infrastructure providers, alongside increasing institutional investment in enterprise AI platforms.

Recognizing the competitive landscape of artificial intelligence, governments around the world are making substantial investments to enhance their technological capabilities. The Pentagon has emphasized that AI tools are intended to assist rather than replace human decision-makers, aiming to boost operational efficiency while upholding accountability. OpenAI’s partnership reflects a growing trend of private technology firms shaping national security initiatives, with expectations for such collaborations to expand further as AI adoption accelerates globally.

In the wake of these developments, OpenAI has pledged to enhance transparency regarding its defense partnerships. Stronger contractual language has been introduced to outline acceptable use cases and prevent potential misuse of AI technologies. Safeguards include requirements for human oversight in sensitive applications, legal compliance verification prior to deployment, and technical safety controls embedded within AI systems.

Industry experts believe that these new measures could serve as a model for future government AI contracts worldwide. By acting swiftly to revise its agreement, OpenAI aims to address public concerns while continuing to foster innovation in partnership with government entities. The overarching narrative highlights the evolving interplay between AI advancement and public accountability, emphasizing the need for clear ethical boundaries as the technology matures.

As governments increasingly engage with private AI developers, the revised agreement with the Pentagon signifies a pivotal moment in public sector AI adoption, with implications for national security, regulatory frameworks, and technology markets. The ongoing collaboration between OpenAI and the U.S. government underscores the growing significance of artificial intelligence in shaping economic and geopolitical landscapes.
