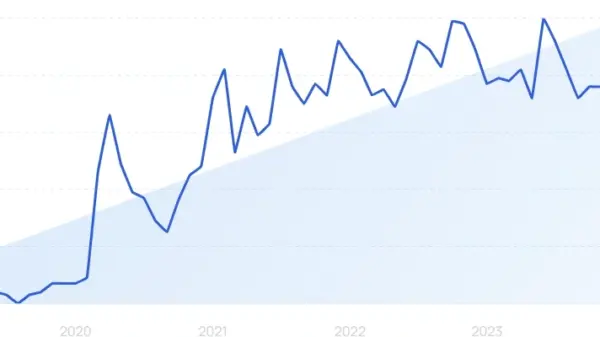

Inception launched its new large language model, Mercury 2, last week. Unlike the autoregressive models used by the major AI labs, Mercury 2 is a diffusion model, as Inception CEO and co-founder Stefano Ermon explained on a recent episode of The New Stack Agents. Inception's pitch is that the approach could change how AI applications are built by making text generation substantially faster and cheaper to serve.

Traditional large language models (LLMs) generate text sequentially, one token at a time from left to right, which Ermon likens to “fancy autocomplete.” Diffusion models instead start from a rough draft of the whole output and refine many tokens in parallel, much as image models like Stable Diffusion turn noise into a coherent image. In Inception’s own testing, Mercury 2 produces more than 1,000 tokens per second, five to ten times faster than the speed-optimized models from industry leaders such as OpenAI, Anthropic, and Google.
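To make the difference between the two decoding styles concrete, here is a toy Python sketch that counts forward passes under each scheme. It is not Inception's algorithm: `toy_predict` is a hypothetical stand-in "model" that already knows the target text, and the diffusion loop follows a simplified masked-diffusion pattern (start fully masked, fill several positions per pass) controlled by a hypothetical `tokens_per_step` parameter.

```python
# Toy illustration only: the "model" already knows the answer, so what matters
# here is how many forward passes each decoding scheme needs, not output quality.
TARGET = "diffusion models refine many tokens at once".split()
MASK = "<mask>"


def toy_predict(seq):
    """Hypothetical stand-in for one forward pass: proposes a token for every
    position. It ignores its input because it already knows TARGET."""
    return list(TARGET)


def autoregressive_decode(n_tokens):
    """Left to right: each forward pass appends exactly one token."""
    out, passes = [], 0
    while len(out) < n_tokens:
        proposal = toy_predict(out)
        passes += 1
        out.append(proposal[len(out)])
    return out, passes


def diffusion_decode(n_tokens, tokens_per_step):
    """Start fully masked; each forward pass fills several masked positions.
    Real samplers usually pick positions by model confidence; this one simply
    takes the first remaining masked positions to keep the count easy to follow."""
    seq, passes = [MASK] * n_tokens, 0
    while MASK in seq:
        proposal = toy_predict(seq)
        passes += 1
        masked = [i for i, tok in enumerate(seq) if tok == MASK]
        for i in masked[:tokens_per_step]:
            seq[i] = proposal[i]
    return seq, passes


ar_out, ar_passes = autoregressive_decode(len(TARGET))
df_out, df_passes = diffusion_decode(len(TARGET), tokens_per_step=3)
print(ar_passes, df_passes)  # 7 3
```

Both loops produce the same seven words, but the left-to-right loop needs one pass per token while the parallel loop needs only as many passes as its refinement schedule allows. Production diffusion LLMs can also revise tokens they have already filled in, which this sketch leaves out.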

Ermon noted, “What we’re seeing is that our Mercury 2 model, which is a reasoning model, is actually able to match the quality of these speed-optimized models from frontier labs OpenAI, Anthropic, Meta, and Google, while being five to ten times faster in terms of, like, the end-to-end latency, how long you need to wait before it gives you an answer.” This capability is particularly significant as the demand for rapid response times in AI applications continues to grow.

Autoregressive models are slower because their decoding is sequential and bound by memory bandwidth: each forward pass must read the model's weights from GPU memory to produce just one token, leaving much of the GPU's compute idle. Diffusion models update many tokens per pass, a form of parallel computation that better matches what modern GPUs are built for. Nvidia, a key investor in Inception, is also helping to optimize Mercury 2’s serving engine.
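A back-of-the-envelope calculation shows why memory bandwidth, rather than arithmetic, limits one-token-at-a-time decoding. The sketch below assumes every forward pass streams all model weights from GPU memory exactly once and ignores compute limits, KV-cache traffic, and batching. The 8-billion-parameter model, 3 TB/s bandwidth, and 256-token/32-pass diffusion schedule are hypothetical numbers chosen for illustration, not Mercury 2's specifications.

```python
def bandwidth_bound_tokens_per_sec(param_count, bytes_per_param,
                                   mem_bandwidth_bytes_per_s, tokens_per_pass):
    """Upper bound on decode speed when each forward pass must read every weight
    from GPU memory once. Ignores compute limits, KV-cache reads, and batching."""
    weight_bytes = param_count * bytes_per_param
    passes_per_sec = mem_bandwidth_bytes_per_s / weight_bytes
    return passes_per_sec * tokens_per_pass


# Hypothetical model and hardware (not Mercury 2's actual figures).
PARAMS = 8e9          # 8B parameters
BYTES_PER_PARAM = 2   # 16-bit weights -> 16 GB to read per pass
BANDWIDTH = 3.0e12    # 3 TB/s of GPU memory bandwidth

# Autoregressive: one new token per forward pass.
ar = bandwidth_bound_tokens_per_sec(PARAMS, BYTES_PER_PARAM, BANDWIDTH, 1)

# Diffusion (hypothetical schedule): a 256-token block refined over 32 passes,
# i.e. 8 tokens finalized per pass on average.
df = bandwidth_bound_tokens_per_sec(PARAMS, BYTES_PER_PARAM, BANDWIDTH, 256 / 32)

print(ar, df)  # 187.5 1500.0
```

Because a diffusion pass processes a whole block at once, it does more arithmetic per pass and can become compute-bound, so the 8x ratio here is a ceiling under these assumptions rather than a prediction.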

Ermon, who worked on diffusion models for images at Stanford, was candid about the trade-offs. Mercury 2 matches the quality of Claude Haiku and Google’s Gemini Flash-class models, but it does not yet reach the level of Claude Opus or OpenAI’s GPT-4. Ermon argues, however, that the economic advantages of diffusion will become more compelling as models scale. He pointed to reinforcement learning, a technique foundational to today’s reasoning models: because reinforcement learning requires generating large numbers of sample outputs, it is often bottlenecked by inference speed, and a faster architecture such as diffusion eases that bottleneck.

For now, Inception is the only company offering a production-grade diffusion LLM; Google’s text diffusion model is still labeled “experimental.” Mercury 2 is available through an OpenAI-compatible API, and availability on AWS Bedrock is expected soon.
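“OpenAI-compatible” means a client can send the same request shape it would send to OpenAI’s Chat Completions endpoint, changing only the base URL, API key, and model name. The minimal standard-library sketch below builds such a request without sending it. The base URL and the `mercury-2` model identifier are hypothetical placeholders; the real values would come from Inception’s documentation.

```python
import json
import urllib.request

# Hypothetical placeholders: substitute the endpoint and model ID from the provider's docs.
BASE_URL = "https://api.example.com/v1"
MODEL = "mercury-2"


def build_chat_request(base_url, api_key, model, prompt):
    """Build (but do not send) a POST to an OpenAI-style /chat/completions route."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


req = build_chat_request(BASE_URL, "YOUR_API_KEY", MODEL,
                         "Summarize diffusion LLMs in one sentence.")
# To send it: json.load(urllib.request.urlopen(req)); in the OpenAI response
# format the reply text is at ["choices"][0]["message"]["content"].
```

The official `openai` Python package can also target compatible services by passing `base_url` and `api_key` to its `OpenAI` client constructor, which avoids hand-building requests.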

If diffusion models keep closing the quality gap with frontier systems, Mercury 2 may signal a broader shift away from the autoregressive approach that has dominated LLM development.
