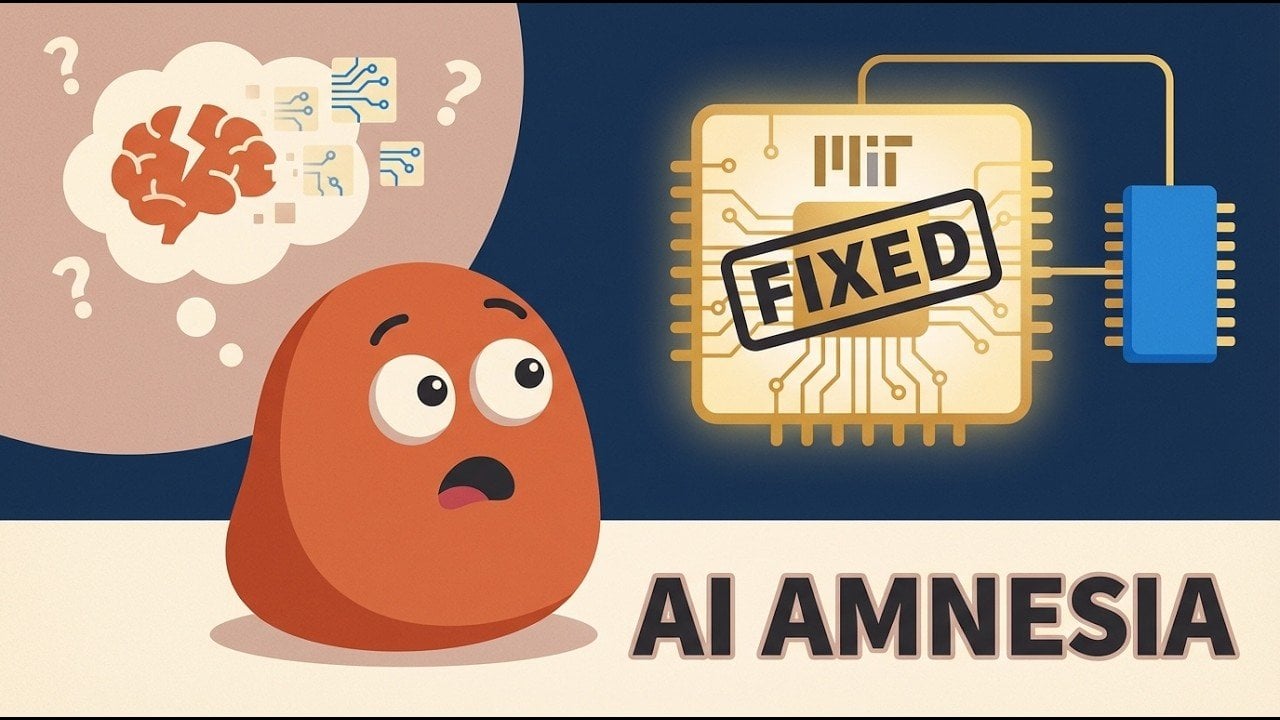

Artificial intelligence has long struggled with catastrophic forgetting, in which learning new tasks causes a model to lose previously acquired knowledge. The problem has serious implications in fields such as medical diagnostics and scientific research, which depend on retaining earlier insights. Researchers at MIT have addressed it with Self-Distillation Fine-Tuning (SDFT), a method designed to mitigate forgetting. By having a single AI model act in distinct “teacher” and “student” roles, SDFT lets the model improve its reasoning abilities while safeguarding prior knowledge, making for a more robust approach to continual learning.

This approach improves knowledge retention and emphasizes the reasoning process over rote memorization. SDFT therefore shows promise in scenarios that require adaptability and long-term learning, where traditional AI training methods struggle. However, the method demands more computation, and its performance varies across tasks.

Catastrophic forgetting remains a critical limitation in conventional AI training, especially in supervised fine-tuning (SFT). When AI models receive updates for new tasks, they frequently overwrite parameters linked to earlier tasks, resulting in the loss of previously learned information. This issue is particularly problematic in sequential learning contexts, where the capacity to retain knowledge over time is vital. For instance, an AI system designed to diagnose medical conditions might lose its ability to recognize earlier diseases when trained with newer diagnostic criteria. This limitation significantly hampers the development of AI systems that can adapt over time, an essential requirement in domains such as healthcare, education, and scientific research.
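The overwriting effect described above can be reproduced on a deliberately tiny example. The sketch below is illustrative only and is not taken from the MIT work: it assumes a one-parameter model `y = w * x`, two made-up tasks (`task_a` and `task_b`) that demand conflicting values of `w`, and plain gradient descent standing in for supervised fine-tuning.

```python
def train(w, data, lr=0.1, epochs=50):
    """Plain SGD on squared error for the one-parameter model y = w * x."""
    for _ in range(epochs):
        for x, y in data:
            grad = 2 * (w * x - y) * x  # d/dw of (w*x - y)^2
            w -= lr * grad
    return w


def mse(w, data):
    """Mean squared error of the model y = w * x on a dataset."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


# Hypothetical tasks: task A wants w = 2, task B wants w = -1.
task_a = [(x, 2.0 * x) for x in (0.5, 1.0, 1.5)]
task_b = [(x, -1.0 * x) for x in (0.5, 1.0, 1.5)]

w = train(0.0, task_a)            # learn task A first
error_a_before = mse(w, task_a)   # close to zero

w = train(w, task_b)              # then fine-tune on task B only
error_a_after = mse(w, task_a)    # large: the single weight was overwritten

print(f"task A error before: {error_a_before:.6f}, after: {error_a_after:.3f}")
```

Because both tasks share the same parameter, fitting task B necessarily destroys the solution for task A. Real networks have many more parameters, but sequential fine-tuning can overwrite shared ones in the same way.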

To combat this challenge, MIT’s SDFT introduces a novel framework that divides a single AI model into two roles: teacher and student. The teacher is responsible for providing demonstrations and guidance based on its existing knowledge, while the student learns from the reasoning style of the teacher and develops its own outputs. This dynamic interaction not only refines the model’s skills but also ensures that previously acquired knowledge is preserved. By focusing on reasoning processes instead of memorization, SDFT enables the model to assimilate new information without sacrificing its existing capabilities.
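One way to picture the teacher and student roles is as two forward passes of the same model: one that sees a demonstration in its context and one that does not. The sketch below is a toy under stated assumptions, not the published SDFT algorithm. The article does not specify the training objective, so a KL divergence between the two output distributions is assumed here; `model_logits`, `demo_bonus`, the three-token vocabulary, and the frozen teacher snapshot are all hypothetical simplifications.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl(p, q):
    """KL(p || q) for two discrete distributions."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def model_logits(params, demo_bonus=None):
    """Hypothetical stand-in for one forward pass of the shared model.

    With a demonstration in context (teacher role), the logits are shifted
    by demo_bonus; without it (student role), the raw parameters are used.
    """
    if demo_bonus is None:
        return list(params)
    return [z + b for z, b in zip(params, demo_bonus)]


params = [1.0, 0.5, 0.0]       # toy "weights": one logit per token
demo_bonus = [0.0, 0.0, 2.0]   # assumed effect of seeing a demonstration

# Teacher role: same parameters, demonstration in context, held fixed.
teacher = softmax(model_logits(params, demo_bonus))
kl_start = kl(teacher, softmax(model_logits(params)))

# Student role: no demonstration; move the weights toward the teacher.
for _ in range(200):
    student = softmax(model_logits(params))
    # Gradient of KL(teacher || student) w.r.t. the student logits.
    params = [z - 0.5 * (s - t) for z, s, t in zip(params, student, teacher)]

kl_end = kl(teacher, softmax(model_logits(params)))
print(f"KL before: {kl_start:.4f}, after: {kl_end:.6f}")
```

The point of the toy is that the training target comes from the model's own demonstration-conditioned output rather than from fixed labels, so the student is pulled toward behavior the model can already produce instead of toward an external answer key.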

Experimental evaluations of SDFT have yielded positive results, particularly on tasks requiring complex reasoning and knowledge retention. Models trained with the method outperformed traditional approaches: they were more accurate where integrating new facts is critical, and they better retained their reasoning capabilities when trained on new datasets. SDFT is not without drawbacks, however. It demands approximately 2.5 times more computational resources than conventional methods, and its effectiveness can depend on factors such as model size and in-context learning ability.

Despite these hurdles, SDFT marks a significant step forward in addressing catastrophic forgetting. Its approach underscores the importance of designing AI systems that can adapt and evolve over time, much as humans do when they learn. Balancing the retention of knowledge with the acquisition of new skills could transform applications in sectors that rely heavily on adaptive AI.

While SDFT is not a panacea, it signals a promising direction for future AI training methodologies. As researchers refine the technique and explore complementary strategies, AI systems that can keep learning in changing environments come closer to reality. For now, SDFT stands as an important milestone in overcoming one of AI’s most persistent challenges, with potential applications in areas like healthcare, education, and scientific research.
