As artificial intelligence (AI) continues to advance, understanding its foundational principles has become increasingly critical for professionals across various industries. In a recent feature, technology journalist Maddy Zhang delves into ten core AI concepts that are shaping the technological landscape in 2026, offering insight into the evolution of AI applications.

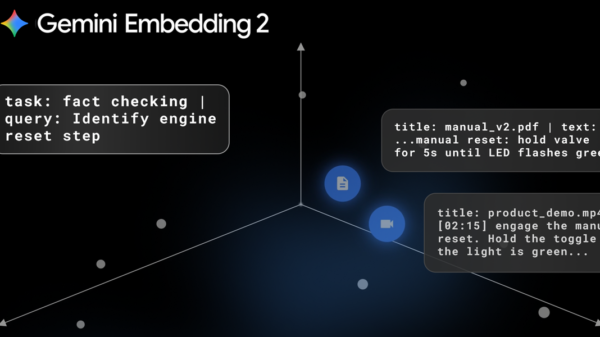

Among the highlighted concepts is Retrieval-Augmented Generation (RAG), a hybrid approach that retrieves relevant documents from an external data source and supplies them to the model as context for its response. Grounding answers in retrieved material improves accuracy and contextual relevance, and it lets a model draw on information that was not part of its training data. RAG is particularly beneficial in sectors like healthcare and legal services, where precision is essential for effective outcomes.
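
To make the retrieve-then-generate idea concrete, here is a minimal sketch, not a production design. It assumes a tiny in-memory document list and a crude word-overlap score in place of a real vector database; the names `DOCS`, `score`, `retrieve`, and `build_prompt` are hypothetical, and the final call to a language model is left out.

```python
import re
from collections import Counter

# Hypothetical knowledge base; a real system would use a search index or vector store.
DOCS = [
    "Aspirin can reduce fever and mild pain.",
    "A contract requires offer, acceptance, and consideration.",
    "The context window limits how many tokens a model reads.",
]

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def score(query, doc):
    """Count the words shared by query and document (a stand-in for embedding similarity)."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    return sum(min(q[w], d[w]) for w in q)

def retrieve(query, docs, k=2):
    """Return the k documents with the highest overlap score."""
    return sorted(docs, key=lambda doc: score(query, doc), reverse=True)[:k]

def build_prompt(query, docs, k=2):
    """Place the retrieved passages ahead of the question so the model can ground its answer."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )

prompt = build_prompt("What does a contract require?", DOCS, k=1)
```

The resulting `prompt` would then be sent to whichever model the application uses; the retrieval step is what keeps the answer tied to the stored documents.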

Zhang’s overview also emphasizes the importance of understanding how tokens and context windows influence an AI model’s capability to process information. Additionally, the role of multimodal AI is explored, showcasing its ability to integrate various data types such as text, images, and audio. Insights into practical applications of reasoning models and fine-tuning techniques further illustrate how AI systems can adapt to specialized needs, enhancing their utility across diverse fields.

Large Language Models (LLMs) are a focal point in this discussion. These advanced neural networks, trained on extensive text datasets, enable a wide array of capabilities including reasoning, summarization, and coding. Models like GPT-4 exemplify the versatility of LLMs, which are increasingly integral to solving complex problems in industries ranging from customer service to content creation. However, the effectiveness of LLMs depends heavily on the diversity and quality of their training data, which means models need ongoing updates to remain relevant.

Tokens are the units in which AI models process text, typically words or fragments of words. A model’s context window is the maximum number of tokens it can consider at once; for example, a model with a 4,000-token context window can take in roughly 3,000 English words in a single pass by a common rule of thumb, so longer documents must be split or condensed before they can be summarized. Research is ongoing to expand these context windows, thereby enhancing AI’s capacity to tackle complex tasks in areas like legal analysis and academic research.
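
As a rough illustration of how a context window constrains input, the sketch below splits a long text into pieces that each fit a fixed token budget. It assumes whitespace-separated words as a stand-in for tokens, which real tokenizers do not match exactly; the function name `split_into_windows` is hypothetical.

```python
def split_into_windows(text, max_tokens=4000):
    """Split text into consecutive chunks of at most max_tokens pseudo-tokens.

    Assumption: one whitespace-separated word counts as one token.
    Real tokenizers often split words into several tokens.
    """
    words = text.split()
    return [
        " ".join(words[i:i + max_tokens])
        for i in range(0, len(words), max_tokens)
    ]

# A 9,000-word document does not fit a 4,000-token window in one pass.
document = "word " * 9000
windows = split_into_windows(document, max_tokens=4000)
window_sizes = [len(w.split()) for w in windows]
```

Each chunk can then be processed separately, for instance summarized one at a time, which is one common way to work around a limited window.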

Autonomous systems known as AI agents have emerged as pivotal players in this evolving landscape. Unlike conventional chatbots that follow predefined scripts, AI agents possess the ability to reason, plan, and execute tasks autonomously. Their applications span industries such as supply chain management and financial analysis, making them essential for automating workflows and decision-making processes. As their capabilities continue to expand, AI agents are expected to transform the way businesses operate.
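
The reason, plan, and execute cycle can be sketched as a simple loop. In this toy version a hand-written `plan` function stands in for the model that would normally choose the next step, and the two supply-chain tools (`check_stock`, `place_order`) and the state fields are hypothetical.

```python
def check_stock(state):
    """Tool: observe the current inventory level."""
    state["stock_known"] = True
    return f"stock level is {state['stock']}"

def place_order(state):
    """Tool: act on the environment by reordering."""
    state["stock"] += state["reorder_qty"]
    return f"ordered {state['reorder_qty']} units"

TOOLS = {"check_stock": check_stock, "place_order": place_order}

def plan(state):
    """Choose the next action, or None when the goal is met.

    Assumption: a real agent would ask a language model to make this decision.
    """
    if not state.get("stock_known"):
        return "check_stock"
    if state["stock"] < state["threshold"]:
        return "place_order"
    return None

def run_agent(state, max_steps=5):
    """Repeat plan -> act until the planner stops or the step limit is reached."""
    log = []
    for _ in range(max_steps):
        action = plan(state)
        if action is None:
            break
        log.append((action, TOOLS[action](state)))
    return log

inventory = {"stock": 3, "threshold": 10, "reorder_qty": 20}
history = run_agent(inventory)
```

The step limit is a deliberate design choice: it keeps the loop from running indefinitely if the planner never reports that the goal is met.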

Another significant aspect of Zhang’s analysis is the Model Context Protocol (MCP), a standardized framework that facilitates seamless connections between AI models and external tools, databases, and APIs. This interoperability allows AI systems to access real-time data, enabling them to execute more complex tasks effectively. As AI adoption grows, the MCP framework is anticipated to become increasingly vital for enterprise-level AI systems.
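
For a sense of what such a connection looks like on the wire, the sketch below builds the kind of message a client might send to ask a server to run a tool. MCP messages follow JSON-RPC 2.0, and tool invocations use the `tools/call` method; the tool name `get_inventory` and its arguments are hypothetical, and transport, initialization, and error handling are omitted.

```python
import json

def make_tool_call(request_id, tool_name, arguments):
    """Serialize a JSON-RPC 2.0 request asking a server to invoke one tool."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)

# Hypothetical tool and arguments, for illustration only.
request = make_tool_call(1, "get_inventory", {"warehouse": "east"})
decoded = json.loads(request)
```

Because every tool is called through the same message shape, a model-facing client does not need custom integration code for each external system.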

Fine-tuning continues the training of a pre-trained AI model on a specialized dataset, allowing developers to customize model behavior to meet specific requirements. This adaptability is particularly valuable in fields such as healthcare, where a general-purpose language model might be fine-tuned to understand medical terminology. Such targeted adjustments can adapt existing models to niche applications without training a new model from scratch.
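
The core mechanic, starting from existing weights and continuing training on new data, can be shown with a deliberately tiny model. This sketch assumes a one-variable linear model in place of a language model; the "pre-trained" weights, the `domain_data` points, and the learning-rate settings are all made up for illustration.

```python
def mse(w, b, data):
    """Mean squared error of the linear model y = w * x + b on the data."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def fine_tune(w, b, data, lr=0.05, steps=500):
    """Continue gradient descent from the given weights on the new dataset."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical "pre-trained" weights, learned earlier on general data.
pretrained_w, pretrained_b = 1.0, 0.0

# Hypothetical specialized dataset that follows y = 2x + 1.
domain_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

loss_before = mse(pretrained_w, pretrained_b, domain_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, domain_data)
loss_after = mse(tuned_w, tuned_b, domain_data)
```

Real fine-tuning adjusts millions or billions of parameters rather than two, but the pattern is the same: the model keeps what it already learned as a starting point and is nudged toward the specialized data.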

Context engineering is another important consideration, involving the design of the information environment around AI models to optimize performance. By structuring input data and prompts effectively, developers can guide AI models to generate more relevant and coherent responses. This is especially crucial in applications that demand high precision, such as legal document analysis or scientific research.

Reasoning models, designed to think step-by-step, significantly enhance AI systems’ ability to solve complex problems. This capability is particularly useful in scenarios requiring logical reasoning, such as mathematical problem-solving and strategic decision-making. As these models become more sophisticated, they are likely to play an increasingly critical role in educational contexts and other domains requiring analytical thinking.

Multimodal AI represents another transformative development in the field, as models can now process and generate multiple data types, including text, images, audio, and video. This innovation facilitates a broader range of applications, from creating interactive virtual assistants to analyzing medical imaging. By integrating diverse forms of data, multimodal AI can build a fuller picture of complex scenarios, making it a valuable asset in industries like healthcare and entertainment.

The Mixture of Experts (MoE) architecture further illustrates the efficiency of modern AI design. This approach routes each input to a small number of specialized sub-networks within a larger model, so only part of the model is active at any time, balancing performance with computational cost. As AI systems continue to grow in complexity, MoE is expected to emerge as a key strategy for optimizing functionality while managing resources effectively.
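
The routing idea can be sketched in a few lines. This toy version assumes four hand-written functions as the experts and takes the router's scores as a given list; in a real model both the experts and the router are learned neural networks, and the names `moe_forward`, `EXPERTS`, and `gate_scores` are hypothetical.

```python
import math

def softmax(xs):
    """Convert raw scores into weights that sum to 1."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, experts, gate_scores, top_k=2):
    """Run only the top_k highest-scoring experts and blend their outputs."""
    ranked = sorted(range(len(experts)), key=lambda i: gate_scores[i], reverse=True)
    chosen = ranked[:top_k]
    weights = softmax([gate_scores[i] for i in chosen])
    output = sum(w * experts[i](x) for w, i in zip(weights, chosen))
    return output, chosen

# Hypothetical experts; each stands in for a specialized sub-network.
EXPERTS = [
    lambda x: x * 2,
    lambda x: x + 10,
    lambda x: -x,
    lambda x: x ** 2,
]

# Hypothetical router scores for this input; normally computed from x by a learned gate.
gate_scores = [0.1, 2.0, -1.0, 2.0]

output, chosen = moe_forward(3, EXPERTS, gate_scores, top_k=2)
```

With `top_k=2`, only two of the four experts run for this input, which is where the compute savings come from: the model's total capacity grows with the number of experts, while the cost per input depends only on how many are selected.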

By understanding these ten foundational concepts, stakeholders in technology and related fields can better navigate the complexities of AI development and its future implications. From large language models to the integration of multimodal data, each concept contributes to advancing AI’s capabilities, highlighting the ongoing evolution of this transformative technology.
