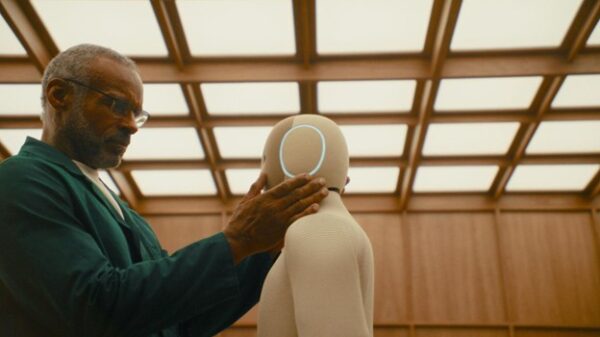

Australia is at a crucial juncture in its approach to regulating artificial intelligence (AI), following the government’s recent decision to abandon plans for an AI advisory body. This move has drawn criticism from prominent AI researcher Toby Walsh, who warned of the potential risks young people face without adequate regulatory measures in place.

In lieu of direct oversight, Australia’s National AI Plan emphasizes investments in data centers and communication infrastructure, the establishment of an ‘AI Safety Institute,’ and some transparency initiatives. However, experts point out that the current legal frameworks are inadequate for addressing the complexities and semi-autonomous characteristics of AI systems, presenting significant challenges for effective regulation.

Globally, nations are adopting varied strategies toward AI oversight. The European Union is advancing its AI Act to mitigate the potential misuse of AI technologies, while the United States and the United Kingdom continue to grapple with the lack of comprehensive regulatory frameworks. Australia’s more cautious, wait-and-see approach may lead to unpredictability in the market, hindering innovation and raising questions about the future of AI regulation in the country.

The absence of an advisory body signals a shift away from proactive governance in favor of a more reactive stance, a decision that some analysts fear could leave Australia lagging behind its global counterparts. As AI increasingly permeates various sectors, the need for robust regulatory structures becomes more urgent. This is particularly pertinent as young people, who are often the most engaged with emerging technologies, could face significant risks in the absence of protective measures.

Investment in an AI Safety Institute aligns with broader initiatives aimed at fostering a safer AI landscape, but industry experts argue that these measures alone may not suffice. They emphasize that without a clear regulatory framework, the potential for misuse and ethical dilemmas remains high. The rapid evolution of AI technologies makes it imperative for regulatory bodies to adapt quickly, yet Australia seems to be adopting a more cautious approach.

This regulatory ambiguity may deter international investment and innovation, as companies seek environments with clearer guidelines. The need for a balanced approach that fosters innovation while ensuring safety is becoming increasingly critical. As the AI sector matures, the Australian government faces mounting pressure to re-evaluate its strategy, particularly amid growing concerns about data privacy and algorithmic bias.

As Australia navigates these complex issues, the impact of its decisions could resonate globally. The country’s regulatory framework will likely serve as a reference point for other nations grappling with similar challenges. In the evolving landscape of AI governance, Australia could emerge as a leader or risk falling behind, which underscores the importance of timely and effective regulatory measures.

Looking ahead, the future of AI regulation in Australia remains uncertain, with the government’s current strategy under scrutiny. Stakeholders from various sectors will be keenly observing how the situation unfolds, as the balance between innovation and regulation continues to be a critical point of discussion. The decisions made today may shape the trajectory of AI development and its societal implications for years to come.

(With inputs from agencies.)
