Cybercriminals, particularly those linked to nation-states like North Korea, are increasingly leveraging advanced AI technologies to enhance the efficiency of their operations, according to Sherrod DeGrippo, Microsoft’s General Manager of Global Threat Intelligence. In a recent interview with The Register, DeGrippo highlighted how AI agents are enabling these malicious actors to offload essential yet mundane tasks in the preparation for cyberattacks.

This functionality includes executing reconnaissance on compromised systems and managing the infrastructure necessary for attacks. While such tasks may not seem as dramatic as hacking into a secure network, they are critical components of modern cybercrime that demand attention from cybersecurity professionals. “Agentic, automated reconnaissance against systems is something that is worth taking a look at,” DeGrippo noted, emphasizing the potential of AI to gather valuable information efficiently. “Go scan the net blocks owned by this particular entity,” she added, illustrating the capabilities that these AI agents offer.

While attackers could perform these reconnaissance tasks manually, AI agents significantly reduce the time and effort involved, which makes them a formidable tool for cybercriminals. DeGrippo remarked, “It’s a great example of AI that can be used for regular, standard business purposes and can also be used by threat actors for malicious purposes.”

In a blog post released on Friday, Microsoft elaborated on how such technologies are allowing criminals to conduct attacks that are larger in scale and more sophisticated than ever before.

Infrastructure management is another area where AI agents prove beneficial. DeGrippo explained that threat actors have long relied on both compromising legitimate infrastructure and establishing their own systems to support their campaigns. “We have always seen threat actors stand up the infrastructure,” she said, referring to this dual approach.

Microsoft’s threat intelligence team has observed North Korean groups, including one known as Coral Sleet, utilizing development platforms to rapidly create and manage their attack infrastructure. This capability enables swift staging, testing, and command-and-control operations for their malicious campaigns. “From an agentic AI use case, this is very interesting because you can talk to your malicious infrastructure with natural language and convey your ideas just by expressing them,” DeGrippo said, underscoring the ease with which malicious operations can be executed.

These advancements not only save attackers time but also open doors for less technically adept criminals, simplifying the creation of undetectable infrastructure. DeGrippo stated, “Threat actors will do what works, and they will do what gets them their objective easiest and fastest.” The availability of powerful AI tools is likely to embolden such activities, making it imperative for cybersecurity measures to evolve accordingly.

While Microsoft and other security researchers have documented the use of agentic AI for generating malware, DeGrippo cautioned that AI-generated code still lags behind code written by human programmers. She also noted that AI-generated malware tends to be identifiable. “When we detect AI-generated or AI-enabled malware, traditionally, we have noticed that it’s different from regular malware,” she explained. These differences can alert cybersecurity experts to the malware's origins.

DeGrippo also highlighted a more advanced use case involving malware that can integrate AI functionalities and libraries. “Anyone developing any kind of code is thinking about how to use an AI assistant to do that,” she asserted, suggesting that developers—whether working on benign applications or malicious software—are increasingly seeking to optimize their workflows with AI tools.

The rise of AI in cybercrime not only complicates defense strategies but also raises broader questions about the future of cybersecurity. As threat actors continue to harness these advanced technologies, the challenge for defenders will be to develop equally sophisticated methods to counteract these evolving threats.

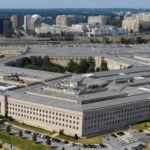
