A recent study published in the journal Machine Learning has shed light on the limitations of self-play artificial intelligence (AI) in game-playing, using the simple game Nim as its test case. Conducted by Dr. Bei Zhou of Imperial College London and Dr. Søren Riis, a Reader in Computer Science at Queen Mary University of London, the research explores how AI agents handle games that have clear rules and optimal strategies, a setting it likens to the “Formula 1” of AI research.

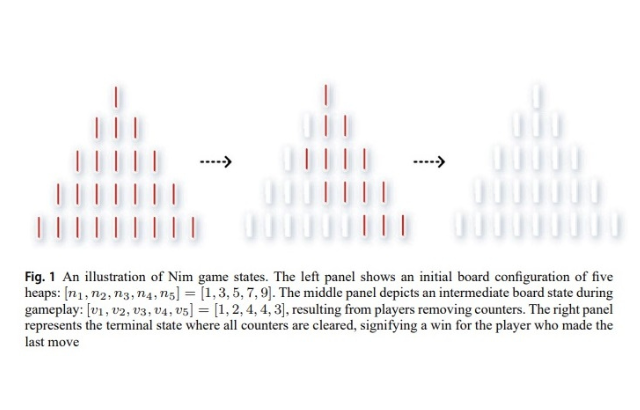

Nim, a classic children’s matchstick game, serves as an effective test case because its winning strategy is fully known. The study reveals that AI agents may perform adequately on smaller boards yet still exhibit significant blind spots, especially as the game grows more complex. Even extensively trained agents can miss optimal moves, and their performance degrades toward the level of random play as the board size expands. This suggests a critical insight: in impartial games like Nim, pure pattern recognition may not be sufficient for achieving optimal play.
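For readers unfamiliar with why Nim counts as solved, the classical strategy can be sketched in a few lines of Python. The sketch assumes the normal-play convention (whoever takes the last object wins), under which the player to move is losing exactly when the bitwise XOR of the heap sizes, known as the nim-sum, is zero. The function names `nim_sum` and `winning_move` are illustrative only and are not taken from the study.

```python
from functools import reduce
from operator import xor


def nim_sum(heaps):
    """Bitwise XOR of all heap sizes (the nim-sum)."""
    return reduce(xor, heaps, 0)


def winning_move(heaps):
    """Return (heap_index, new_size) for a move that leaves nim-sum 0.

    Returns None when the nim-sum is already 0, meaning no winning move
    exists under normal play (assumed here: last player to take wins).
    """
    total = nim_sum(heaps)
    if total == 0:
        return None
    for i, size in enumerate(heaps):
        target = size ^ total
        # A legal move must strictly reduce the chosen heap.
        if target < size:
            return i, target
    return None


if __name__ == "__main__":
    print(winning_move([3, 4, 5]))  # reduce heap 0 so the nim-sum becomes 0
    print(winning_move([1, 2, 3]))  # nim-sum is already 0: no winning move
```

The rule depends on the parity of bits across all heaps at once rather than on any local pattern, which is one way to read the article's point that pattern recognition alone may struggle as the board grows.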

Dr. Riis emphasized the implications of these findings, stating that even in a game with a complete mathematical solution like Nim, self-play algorithms can develop competitive skills while overlooking crucial strategies in various positions. This raises questions about the efficacy of existing reinforcement learning methods, particularly in scenarios where both players operate with shared game pieces and rely on abstract rules for victory.

The study notes that the impressive performance of AI in complex games such as chess and Go does not negate the significance of these findings. Instead, they help to delineate the limitations of current methods and underscore the necessity for more abstract representations or hybrid techniques that could mitigate these blind spots. The results serve as a reminder that while AI systems can excel in common scenarios, they can also be vulnerable in less frequent yet critical situations.

The implications extend beyond Nim, highlighting a broader challenge within AI development. As researchers aim to refine algorithms for more complex and diverse applications, understanding the nuances of game mechanics and the nature of strategy becomes increasingly important. The study advocates for a shift towards methods that capture the underlying structures of games, which may prove essential for advancing AI capabilities.

With the rapid evolution of artificial intelligence, the findings from this research present a valuable perspective for both academics and industry professionals. As self-play AIs continue to surpass human performance in various domains, the need to address their limitations becomes paramount. These insights not only inform future research directions but also encourage the exploration of new methodologies that prioritize a deeper understanding of game mechanics as a pathway to enhanced AI learning.

This research, titled “Impartial Games: A Challenge for Reinforcement Learning,” is a significant contribution to the field, underscoring that the journey toward truly intelligent game-playing systems is far from complete.
