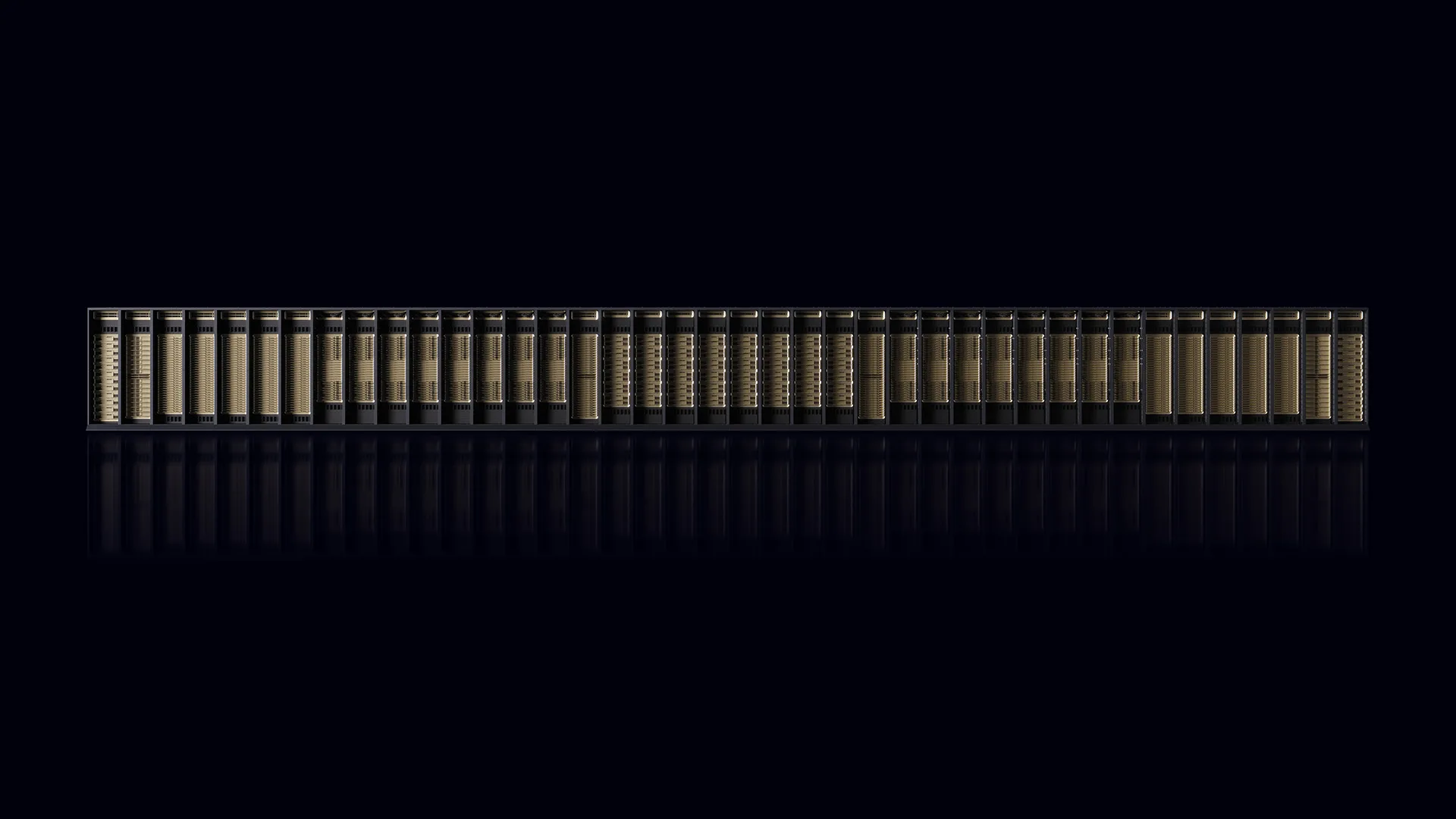

NVIDIA has unveiled the Vera Rubin POD, an AI supercomputer architecture designed for the demands of agentic AI systems. As more AI interactions shift from human-driven to AI-driven, infrastructure that can support that change is becoming critical. The Vera Rubin POD comprises five specialized rack-scale systems built on NVIDIA’s third-generation MGX architecture and is capable of processing over 10 quadrillion tokens per year.

The system spans 40 racks housing 1.2 quadrillion transistors and nearly 20,000 NVIDIA dies, including 1,152 NVIDIA Rubin GPUs. It is designed to deliver 60 exaflops of compute and 10 petabytes per second of total scale-up bandwidth. These capabilities matter for modern AI workloads, which require high throughput, low-latency inference, and massive context memory storage.

At the core of this architecture is the NVIDIA Vera Rubin NVL72 rack, the compute engine of the POD. It combines 72 NVIDIA Rubin GPUs and 36 NVIDIA Vera CPUs, interconnected through an NVLink spine that lets the rack function as a single unified GPU. The NVL72 is designed to serve four scaling laws of AI (pretraining, post-training, test-time scaling, and agentic scaling), delivering up to four times better training performance and ten times better inference performance per watt compared with previous-generation systems.
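The figures quoted so far can be cross-checked with a little arithmetic. The sketch below is only a back-of-the-envelope calculation, not an NVIDIA sizing tool: it assumes that every Rubin GPU in the POD sits in an NVL72 rack and that the yearly token figure is spread evenly over a 365-day year. The variable names are illustrative.

```python
# Figures quoted in the article.
RUBIN_GPUS_IN_POD = 1_152
GPUS_PER_NVL72_RACK = 72
CPUS_PER_NVL72_RACK = 36
TOTAL_RACKS = 40
TOKENS_PER_YEAR = 10 * 10**15  # "over 10 quadrillion", so this is a lower bound

# Assumption: every Rubin GPU in the POD sits in an NVL72 rack.
nvl72_racks = RUBIN_GPUS_IN_POD // GPUS_PER_NVL72_RACK
vera_cpus_in_nvl72_racks = nvl72_racks * CPUS_PER_NVL72_RACK
other_racks = TOTAL_RACKS - nvl72_racks

# Assumption: constant load over a 365-day year.
SECONDS_PER_YEAR = 365 * 24 * 60 * 60
tokens_per_second = TOKENS_PER_YEAR / SECONDS_PER_YEAR

print(f"NVL72 racks:              {nvl72_racks}")
print(f"Vera CPUs in those racks: {vera_cpus_in_nvl72_racks}")
print(f"Racks of other types:     {other_racks}")
print(f"Average tokens/second:    {tokens_per_second:,.0f}")
```

Under those assumptions the POD would contain 16 NVL72 racks with 576 Vera CPUs, leaving 24 racks for the other four system types, and the yearly token figure works out to an average of roughly 317 million tokens per second.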

Complementing the NVL72 rack are dedicated inference accelerator racks, the NVIDIA Groq 3 LPX, each with 256 language processing units (LPUs). The LPUs work in conjunction with the NVL72 to eliminate the trade-off between interactive speed and throughput, achieving up to 35 times more tokens processed and ten times greater revenue opportunities for trillion-parameter models compared to earlier architectures.

To support large-scale reinforcement learning, the NVIDIA Vera CPU rack integrates up to 256 NVIDIA Vera CPUs and can sustain over 22,500 concurrent environments. This setup makes it more efficient to test and validate results generated by the NVL72 and LPX racks, paving the way for large-scale agentic AI applications.

The NVIDIA BlueField-4 STX rack serves as the AI-native storage solution, extending GPU context capacity across the POD by offloading key-value (KV) cache data into a dedicated high-bandwidth storage layer. This allows for a fivefold increase in tokens per second and better power efficiency compared to conventional storage methods.
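The general idea of offloading KV cache data to a second tier can be illustrated with a toy example. The sketch below is not NVIDIA's implementation or API; `TieredKVCache` and its methods are hypothetical names, and plain Python dictionaries stand in for GPU memory and the storage layer. It shows only the mechanism: when the fast tier is full, the least recently used context spills to the larger tier instead of being discarded, so it can be restored later without recomputation.

```python
from collections import OrderedDict


class TieredKVCache:
    """Toy two-tier cache (hypothetical): LRU entries spill from a small
    fast tier to a larger slow tier instead of being dropped."""

    def __init__(self, fast_capacity: int):
        if fast_capacity < 1:
            raise ValueError("fast_capacity must be at least 1")
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # stands in for GPU memory
        self.slow = {}             # stands in for the storage tier

    def put(self, session_id, kv_blob):
        """Store a context in the fast tier, spilling old entries if needed."""
        self.slow.pop(session_id, None)  # avoid keeping a stale copy
        self.fast[session_id] = kv_blob
        self.fast.move_to_end(session_id)  # mark as most recently used
        while len(self.fast) > self.fast_capacity:
            evicted_id, evicted_blob = self.fast.popitem(last=False)
            self.slow[evicted_id] = evicted_blob

    def get(self, session_id):
        """Return a context, promoting it back to the fast tier on a slow hit."""
        if session_id in self.fast:
            self.fast.move_to_end(session_id)
            return self.fast[session_id]
        if session_id in self.slow:
            kv_blob = self.slow.pop(session_id)
            self.put(session_id, kv_blob)
            return kv_blob
        return None  # unknown context: the caller would have to recompute it


cache = TieredKVCache(fast_capacity=2)
for session in ("a", "b", "c"):
    cache.put(session, f"kv-for-{session}")

spilled = sorted(cache.slow)   # contexts that left the fast tier
restored = cache.get("a")      # served from the slow tier and promoted
```

In this toy run, session `a` is spilled when `c` arrives and is later restored from the slow tier, which in turn pushes `b` out of the fast tier.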

For networking, the NVIDIA Spectrum-6 SPX racks connect the entire POD and are engineered to carry both east-west and north-south traffic. A new 102.4 Tb/s switch with integrated silicon photonics improves power efficiency and reduces latency, keeping AI workloads across compute and storage synchronized.

NVIDIA’s MGX architecture aims to streamline the deployment of these complex rack systems, focusing on modular designs that simplify maintenance and enhance reliability. Dynamic power management features ensure that power is efficiently distributed among CPUs and GPUs, maximizing energy efficiency and performance.

The Vera Rubin POD is more than a list of technical specifications; it represents a significant step forward in what AI can be applied to, particularly as the landscape shifts toward more autonomous decision-making. NVIDIA’s ongoing commitment to open standards and partnerships is set to accelerate the development and deployment of AI infrastructure, enabling organizations to use AI efficiently.

With NVIDIA’s GTC 2026 approaching, the company is poised to showcase these innovations further, emphasizing their importance in shaping the future of AI technology and its applications across various sectors.
