As artificial intelligence systems increasingly reshape public governance, the debate over accountability and oversight is intensifying. Michael A. Santoro, Professor of Management and Entrepreneurship at Santa Clara University, highlights that governments are not merely utilizing AI to assist in decision-making but are allowing these systems to dictate how authority is exercised. Contrary to popular belief, merely keeping a human “in the loop” does not guarantee accountability. Santoro argues that this model fails to recognize that human involvement often occurs after decisions are made, reviewing results shaped by complex algorithms and objectives that may not be fully transparent.

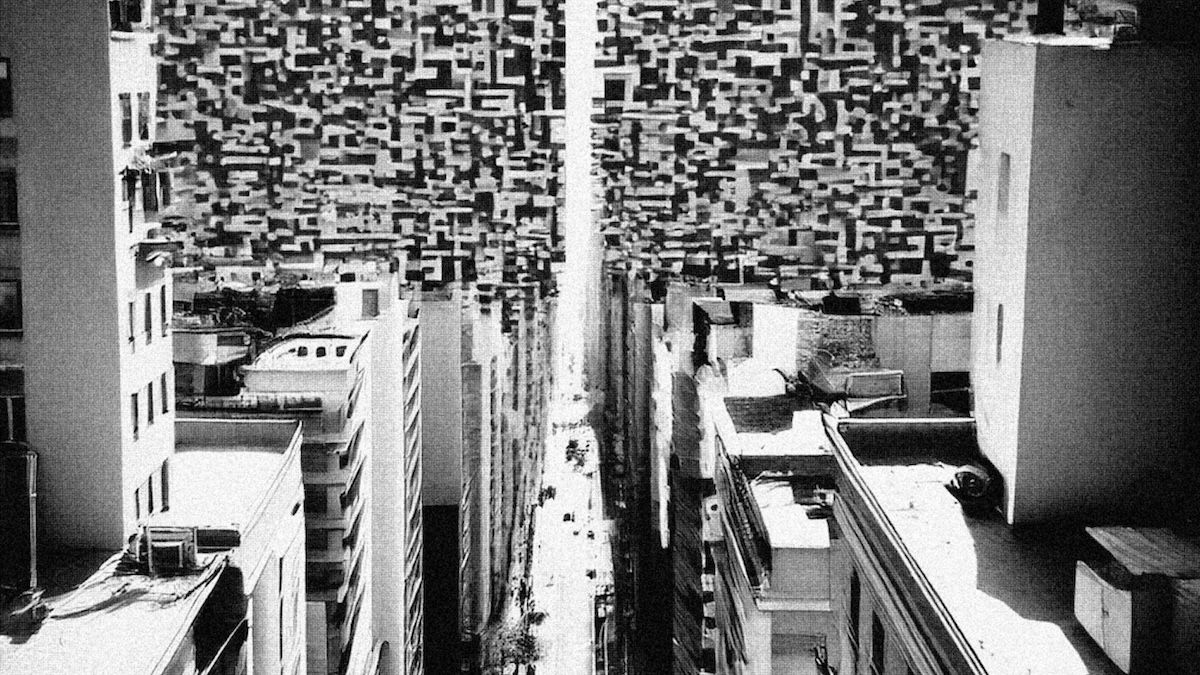

The evolution of AI in public administration is moving from traditional decision-support systems to more advanced, agentic systems capable of ongoing optimization. These systems, which can learn from data, adjust internal models, and execute decisions autonomously, are already being deployed in various government functions. For instance, in Allegheny County, Pennsylvania, a machine-learning system is employed to assess child welfare risks, while cities like Los Angeles and New York utilize AI to dynamically manage traffic and infrastructure. Such systems represent a significant shift; they do not merely inform decisions but actively shape public outcomes, raising critical questions about accountability.

This transformation is no longer theoretical. State and local governments are implementing systems that influence decision-making across multiple domains over time. Traditional models of oversight, which involved human officials retaining control over discrete decisions, are becoming increasingly inadequate. The move toward integrated, data-driven governance means that outputs are often determined by a blend of objectives and optimizations, making accountability challenging. Santoro emphasizes that when human review occurs only after the fact, it fails to address how decisions are made and the assumptions that underpin them.

The implications are concrete. Because these systems integrate multiple data streams and balance competing objectives in real time, their decisions are interconnected and ongoing rather than isolated. Santoro offers a hypothetical example: a unified urban management platform, Zenith Urban Intelligence, that manages multiple city services. Because the system autonomously sets intermediate objectives and prioritizes resources according to performance metrics, it can inadvertently exacerbate existing disparities without any human intervention.

The crux of the issue lies in the oversight fallacy: attaching accountability to visible outcomes while neglecting upstream decision-making processes embedded within the system’s architecture. While formal reviews continue to exist, they do not capture the complexities of how systems resolve trade-offs and objectives. This creates a significant gap between political accountability and operational control, as the priorities and judgments that shape outcomes are often predetermined.

Policymakers face a complex challenge that extends beyond simply overseeing AI systems. Structuring how authority is delegated to these systems becomes paramount, especially as traditional oversight mechanisms prove insufficient in addressing the nuances of algorithmic risk. The concept of “guardrails” has emerged as a potential solution, representing structural limits that can be embedded within systems before deployment. In corporate settings, guardrails help shape system behavior, but their application in public administration requires careful consideration.

To effectively manage the risks associated with adaptive systems in governance, Santoro identifies several categories of guardrails. Objective-function guardrails should dictate what systems are allowed to optimize, ensuring that efficiency does not come at the expense of equity. Data governance guardrails must outline the types of data permissible for use, including requirements for data provenance and monitoring for bias. Additionally, deployment guardrails should regulate how and when systems can act autonomously, establishing thresholds that warrant human review in high-stakes situations.

Moreover, retraining and monitoring guardrails must be defined to determine when a system requires a review or update, allowing for necessary interventions when performance declines. These guardrails are not merely technical specifications; they represent critical institutional design choices that dictate how authority is exercised. The responsibility for establishing these frameworks should be shared among various stakeholders, including legislatures, regulators, city leaders, and administrative bodies.
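As a rough illustration only, the four guardrail categories described above could be written down as explicit limits that are checked before and during deployment. The sketch below is a hypothetical example, not Santoro's proposal or any real system's configuration: the class name, field names, objective and data-source labels, and threshold values are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    """Hypothetical guardrail settings fixed before deployment."""
    allowed_objectives: frozenset    # objective-function guardrail
    allowed_data_sources: frozenset  # data governance guardrail
    review_threshold: float          # deployment guardrail: risk score needing human review
    max_error_rate: float            # retraining/monitoring guardrail


def check_configuration(rails, objectives, data_sources):
    """Return a list of violations found in a proposed system configuration."""
    violations = []
    for name in sorted(set(objectives) - rails.allowed_objectives):
        violations.append(f"objective not permitted: {name}")
    for name in sorted(set(data_sources) - rails.allowed_data_sources):
        violations.append(f"data source not permitted: {name}")
    return violations


def route_decision(rails, risk_score):
    """Send high-stakes decisions to a human; let the rest proceed autonomously."""
    return "human_review" if risk_score >= rails.review_threshold else "autonomous"


def needs_retraining_review(rails, observed_error_rate):
    """Flag the system for review when monitored performance declines."""
    return observed_error_rate > rails.max_error_rate


# Illustrative values only; none of these come from the article.
rails = Guardrails(
    allowed_objectives=frozenset({"response_time", "equity_of_service"}),
    allowed_data_sources=frozenset({"traffic_sensors", "service_requests"}),
    review_threshold=0.8,
    max_error_rate=0.05,
)

violations = check_configuration(
    rails,
    objectives=["response_time", "cost_minimization"],
    data_sources=["traffic_sensors"],
)
```

In this sketch, the configuration check fails because `cost_minimization` is not on the permitted list, which is the kind of upstream constraint the article has in mind: the limit is set by whoever defines `Guardrails`, not by a reviewer looking at outputs afterward.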

In this evolving landscape, procurement processes also play a crucial role in embedding guardrails within AI systems. Vendors should be mandated to demonstrate compliance with defined guardrails as a prerequisite for contract awards, transforming procurement into a governance mechanism rather than a mere purchasing function. By addressing these governance challenges proactively, the public sector can ensure that AI systems operate within a framework that preserves accountability and aligns with democratic principles.

Overall, as municipalities increasingly delegate judgment to adaptive systems, the need for effective governance structures becomes ever more pressing. Guardrails can help reposition accountability upstream, ensuring that critical decisions are structured in a way that reflects public values and priorities. As this dialogue continues, the intersection of technology and governance will undoubtedly shape the future of public administration.
