In 2023, California Congressman Ted Lieu introduced what he dubbed the first piece of federal legislation generated by artificial intelligence. Drafted with ChatGPT, the resolution expressed support for Congress’s focus on AI. That once-novel approach is now rapidly becoming mainstream. The Trump administration is reportedly considering using Google Gemini to draft federal transportation regulations, while the US Department of Education is exploring AI-assisted regulatory drafting.

Several companies are developing tools to help lawmakers analyze and draft legislation. Among them, **Vulcan Technologies**, a startup backed by Y Combinator, is working on a “regulatory operating system.” This platform integrates laws, regulations, and court decisions from various jurisdictions, enabling users to analyze statutory language and generate proposed legislative text with supporting citations. In July, Virginia Governor Glenn Youngkin mandated the use of Vulcan’s technology across all state agencies, with the goal of streamlining regulations by one-third.

The platform is also in use by the US Department of Education and the South Carolina Department of Government Efficiency (DOGESC), which is focused on curbing regulatory overreach. **FiscalNote** has introduced a similar tool named **PolicyNote**, which tracks legislative and regulatory actions, aids in drafting legislation, and forecasts bill outcomes. Although FiscalNote has not disclosed its client list, it claims to serve various entities across federal and state governments.

However, the rise of AI in legislative drafting raises significant concerns among experts. Currently, there are no regulations restricting the use of AI for writing laws, nor any requirement that legislative text be drafted by humans. Critics warn that the technology may be employed without a comprehensive understanding of its limitations and potential biases. Kay Firth-Butterfield, CEO of Good Tech Advisory, voiced concern that society is passively allowing AI to shape laws without sufficient scrutiny. “The ability for the general public to actually question how and why the tools were used doesn’t exist,” she stated.

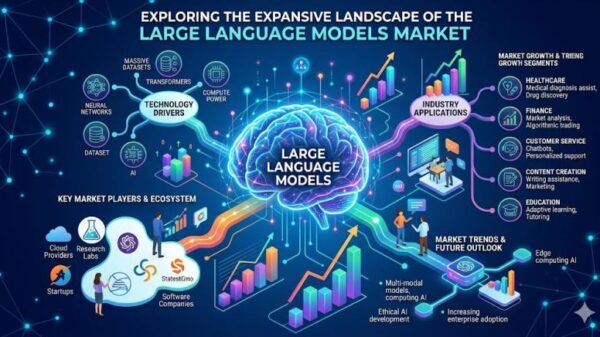

The appeal of AI tools for lawmakers, particularly those who may lack legal expertise, is undeniable. Vermont House member Monique Priestley reported that she frequently uses large language models (LLMs) to simplify complex bills into more digestible summaries for her constituents. Additionally, she employs AI during the drafting process to analyze data protection laws and gather insights from other states.

Despite the advantages, experts remind lawmakers that initial drafts are typically vetted by experienced staff before reaching the final version. Ryan Calo, a law professor at the University of Washington, emphasized that while AI can enhance efficiency, it is not a substitute for thorough review. “AI is a tool,” he said, cautioning against overreliance on automated systems.

Experts also question the quality and accountability of AI-generated legislative text. Cary Coglianese, a law professor at the University of Pennsylvania, pointed out that LLMs cannot formulate the nuanced policy judgments necessary for effective legislation. “Unique policy choices that call for more than just putting words together really have to map onto the problems in the world,” he explained. The risk of overconfidence is also significant, as lawmakers may be tempted to treat AI outputs as reliable without adequate verification. “The large language models tend not to sufficiently display that uncertainty,” he added.

As AI technologies evolve in legislative contexts, maintaining human oversight remains paramount. Policymakers and staffers worry that lobbyists might use AI to draft amendments that bypass legal scrutiny, leading to legislation that has not been thoroughly reviewed. Priestley also voiced concerns about the effect of AI on public comments submitted to regulatory agencies, noting that a flood of AI-generated feedback could overwhelm the review process and drown out legitimate concerns.

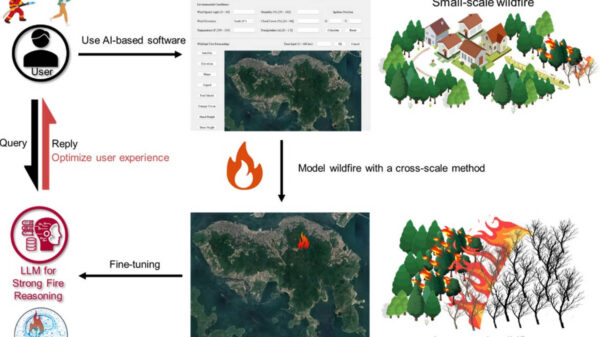

As the use of AI in legislative processes expands, the challenge lies in designing effective validation methods to ensure responsible use. Coglianese suggested that a side-by-side comparison of AI-generated and human-generated legislative reviews could provide insights into their respective strengths and weaknesses. “There’s a lot of nuance here,” he noted. “These tools can be very positive and constructive, but they can also be abused.”

Looking ahead, the prospect of lawmakers employing AI to draft regulations for AI technologies themselves raises ethical questions. Because AI tools are built by companies that will be subject to those future regulations, the potential for bias and manipulation looms large. Whether AI can objectively evaluate its own risks and limitations is a question Priestley keeps returning to: “It’s always in the back of my mind,” she said. The path forward will require careful consideration of how AI is integrated into the legislative landscape, ensuring that human oversight remains an essential part of the process.
