As artificial intelligence (AI) continues to permeate the healthcare sector, experts emphasize the urgent need to address governance, ethics, and regulatory considerations. AI promises better patient outcomes and greater clinical efficiency, but stakeholders are acutely aware of the pitfalls that accompany its deployment in sensitive healthcare environments.

Jason Prestinario, CEO of Particle Health, highlights the importance of robust regulations to ensure AI models are specifically trained for medical decision-making. He notes that this is particularly vital for consumer-centric AI healthcare tools like ChatGPT Health. Equally important, he states, is the interoperability of AI with Electronic Health Records (EHR), as a steady stream of data is essential for the ongoing improvement of these models.

Ben Hilmes, CEO at Healthcare IT Leaders, echoes this sentiment, asserting that successful AI implementations require leadership beyond technical expertise. He argues that trust must be built through transparent governance and clear accountability, emphasizing that AI should enhance, not replace, clinical judgment. Without this foundational trust, even the most advanced technologies risk being underutilized by clinicians.

Addressing concerns over the “black box” nature of AI, Sam Gopal, Senior Vice President of Product & Technology at Interwell Health, argues for transparency and human oversight in AI deployments. He stresses that AI should serve as decision support, with clinicians involved at every stage, from model development to real-world application, to ensure clinically sound insights. Gopal also underscores the necessity of strict data governance, urging healthcare organizations to clearly define how patient data is used and to maintain secure environments.

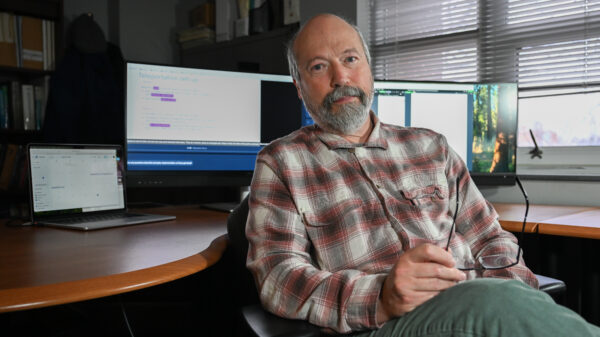

Daniel Vreeman, Chief Standards Development Officer and Chief AI Officer at Health Level Seven (HL7) International, points to governance as a critical challenge. He stresses the need for a clear understanding of data quality and monitoring of AI systems throughout their lifecycle. Vreeman advocates for the adoption of open, interoperable data standards, arguing that AI should be treated like any other clinical infrastructure, subject to rigorous oversight and continuous evaluation.

Gokul Mohan, CEO at CareHarmony, emphasizes that AI should be designed to support clinicians, not replace them. He calls for transparency in data usage and insight generation, as well as vigilance against historical biases that may be reflected in data. Mohan points out that ongoing oversight is essential, recommending that clinician involvement and regular performance evaluations become standard practice.

Deepak Prakash, Co-Founder and CTO at Sonio, stresses that healthcare organizations must prioritize compliance with stringent health tech security protocols. He advocates for regular tests of digital infrastructure and timely security updates as vital safeguards against emerging cyber threats. Meanwhile, Lisa Israelovitch, Co-Founder and CEO at AssistIQ, highlights the importance of HIPAA compliance and robust cybersecurity measures in crafting a safe AI-enhanced healthcare platform.

From a data perspective, George Dealy, VP of Healthcare Applications at Dimensional Insight, warns of the risks of over-reliance on AI-generated information. He insists on thorough validation against established standards so that AI-driven insights remain reliable and trustworthy, a point that reinforces the case for effective governance.

Jackie Mattingly, Senior Director of Consulting Services at Clearwater Security, calls for a shared responsibility for AI governance across clinical, legal, compliance, and operational teams, rather than relegating it solely to IT departments. She stresses that patient safety should always be prioritized, with a transparent process in place for evaluating AI’s impact on patient care and EHR data.

Firoze Lafeer, SVP of Data Engineering at Revecore, adds that protecting sensitive data is both a legal and ethical necessity. He notes that addressing biases in AI training data is essential to prevent reinforcing existing social inequities. Lafeer advocates for rigorous testing and validation across diverse populations to ensure AI tools lead to equitable health outcomes.

In this complex landscape, Heather Bassett, Chief Medical Officer at Xsolis, emphasizes accountability as a cornerstone of effective governance. She argues that clinicians and compliance leaders must be integral to the AI lifecycle, from use-case selection to ongoing monitoring. Bassett advocates for a risk-informed framework that prioritizes transparency and real-world validation to build trust in AI technologies.

As healthcare organizations navigate the challenges of AI implementation, a commitment to responsible design, ethical oversight, and rigorous validation will be paramount. The ongoing dialogue among industry leaders suggests that while AI holds immense potential, its deployment must prioritize patient safety and equitable access to care.
