Voice AI, often seen as a straightforward interface where users speak and machines reply, is underpinned by a sophisticated network of technologies. This intricate ecosystem ensures that the seamless user experience, which appears simple, is actually the product of multiple components functioning in concert. The architecture of a Voice AI system is akin to an orchestra, where each stage—from capturing sound to delivering a response—must operate at peak performance. A failure in any part of this process can undermine the entire interaction, underscoring the necessity for efficiency across the pipeline.

The journey of a Voice AI system begins with Automatic Speech Recognition (ASR), which converts spoken language into text. For the system to appear human-like, it must accurately capture what the user said, accommodating various accents, speaking speeds, and background noise. An essential aspect of ASR is mastering end-pointing, or the ability to discern when a user has finished speaking. If the system ends the turn too early, it cuts the user off; if it waits too long, the reply arrives after an awkward pause. Either way, the interaction becomes disjointed. Even the most advanced AI cannot compensate for errors at this initial stage, making reliable speech-to-text functionality fundamental for building conversational trust.
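As a rough illustration of the end-pointing idea described above, the sketch below marks the end of a turn once speech has been heard and is followed by a sustained run of quiet audio frames. It is a minimal, energy-based approach under stated assumptions, not how any particular ASR product works: the `Endpointer` class, its threshold, and the frame counts are all hypothetical, and production systems typically combine acoustic and linguistic cues.

```python
from dataclasses import dataclass


@dataclass
class Endpointer:
    """Hypothetical silence-timeout endpointer (illustrative values only)."""

    energy_threshold: float = 0.01   # assumed mean-square energy that counts as speech
    silence_frames_needed: int = 25  # e.g. 25 frames of 20 ms each is about 500 ms
    _heard_speech: bool = False
    _silent_run: int = 0

    def feed(self, frame: list[float]) -> bool:
        """Consume one audio frame; return True once the turn appears finished."""
        energy = sum(s * s for s in frame) / len(frame) if frame else 0.0
        if energy >= self.energy_threshold:
            # Speech detected: remember it and reset the silence counter.
            self._heard_speech = True
            self._silent_run = 0
        elif self._heard_speech:
            # Only count silence that follows speech, so leading silence is ignored.
            self._silent_run += 1
        return self._heard_speech and self._silent_run >= self.silence_frames_needed
```

The trade-off sits in `silence_frames_needed`: a small value makes the system respond quickly but risks cutting off a user who pauses mid-thought, while a large value is safer but adds delay to every turn.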

Once the speech has been transcribed, the Large Language Model (LLM) takes center stage, generating responses that are not only accurate but also contextually relevant. Effective Voice AI relies on contextual persistence, allowing it to remember details from previous turns in the conversation. This capability is crucial for maintaining coherence and avoiding repetitive responses. The challenge lies in balancing raw computational power with the nuanced art of narrative flow, ensuring that interactions feel both natural and engaging.
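One simple way to picture contextual persistence is a rolling window of recent turns that is sent along with each new user utterance. The sketch below assumes a chat-style message format (`role` and `content` keys) and a hypothetical `ConversationMemory` class; real systems may instead summarize older turns or store facts separately.

```python
from collections import deque


class ConversationMemory:
    """Hypothetical rolling memory that keeps only the most recent turns."""

    def __init__(self, max_turns: int = 6):
        # deque with maxlen silently drops the oldest turn when full.
        self._turns = deque(maxlen=max_turns)

    def add(self, role: str, text: str) -> None:
        """Record one finished turn (for example 'user' or 'assistant')."""
        self._turns.append({"role": role, "content": text})

    def build_prompt(self, system_prompt: str, user_text: str) -> list[dict]:
        """Return the message list to send to the model for the next reply."""
        return [
            {"role": "system", "content": system_prompt},
            *self._turns,
            {"role": "user", "content": user_text},
        ]
```

Bounding the window keeps the prompt short, which matters for voice because longer prompts generally take longer to process before the first word of the reply is available.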

The final step in this complex process is Text-to-Speech (TTS), which transforms the AI-generated text into natural-sounding audio. Recent advancements in voice synthesis have produced speech that is expressive and human-like, enhancing user engagement. The underlying infrastructure that connects these components is equally important, as it enables the real-time communication essential for maintaining the flow of conversation. With real-time streaming, users can start hearing a response before the entire reply has been generated, avoiding the long pauses that would otherwise break immersion.
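The streaming behavior described above can be sketched as a small generator that groups incoming LLM tokens into sentences, so each sentence can be handed to TTS as soon as it is complete rather than after the whole reply. The function name `sentence_chunks` and the punctuation-based rule are assumptions made for illustration; the rule is deliberately naive and would, for instance, mis-split abbreviations.

```python
from typing import Iterable, Iterator

SENTENCE_END = (".", "!", "?")


def sentence_chunks(tokens: Iterable[str]) -> Iterator[str]:
    """Yield complete sentences from a token stream as soon as they close."""
    buffer = ""
    for token in tokens:
        buffer += token
        # Naive boundary check: flush when the buffer ends in terminal punctuation.
        if buffer.rstrip().endswith(SENTENCE_END):
            yield buffer.strip()
            buffer = ""
    # Flush whatever is left when the stream ends without punctuation.
    if buffer.strip():
        yield buffer.strip()
```

For example, feeding the tokens `["Hello", " there", ".", " How", " are", " you", "?"]` yields `"Hello there."` first, which a TTS engine could begin speaking while the second sentence is still being generated.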

In contemporary applications, Voice AI is evolving into a multimodal experience, integrating visual elements such as digital avatars to complement auditory interactions. This addition enhances emotional resonance and makes AI feel less like a mere tool and more like a collaborative partner. This evolution is particularly beneficial in high-stakes environments such as healthcare and education, where a visual presence can significantly improve user experience and comfort.

The real challenge in Voice AI development is not merely advancing individual components, but orchestrating a cohesive experience. Achieving low latency is vital, as each step (listening, processing, and speaking) must occur within milliseconds. The complexity of managing the transitions between ASR, LLM, and TTS requires sophisticated engineering, highlighting the importance of real-time communication infrastructure and orchestration layers in conversational AI.

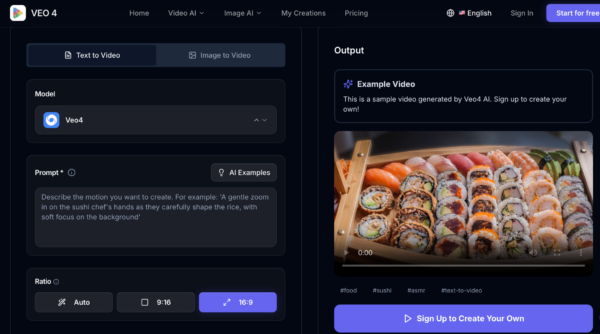

To navigate this complexity, many organizations are turning to specialized infrastructure platforms, such as Agora, that are designed to support real-time conversational experiences. These platforms serve as a backbone, integrating various AI services to ensure uninterrupted conversation flow while providing developers with the flexibility to customize models for their specific needs. While all-in-one solutions may offer a quick start for simpler projects, they often lack the depth required for more complex applications. As these technologies mature, businesses increasingly seek adaptable architectures that can accommodate unique brand voices and evolving AI capabilities without sacrificing performance.

Scaling Voice AI presents its own set of infrastructure challenges. Unlike traditional web applications that handle sporadic requests, Voice AI demands persistent, stateful connections that remain active throughout user interactions. The system must coordinate multiple heavy processes simultaneously, ensuring smooth operation even as user bases expand. Scalability extends beyond merely accommodating more users; it is about preserving high-quality, human-like interactions regardless of volume.

As Voice AI reshapes how we engage with technology, it is crucial to recognize that a powerful AI model is just one component of the equation. Creating an experience that genuinely feels human requires a meticulously orchestrated technological stack, where communication, intelligence, and delivery are aligned for optimal performance.
