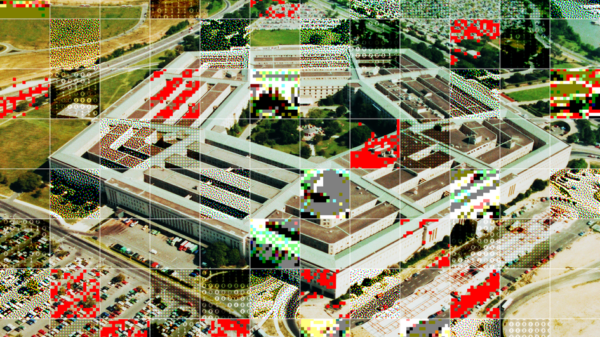

In April 2017, Deputy Defense Secretary Bob Work established an Algorithmic Warfare Cross-Functional Team that launched Project Maven, an initiative aimed at incorporating artificial intelligence (AI) and machine learning into military intelligence and combat operations. The effort marked a pivotal moment in the evolution of AI technologies, as they moved from early development to large-scale integration. Project Maven has since become one of the most prominent of more than 800 AI projects undertaken by the Pentagon, alongside some 300 machine learning tools developed by the CIA and by international bodies, including the United Nations and non-governmental organizations.

As AI technologies gain international traction and showcase groundbreaking applications, organizations such as the World Food Programme (WFP), the International Rescue Committee (IRC), and the United Nations High Commissioner for Refugees (UNHCR) have adopted these tools in humanitarian contexts. Humanitarian organizations are particularly attracted to the efficiency that AI solutions offer in crisis management. However, concerns have emerged regarding the ethical implications of such technologies, particularly in relation to their ability to adhere to evolving humanitarian standards.

On October 9, 2023, the Israeli military imposed a “complete siege” on the Gaza Strip, resulting in widespread destruction and a humanitarian crisis. The operation cut off civilian access to essential resources such as food, electricity, and fuel, leading to the collapse of critical infrastructure, including hospitals and communication systems. The conflict caused approximately 9,000 fatalities, predominantly from airstrikes, hunger, and disease, left 25,000 injured, and displaced 70% of residents. These figures highlight the urgent need for better disaster mapping, an area where AI capabilities are proving invaluable.

One of AI’s most significant applications is the precise mapping of disaster sites. This capability relies on machine-learning models that compare satellite images taken before and after an event and flag the locations where the landscape has changed. Humanitarian organizations, such as the United Nations Satellite Centre (UNOSAT), use this analysis to identify areas needing reconstruction and to develop actionable strategies. In Gaza, reports generated through this technology tracked destroyed infrastructure and mapped civilian displacement patterns, allowing aid resources to be allocated more efficiently.
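The before-and-after comparison described above can be illustrated with a deliberately simplified sketch. It is not UNOSAT's method: operational systems rely on trained models and carefully aligned imagery, whereas this example substitutes plain per-pixel differencing with a fixed threshold. The function names, the 0-to-1 brightness scale, and the threshold value are all assumptions made for illustration.

```python
from typing import List

Grid = List[List[float]]  # hypothetical image: rows of brightness values in [0, 1]


def change_mask(before: Grid, after: Grid, threshold: float = 0.3) -> List[List[bool]]:
    """Mark each pixel whose brightness changed by more than `threshold`.

    Assumes both images are already aligned and cover the same area.
    """
    if len(before) != len(after) or any(
        len(row_b) != len(row_a) for row_b, row_a in zip(before, after)
    ):
        raise ValueError("before and after grids must have the same shape")
    return [
        [abs(a - b) > threshold for b, a in zip(row_b, row_a)]
        for row_b, row_a in zip(before, after)
    ]


def changed_fraction(mask: List[List[bool]]) -> float:
    """Return the share of pixels flagged as changed (0.0 for an empty mask)."""
    total = sum(len(row) for row in mask)
    if total == 0:
        return 0.0
    return sum(cell for row in mask for cell in row) / total


# Toy 3x3 scene: bright cells stand in for intact rooftops, dark cells for ground.
before_image = [
    [0.8, 0.8, 0.2],
    [0.8, 0.8, 0.2],
    [0.2, 0.2, 0.2],
]
after_image = [
    [0.8, 0.3, 0.2],
    [0.3, 0.3, 0.2],
    [0.2, 0.2, 0.2],
]

mask = change_mask(before_image, after_image)
print(f"Share of area flagged as changed: {changed_fraction(mask):.0%}")
```

A fixed threshold like this would also flag shadows, seasonal vegetation, and cloud cover, which is one reason real damage assessments depend on trained models and human review rather than raw differencing.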

Despite its advantages, the use of AI in satellite image tracking raises ethical concerns, particularly regarding civilian privacy rights. In recent years, the United Nations General Assembly (UNGA) has voiced apprehension over the rapid advancement of AI technologies, which may enhance the capacity of governments and corporations to conduct surveillance and collect data. The UNGA has characterized “unlawful or arbitrary surveillance” as a highly intrusive act that infringes on the privacy rights of people who have not consented to it.

Current high-resolution satellite technology can detect features as small as 31 centimeters (about one foot), enabling it to monitor individual movements, identify faces, and produce detailed images of private property. Humanitarian organizations must strike a delicate balance between using this technology for legitimate purposes and avoiding the exploitation, real or perceived, of sensitive data. The adoption of AI therefore carries a complex risk for these organizations: neglecting ethical considerations may inadvertently jeopardize the welfare of the civilians they aim to help.

Beyond post-disaster relief, AI technology has demonstrated efficacy in providing pre-disaster assistance to vulnerable communities. Machine learning models combined with cloud-based data processing tools have led to innovations such as Google’s Flood Forecasting System, which analyzes weather patterns, and California’s Earthquake Warning System, designed to monitor seismic activity and predict natural disasters. The ability to accurately forecast such unpredictable events has become invaluable for humanitarian organizations in planning proactive resource allocation.

In recent years, the UNHCR has harnessed AI to develop forecasting models aimed at anticipating refugee movements to inform planning and resource allocation. The organization’s 2022 model, Project Jetson, utilized data related to climate, remittances, and market prices to predict levels of forced displacement in Somalia, allowing for timely interventions in response to escalating violence and conflict. Similarly, the WFP has implemented a model to project food insecurity levels in regions affected by conflict, aiding in the understanding and response to anticipated cases of undernourishment.
