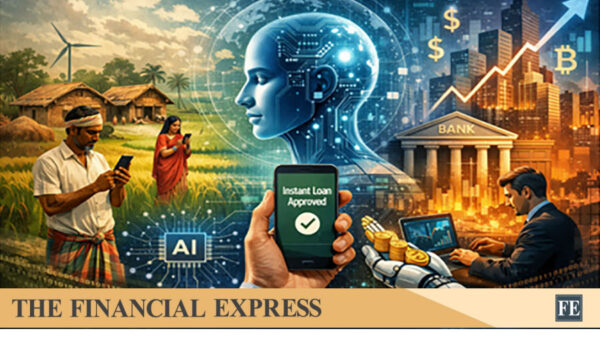

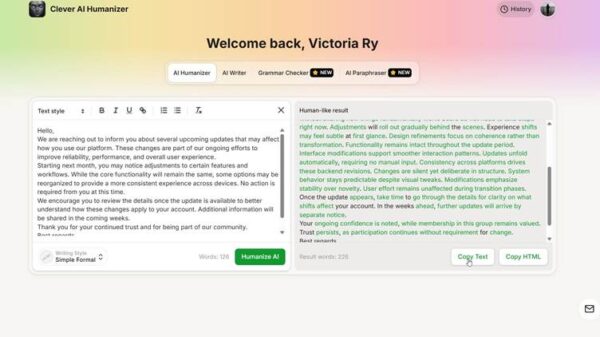

Generative technology is reshaping how information is created and consumed. These tools offer significant opportunities for creativity and efficiency, yet they also raise pressing concerns about truth and authorship. In academic, journalistic, and professional settings, the ability to distinguish human-written content from machine-generated text has become vital for maintaining trust. Using AI-content detectors has become standard practice among editors and educators, who rely on them to check the originality and authenticity of published or graded materials. As synthetic media proliferates, these verification tools serve as crucial guardians of digital integrity.

Current language models, despite their advanced capabilities, leave subtle statistical traces that detectors can analyze. Models tend to favor the most likely word choices, which produces a predictability often absent from human writing. Detection tools look for these patterns, including stylistic uniformity and a lack of the emotional nuance that typically characterizes human expression. Where a human writer might use an unusual metaphor or an irregular sentence structure for emphasis, algorithms more often produce smoother, more consistent text. Identifying these subtle differences is a complex challenge that requires ongoing adaptation as the technology evolves.
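To make the idea of "stylistic uniformity" concrete, here is a minimal sketch of one crude signal: how much sentence length varies within a text. This is a toy heuristic for illustration only, not how any particular detector works; real tools typically rely on token probabilities from a language model. The function names `sentence_lengths` and `burstiness` and the two sample strings are assumptions made up for this example.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., !, ? and return the word count of each non-empty sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std dev / mean).

    Lower values mean more uniform sentences. Returns 0.0 when there are
    fewer than two sentences, since variation is not meaningful there.
    This is a hypothetical illustrative score, not a calibrated detector.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Hypothetical sample texts: one with identical sentence lengths,
# one that mixes very short and long sentences.
uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = (
    "Stop. The cat, startled by the sudden noise from the street, "
    "leapt onto the shelf. Silence."
)

print(f"uniform: {burstiness(uniform):.2f}")
print(f"varied:  {burstiness(varied):.2f}")
```

A low score on its own proves nothing about authorship: plenty of human writing is uniform, and generated text can be prompted to vary. A signal like this would only ever be one weak input among many.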

The stakes are particularly high for digital marketers and website owners, because search engines are increasingly adept at identifying low-value, automatically generated content that fails to give users meaningful answers. Relying solely on automated text without human oversight can lead to severe ranking penalties, potentially undermining months of diligent work. By verifying the authenticity of their articles, brands can make sure they deliver unique value and uphold a high standard of quality. Authenticity significantly influences how search engines assess authority, making human-centric content a critical asset for long-term digital success.

In academia, the proliferation of synthetic text has prompted a reevaluation of how students are assessed. The objective is not to curtail the use of technology but to foster critical thinking and an individual voice. Verification tools help educators identify cases where students may depend too heavily on automated assistance, opening the way for essential discussions about ethics and learning. In research, confirming that a paper or study is the product of human analysis, rather than a mere accumulation of machine-predicted text, is essential for advancing scientific knowledge and preserving credible scholarship.

As society navigates this evolving digital landscape, the value of human creativity and original thought has never been more apparent. While AI tools can serve as remarkable assistants, they cannot replicate the unique perspectives and emotional depth that individuals bring to their work. A balanced relationship with these technologies requires both curiosity and skepticism. By using verification tools to uphold authenticity, stakeholders can harness the advantages of automation while keeping communication transparent and truthful. Ultimately, in this information age, the most valuable asset will be the truth, and safeguarding it is a collective responsibility.

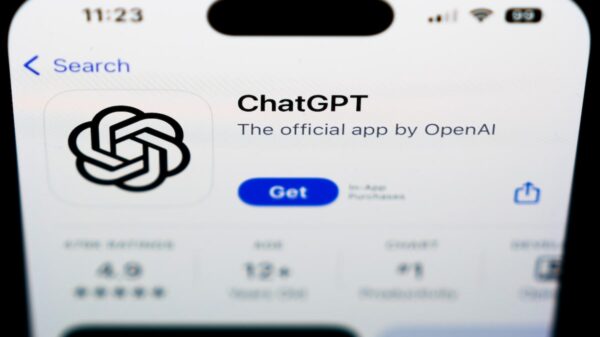
