Central banks and financial regulators are facing challenges in keeping up with the rapid adoption of artificial intelligence (AI) by financial institutions, according to a recent survey conducted by the Cambridge Centre for Alternative Finance in collaboration with the Bank for International Settlements and the International Monetary Fund. The findings raise serious concerns about the capability of regulatory bodies to effectively monitor and mitigate risks associated with sophisticated AI models, such as **Anthropic’s** **Mythos**. The research highlights a significant lag in AI adoption by supervisory authorities compared to the financial industry, potentially jeopardizing global financial stability.

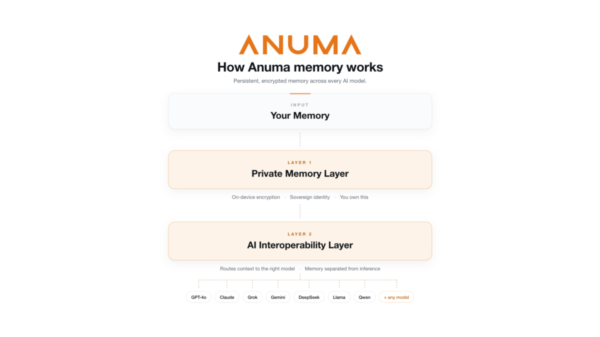

The survey reveals that financial firms are integrating AI technologies at more than double the pace of their regulatory counterparts. Only 20% of regulators reported "advanced AI adoption," and just 24% are actively collecting data on how AI is being integrated across the industry. More alarmingly, 43% of respondents said they have no plans to begin collecting data on AI practices within the next two years. This "empirical blind spot," as the report calls it, is a barrier to effective oversight in a rapidly evolving technological landscape.

Advanced systems like **Mythos** are perceived as a significant threat because they can exploit software vulnerabilities at scale, which could undermine the effectiveness of traditional human governance structures in banking. Regulators maintain that financial institutions must be held accountable for any harm caused, but accountability becomes harder to assign when autonomous systems are supplied and managed by third-party vendors. Given the industry's growing dependence on powerful AI models, this calls for a reevaluation of existing accountability frameworks.

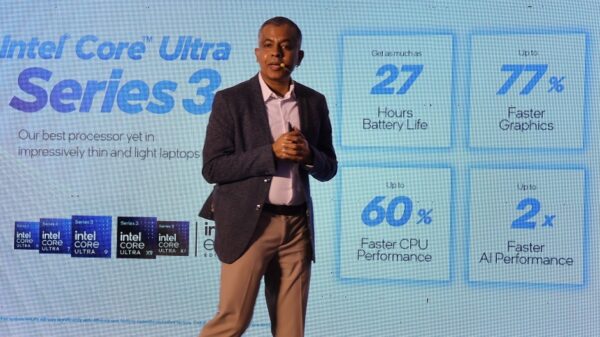

The report also underscores the financial sector's growing reliance on a small number of AI providers: 69% of respondents said they use **OpenAI** services. This concentration creates a "critical third-party risk consideration" that could expose the global financial system to vulnerabilities and resilience challenges. The findings suggest that supervisory authorities may need to build their own AI capabilities to keep pace with the technologies they are tasked with overseeing.

As AI continues to reshape the financial landscape, the gap between regulatory capabilities and industry practice raises questions about the effectiveness of current oversight mechanisms. The report calls on regulators to act urgently to improve their understanding and management of AI-related risks, both by strengthening data collection and by taking a more proactive approach to integrating AI into their own operations.

As technological innovation accelerates, robust regulatory frameworks become increasingly critical. How well financial regulators adapt to the challenges posed by AI will play a crucial role in safeguarding financial stability and protecting consumers. The intersection of AI technology and regulatory oversight is likely to remain a focal point for policymakers and industry leaders alike.
