Nvidia has moved quickly in the South Korean market, releasing 7 million Korean-language synthetic personas and introducing the multimodal model Nemotron3 Nano within just one month. Together, the releases amount to Nvidia entering the market with a complete package of models, data, frameworks, and hardware, a strategy that echoes how its CUDA framework reshaped the graphics processing unit (GPU) industry.

On April 20, Nvidia published the ‘Nemotron-Personas-Korea’ dataset on Hugging Face, the first large-scale Korean-language persona dataset. Licensed under CC BY 4.0, which permits commercial use, the dataset contains entries synthesized from sources including Statistics Korea, the Supreme Court, the National Health Insurance Service, and others. Offering such a dataset for free is seen as a strategic move to dominate the Korean AI landscape.

The release of the Korean-language dataset is reminiscent of how CUDA eliminated cost barriers for developers, effectively sidelining competing technologies. By providing Nemotron, Nvidia aims to eliminate financial obstacles for models, data, and frameworks, thereby making its hardware the most efficient environment for executing these resources.

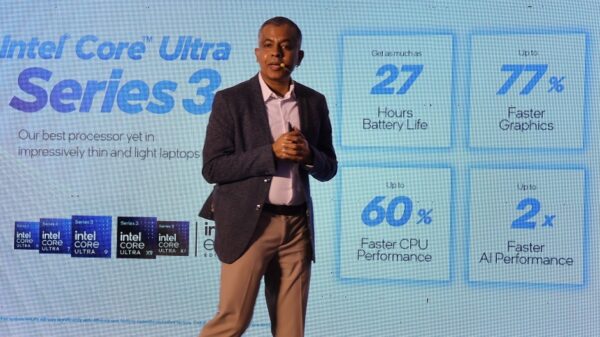

At a Seoul National University lecture on the 28th, Brian Catanzaro, Nvidia’s vice president of applied research, stated, “Whenever good things happen in AI, it is an opportunity for Nvidia to grow.” Following the release of Nemotron3 Nano (30B), Nvidia is also set to introduce Nemotron3 Ultra (approximately 500B) in the coming weeks. The newly added Nemotron3 Nano Omni is a multimodal reasoning model, capable of processing text, images, audio, and video, boasting a throughput nine times higher than similar open omni models.

Catanzaro highlighted that the Super model achieved the top rank among open models in the MMLU Pro benchmark without pre-optimization, and that cumulative downloads of the Nemotron3 family surpassed 50 million over the past year. Beyond model competitiveness, the next layer is data, where the 7 million Korean personas fit into Nvidia’s global synthetic dataset strategy. Catanzaro noted that similar datasets in other languages have proven effective in product development, which justified a dedicated effort for Korea.

Attributes such as name, gender, age, marital status, education level, occupation, and region were synthesized to reflect the actual demographic distribution of the Korean population. Catanzaro added that refining the pretraining dataset has improved training efficiency on the same hardware fourfold over the past year.
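As a rough illustration of what matching a demographic distribution means in practice, the sketch below draws persona attributes by weighted sampling and then checks that the sampled shares track the target shares. It is not Nvidia's pipeline: the function names, the two attributes chosen, and every weight are hypothetical placeholders rather than real Korean statistics, and sampling each attribute independently is a simplifying assumption.

```python
import random
from collections import Counter

# Hypothetical target shares for illustration only; not real statistics.
REGION_WEIGHTS = {"Seoul": 0.4, "Busan": 0.2, "Other": 0.4}
AGE_BAND_WEIGHTS = {"20-39": 0.3, "40-59": 0.4, "60+": 0.3}


def sample_personas(n, seed=0):
    """Draw n personas, sampling each attribute from its target shares.

    Attributes are sampled independently here, which is a simplification;
    a realistic generator would also model how attributes correlate.
    """
    rng = random.Random(seed)
    regions = rng.choices(
        list(REGION_WEIGHTS), weights=list(REGION_WEIGHTS.values()), k=n
    )
    age_bands = rng.choices(
        list(AGE_BAND_WEIGHTS), weights=list(AGE_BAND_WEIGHTS.values()), k=n
    )
    return [{"region": r, "age_band": a} for r, a in zip(regions, age_bands)]


def empirical_share(personas, attribute):
    """Return the observed share of each value of one attribute."""
    counts = Counter(p[attribute] for p in personas)
    total = len(personas)
    return {value: count / total for value, count in counts.items()}


if __name__ == "__main__":
    personas = sample_personas(20_000, seed=1)
    print(empirical_share(personas, "region"))
    print(empirical_share(personas, "age_band"))
```

With enough samples the observed shares settle close to the targets, which is the property the article describes for the synthesized attributes.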

To facilitate the application of these powerful models and precise datasets, Nvidia has developed the Nemo framework. The company released its entire post-training pipeline, which includes supervised fine-tuning, reinforcement learning based on reward models, and more. Additionally, Nvidia introduced ‘Pivot RL’, an algorithm that accelerates processing by 5.5 times, alongside a multi-domain policy distillation technique. Catanzaro emphasized the benefits for developers, stating, “If you can start from open-source technology and customise it, the option value increases.”

The final element of Nvidia’s strategy is hardware, the one part not included in the free offerings. Catanzaro remarked, “If Nvidia did not deeply understand neural network architectures, it would not have been able to make Blackwell.” NVLink 72, newly introduced in the Blackwell generation, allows memory access among 72 GPUs and is designed to maximize efficiency for mixture-of-experts (MoE) models. Nemotron3’s ‘Latent MoE’ structure is in turn tailored to take advantage of NVLink 72.

South Korean companies now face a dilemma between short-term efficiency and long-term dependence on Nvidia’s ecosystem. The ideal environment for executing the models, data, and frameworks released by Nvidia is indeed its own GPUs. Catanzaro’s coalition model aims to establish de facto standards through collaborative development with large global players, contrasting with the monetization strategies of companies like OpenAI and Anthropic, which rely on API sales for closed models.

This lock-in structure creates tension with the South Korean government’s sovereign AI policy, which advocates autonomy through native language, data, and infrastructure. A foreign company synthesizing Korean-language data and distributing it for free, while also being the most efficient provider of the necessary hardware, raises questions about AI sovereignty. The Korean-language persona dataset offers an immediate resource for training in-house models, but reliance on Nvidia’s Nemo framework and GPU stack risks growing as use of the data expands.

As the landscape evolves, local players such as Naver, Kakao, and LG AI Research will need to balance customizing the Nemotron base models for immediate gains against avoiding long-term dependency on Nvidia. An industry official remarked, “The structure itself, where a foreign company reconstructs Korean data and then distributes it back domestically, suggests the need to reorganise the sovereign AI initiative.”